| Multi-point evaluation in higher dimensions |

|

| February 2, 2012 |

|

. This work has

been partly supported by the French

. This work has

been partly supported by the French

. This work has

also been supported by NSERC and the Canada Research Chairs

program.

. This work has

also been supported by NSERC and the Canada Research Chairs

program.

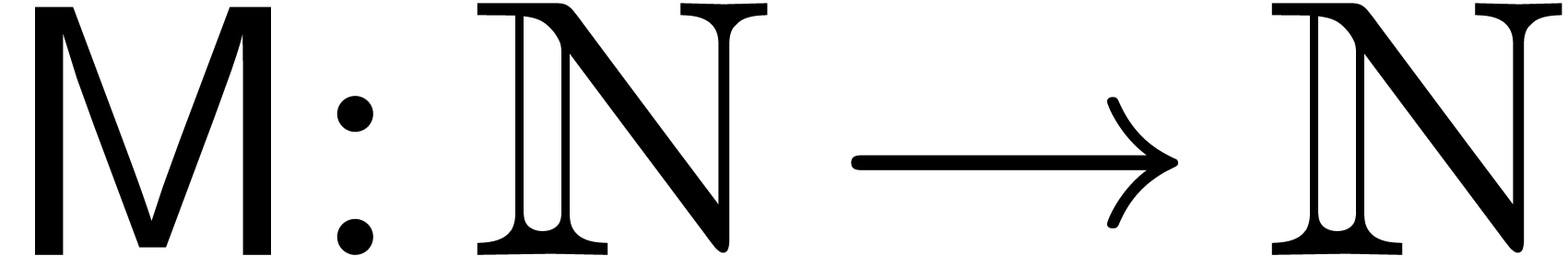

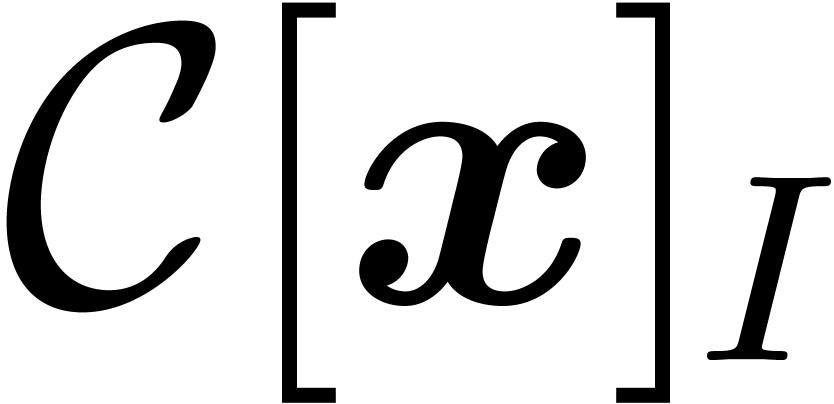

In this paper, we propose efficient new algorithms for

multi-dimensional multi-point evaluation and interpolation on

certain subsets of so called tensor product grids. These

point-sets naturally occur in the design of efficient

multiplication algorithms for finite-dimensional

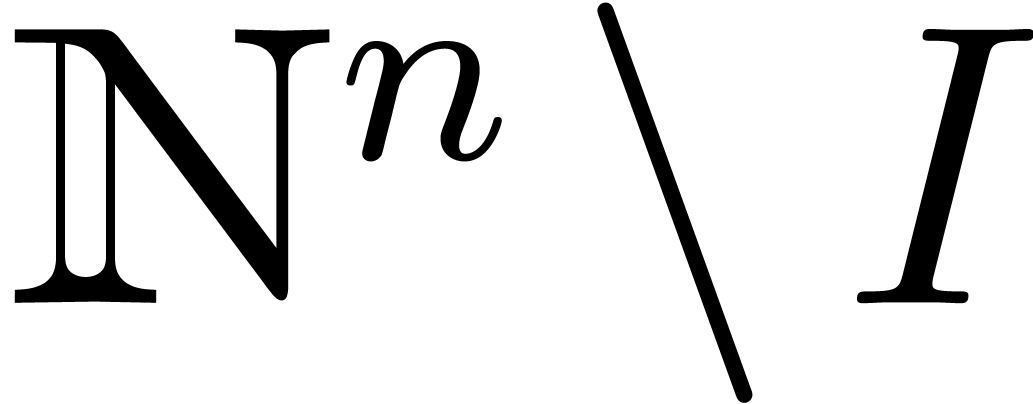

|

The complexity of our algorithms will be measured by counting base field operations: we do not consider numerical issues (they may anyway be irrelevant, if e.g. our base field is a finite field), and do not discuss the choice of data structures or index manipulation issues.

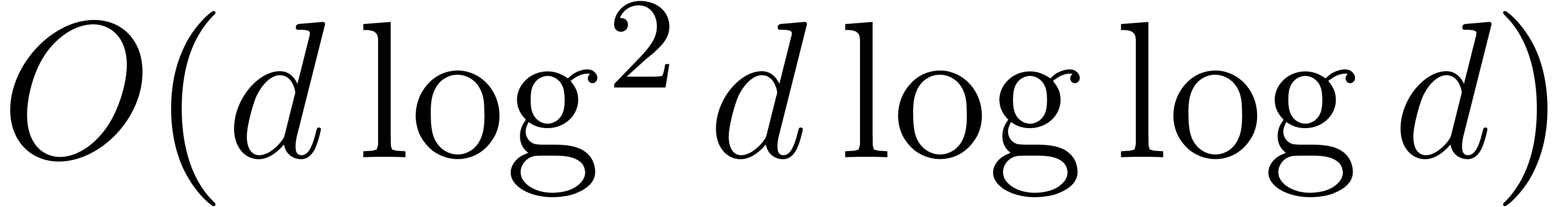

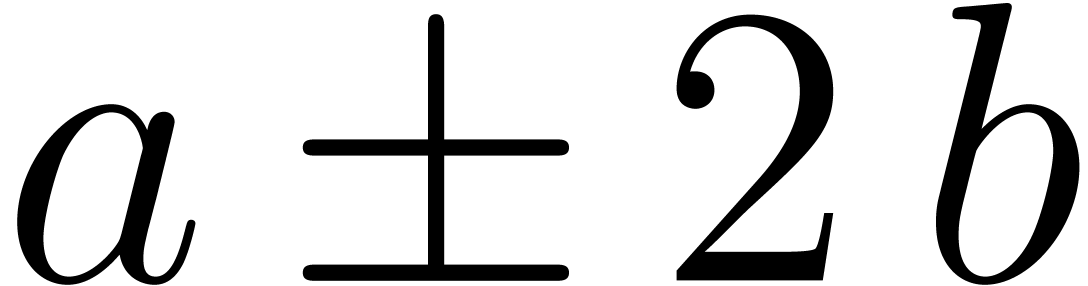

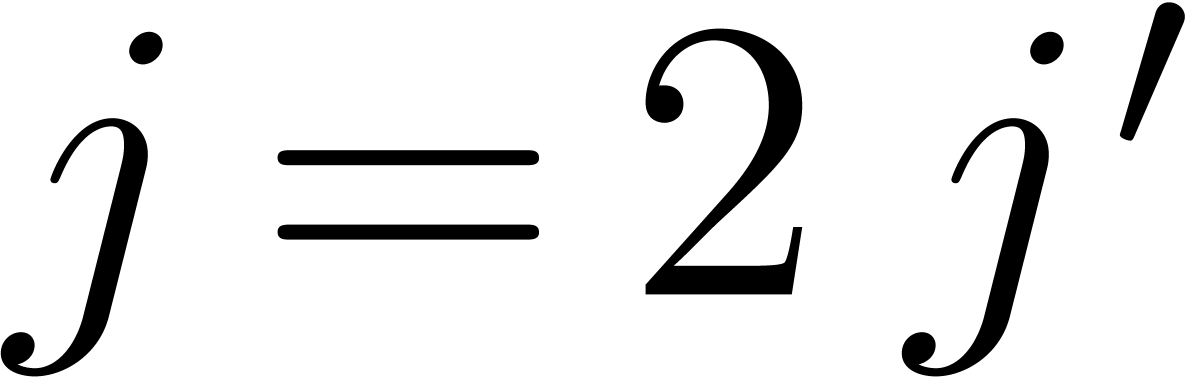

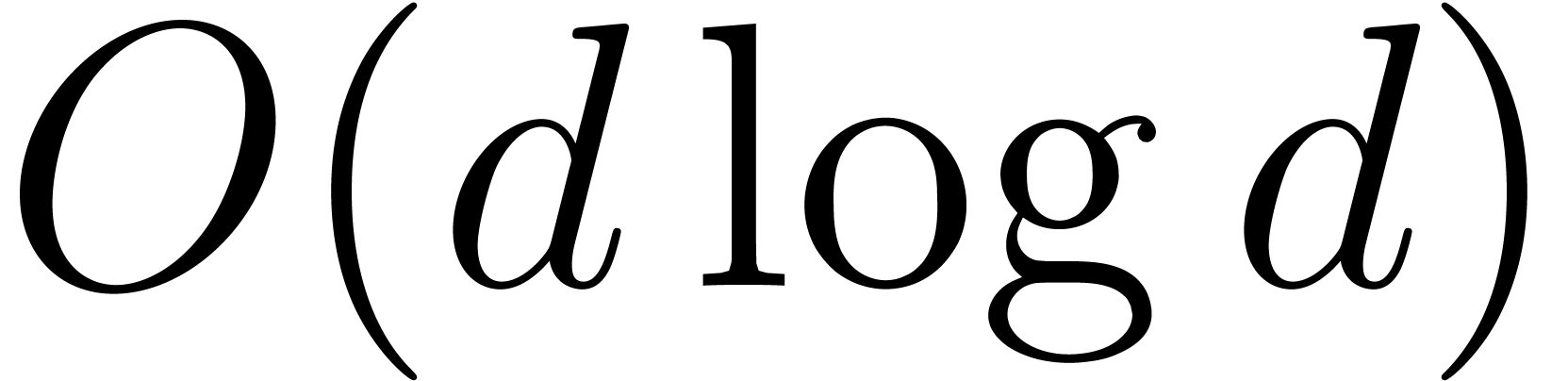

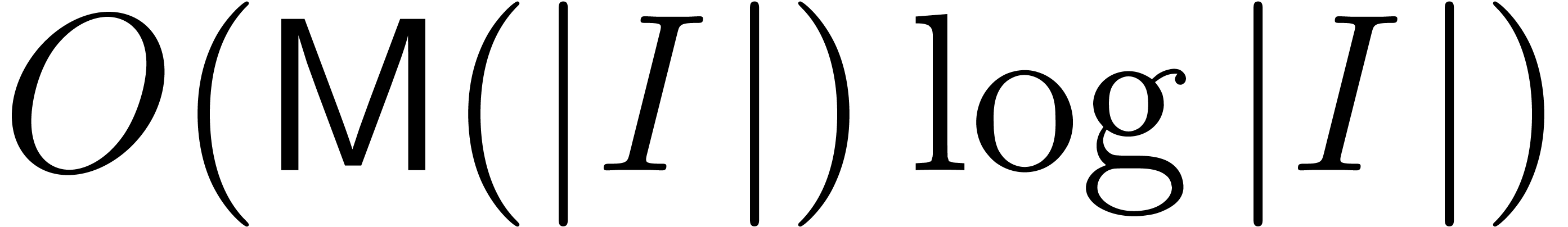

From the complexity point of view, evaluation and interpolation are

rather well understood for polynomials in one variable: algorithms of

quasi-linear complexity are known to evaluate a polynomial of degree

less than  at

at  points, and

conversely to interpolate it. The best known algorithms [BM74]

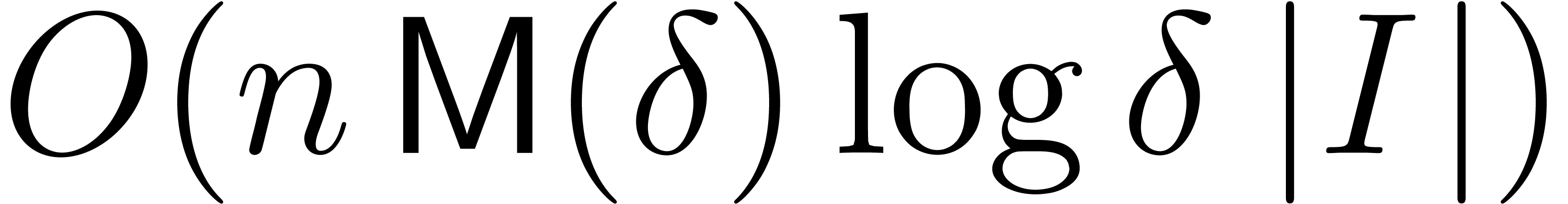

run in time

points, and

conversely to interpolate it. The best known algorithms [BM74]

run in time  , and the main

remaining question is to close the gap between this and an optimal

, and the main

remaining question is to close the gap between this and an optimal  , if at all possible.

, if at all possible.

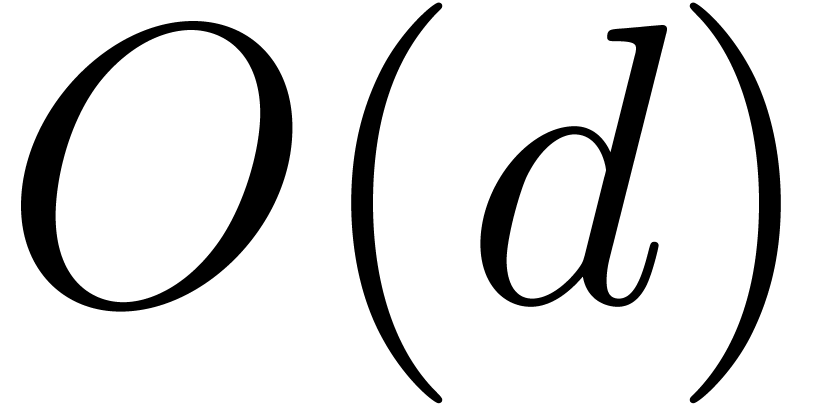

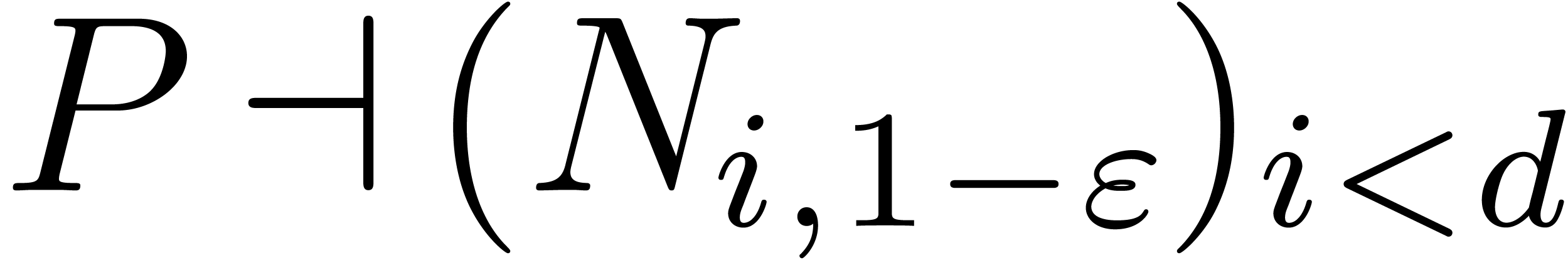

In several variables, the questions are substantially harder, due to the

variety of monomial bases and evaluation sets one may consider; no

quasi-linear time algorithm is known in general. In this paper,

following the terminology of [Sau04], we consider

evaluation points that are subgrids of tensor product grids. We

prove that for some suitable monomial bases, evaluation and

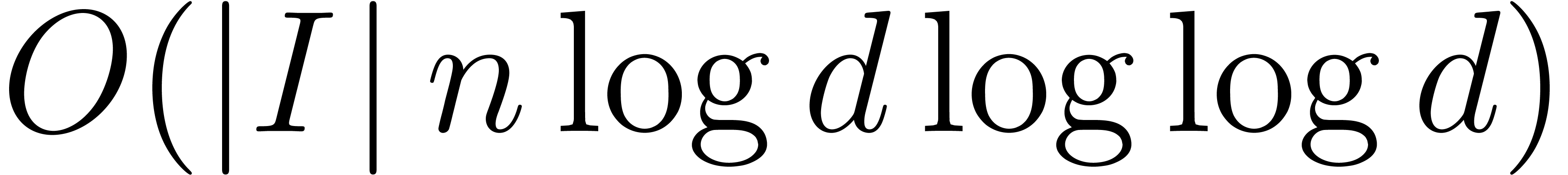

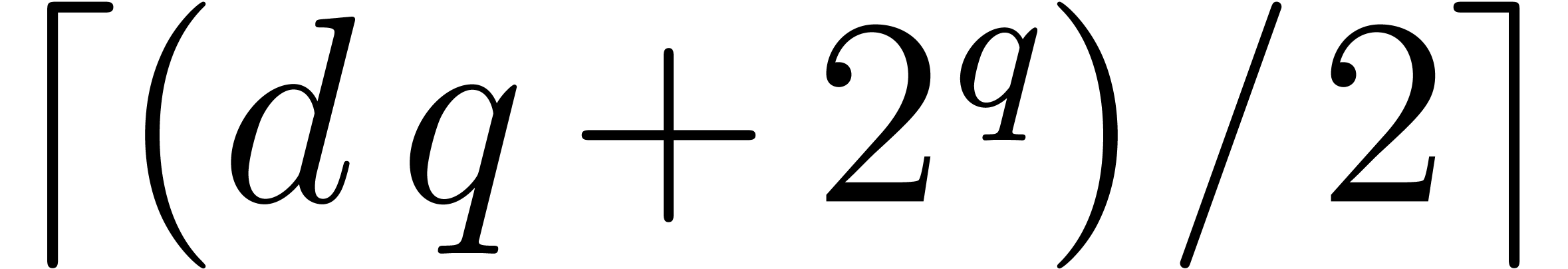

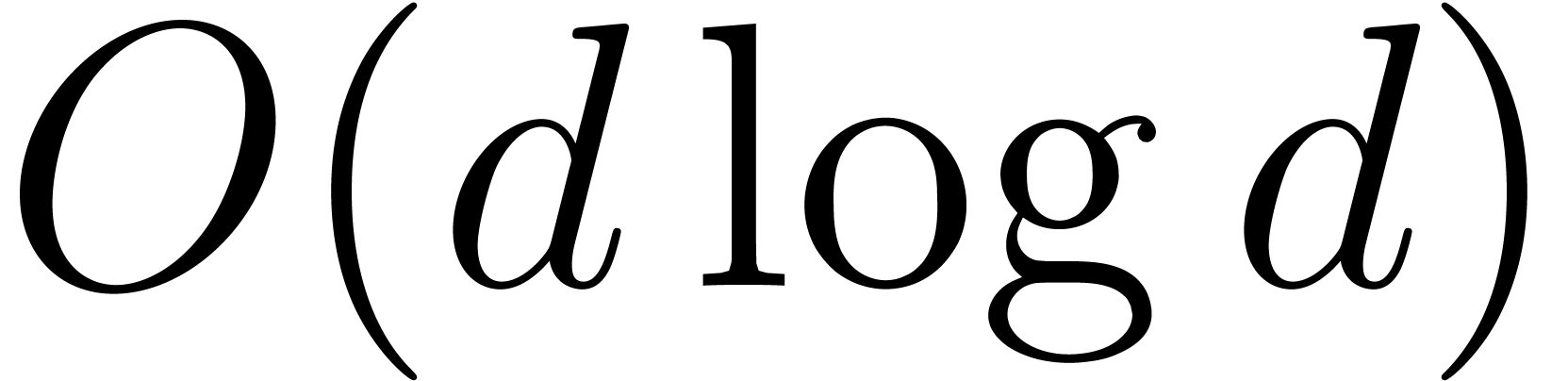

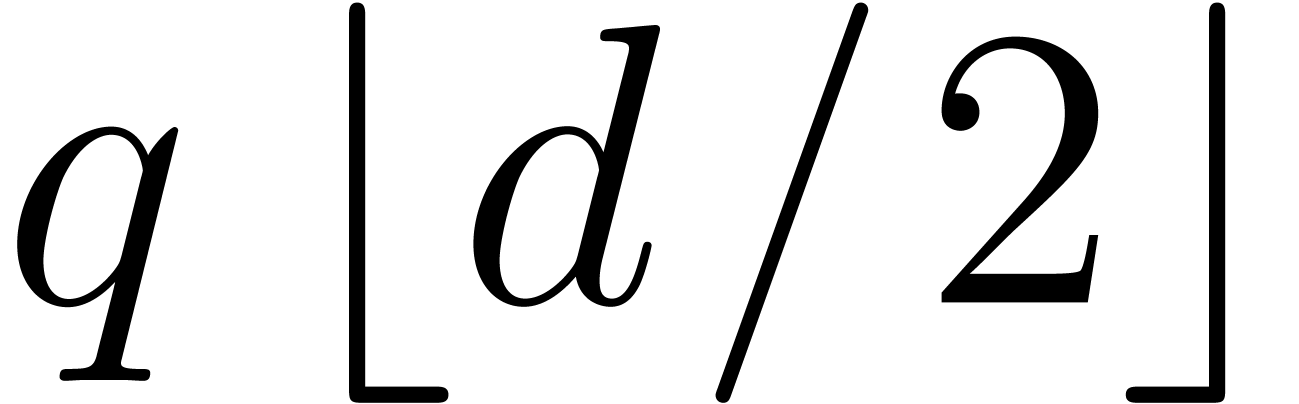

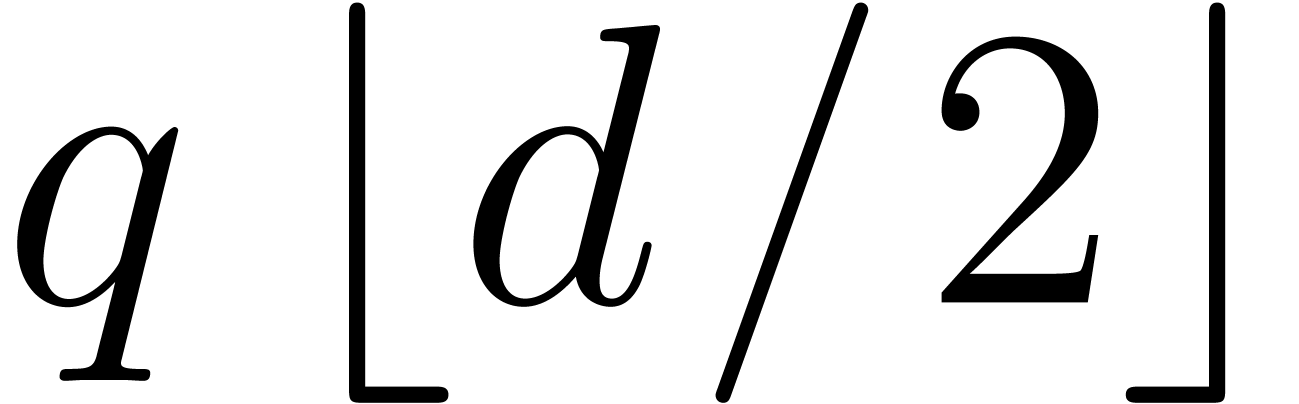

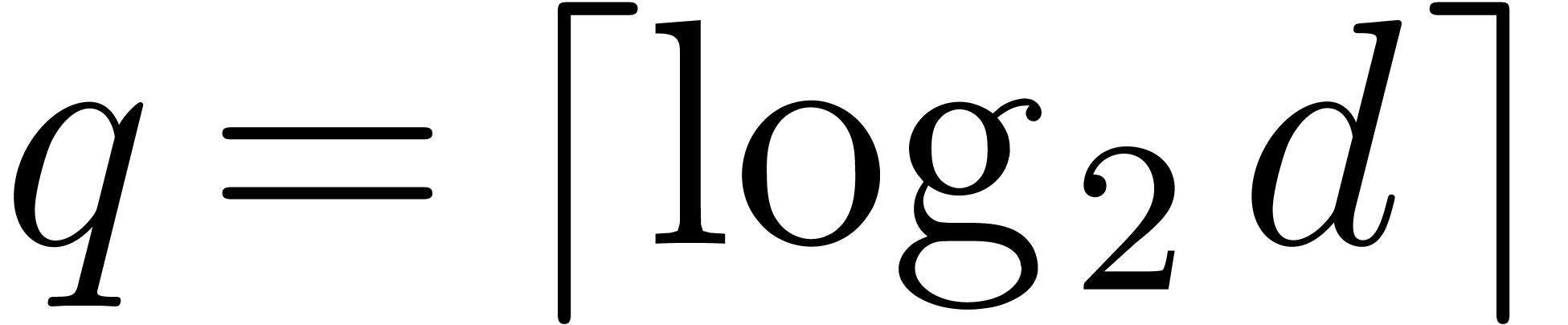

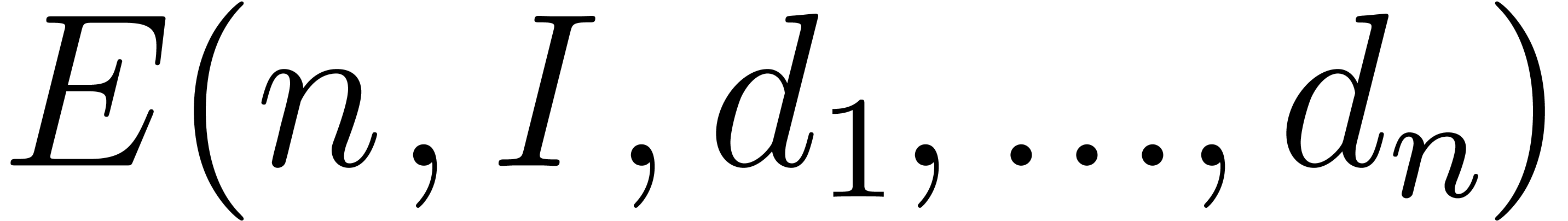

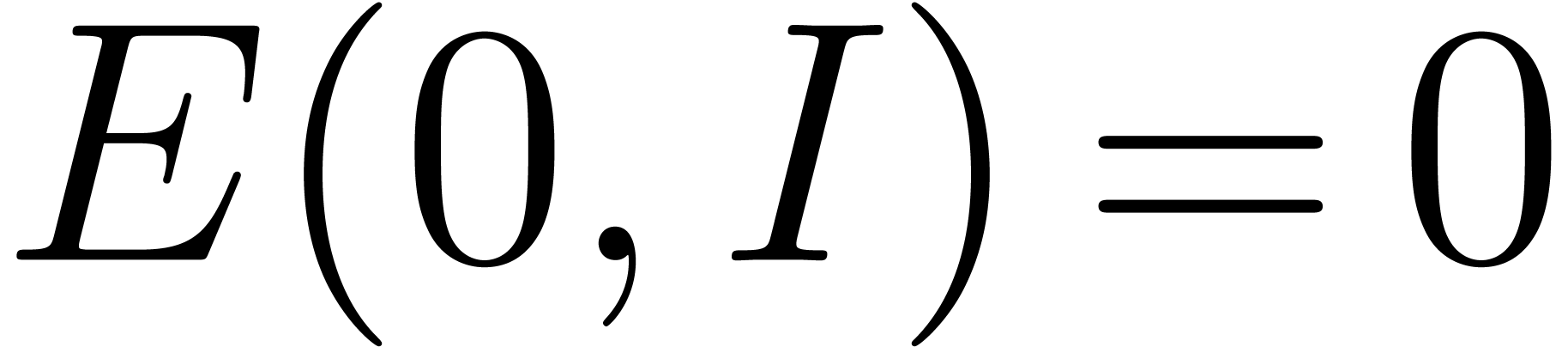

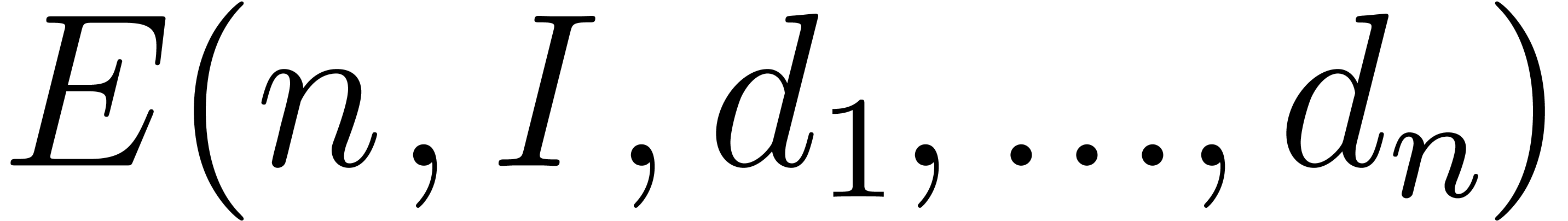

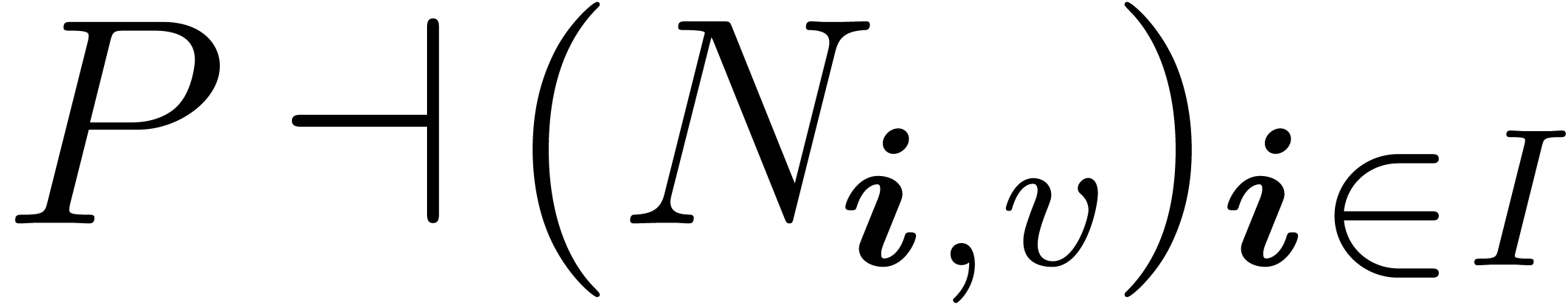

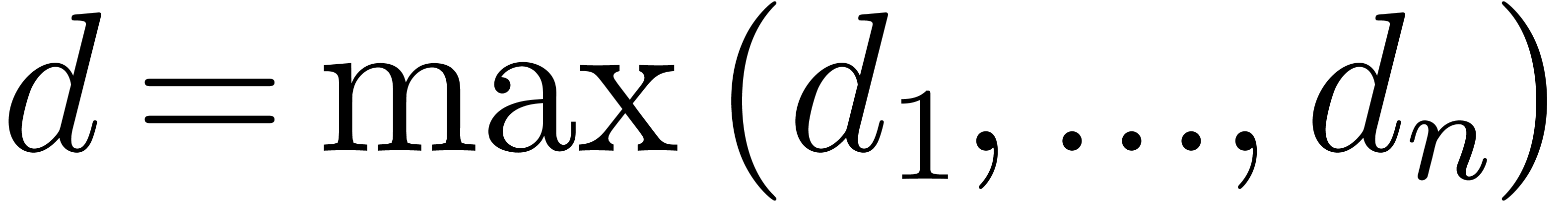

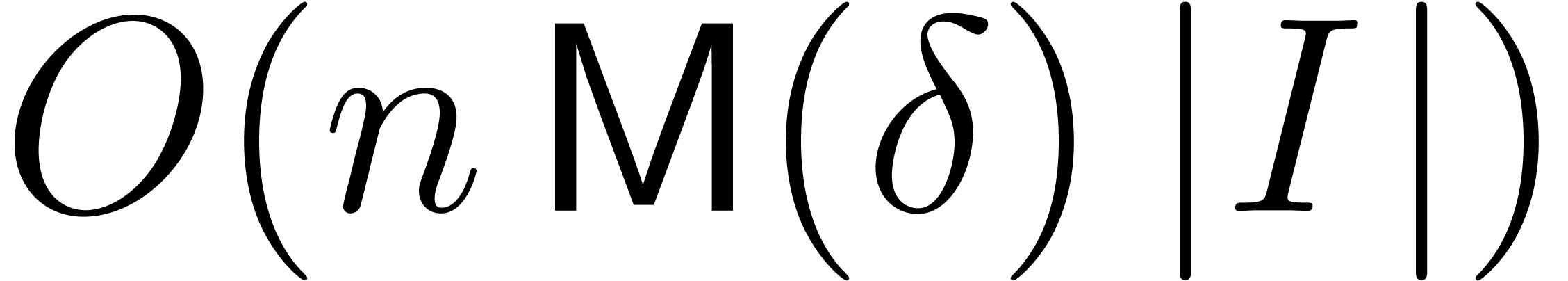

interpolation can both be done in time  ,

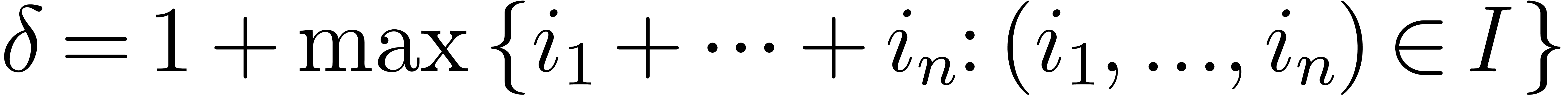

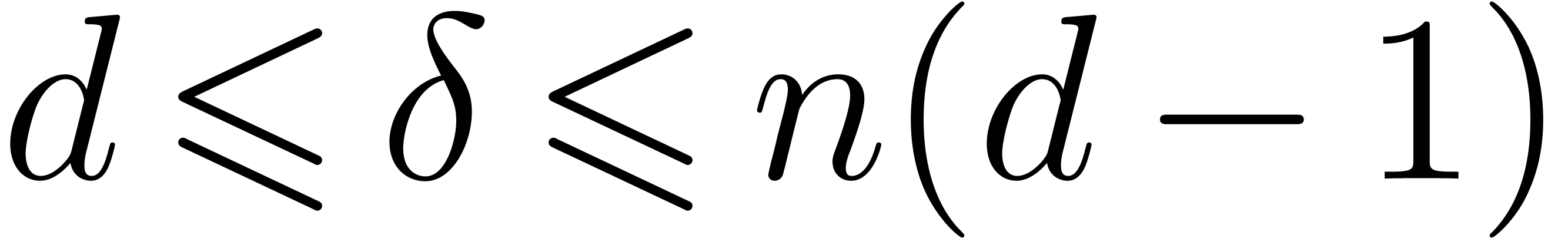

where

,

where  is the number of variables and

is the number of variables and  is the size of the evaluation set (and of the monomial

basis we consider). Remark that this result directly generalizes the

univariate case. In many cases,

is the size of the evaluation set (and of the monomial

basis we consider). Remark that this result directly generalizes the

univariate case. In many cases,  is logarithmic

in

is logarithmic

in  ; then, our result is

optimal, up to logarithmic factors.

; then, our result is

optimal, up to logarithmic factors.

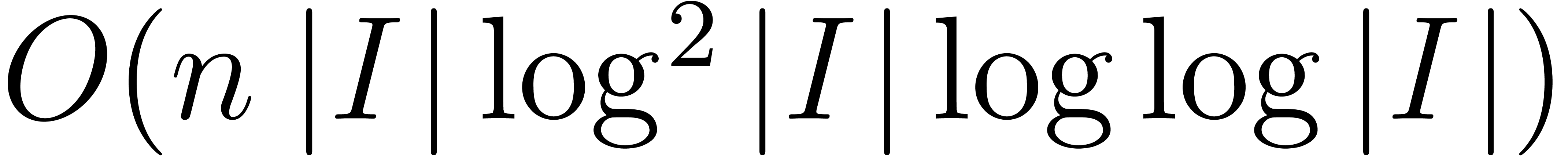

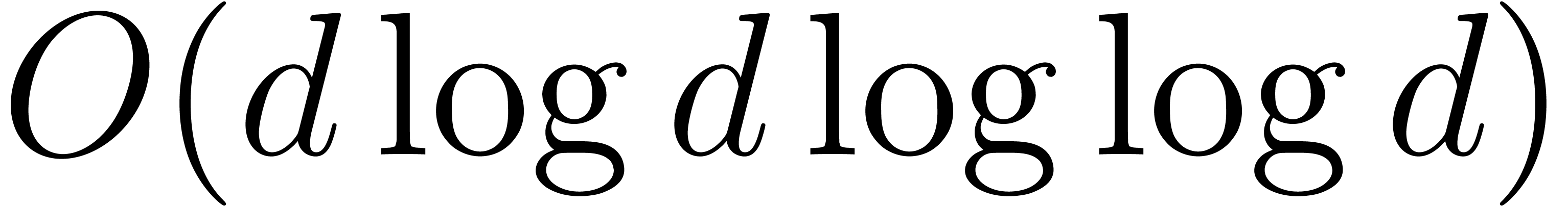

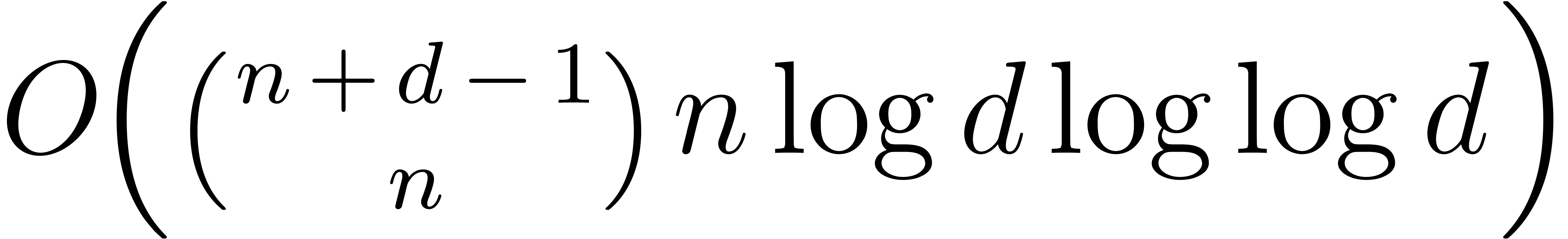

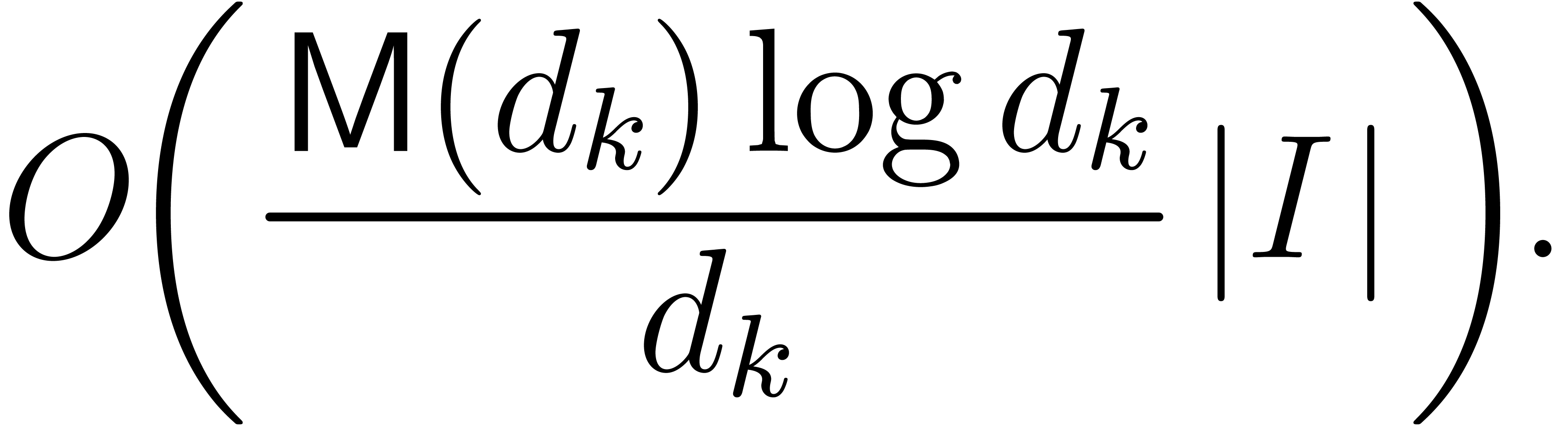

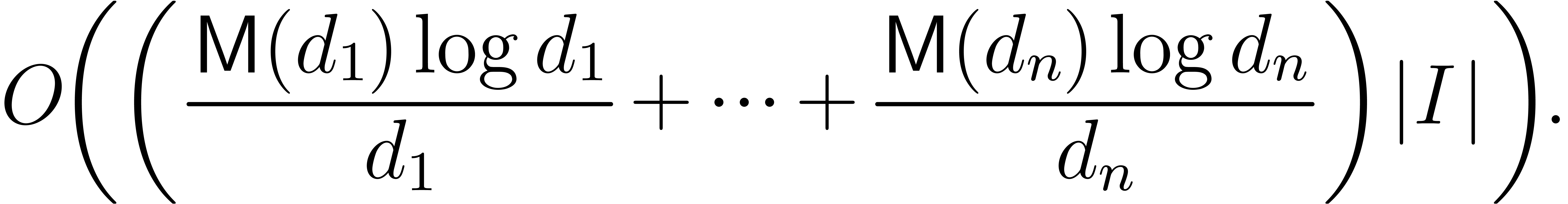

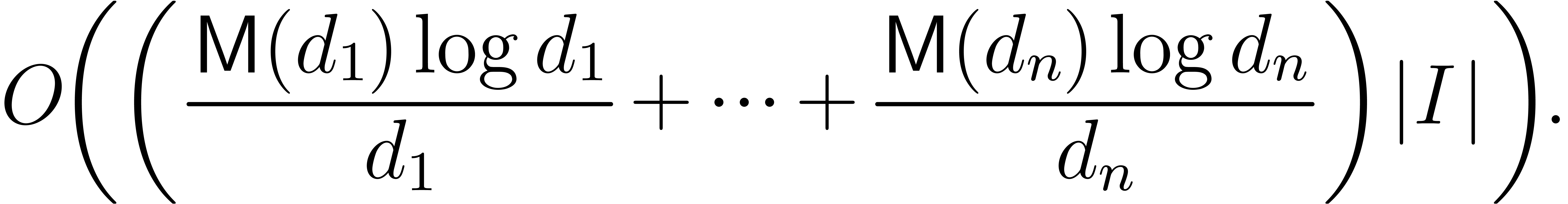

Moreover, for specific types of evaluation points, such as roots of

unity or points in a geometric progression, even faster algorithms can

be used in the univariate case, of time complexity  . These algorithms will also be generalized to

the multivariate case and result in evaluation and interpolation

algorithms of time complexity

. These algorithms will also be generalized to

the multivariate case and result in evaluation and interpolation

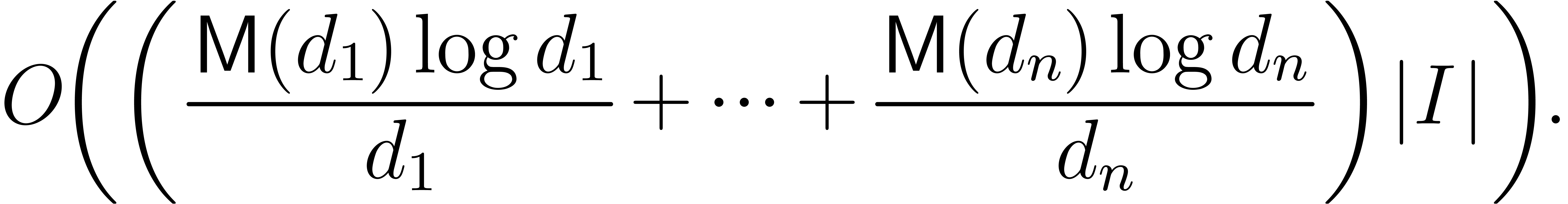

algorithms of time complexity  ,

where

,

where  is the maximal partial degree. In

particular, given two dense multivariate polynomials in

is the maximal partial degree. In

particular, given two dense multivariate polynomials in  variables of total degree

variables of total degree  can be multiplied in

time

can be multiplied in

time  . To the best of our

knowledge, this is the best currently available complexity bound for

this problem. We also expect the new algorithms to be efficient in

practice, although we have not implemented them yet.

. To the best of our

knowledge, this is the best currently available complexity bound for

this problem. We also expect the new algorithms to be efficient in

practice, although we have not implemented them yet.

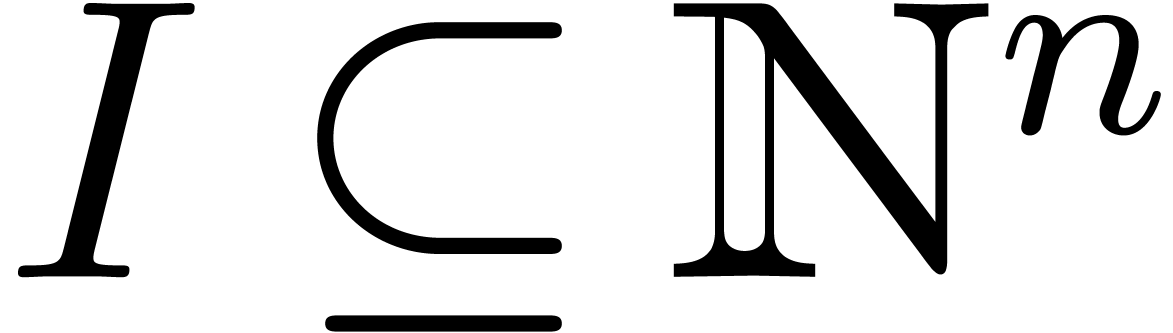

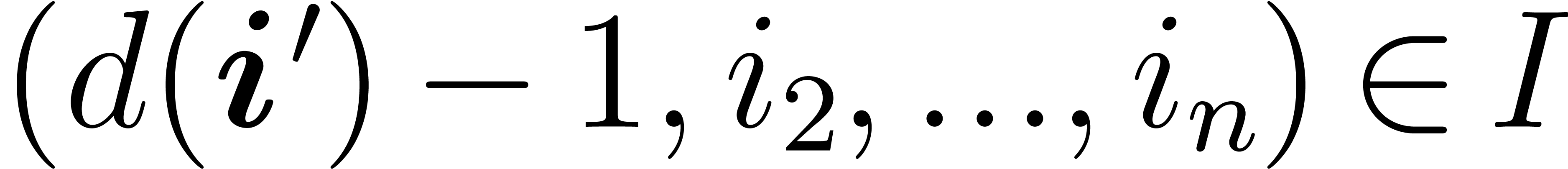

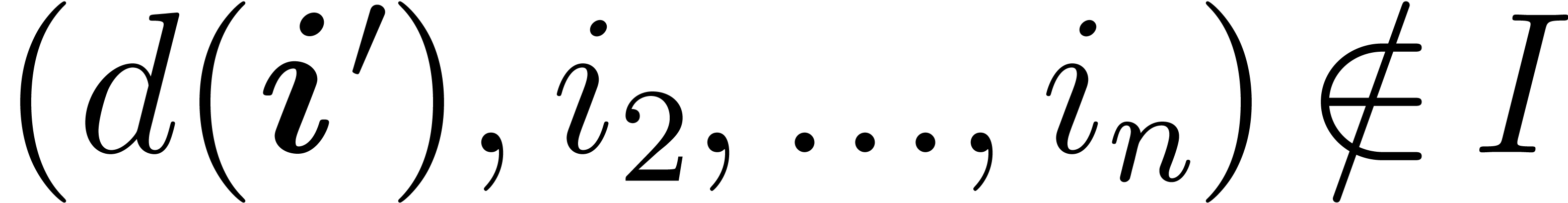

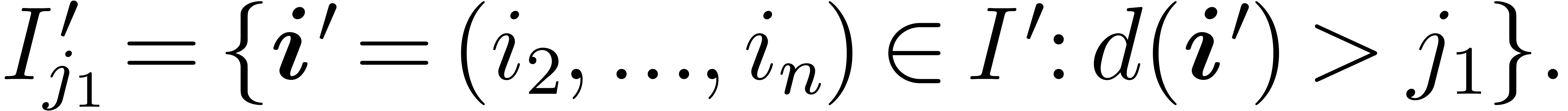

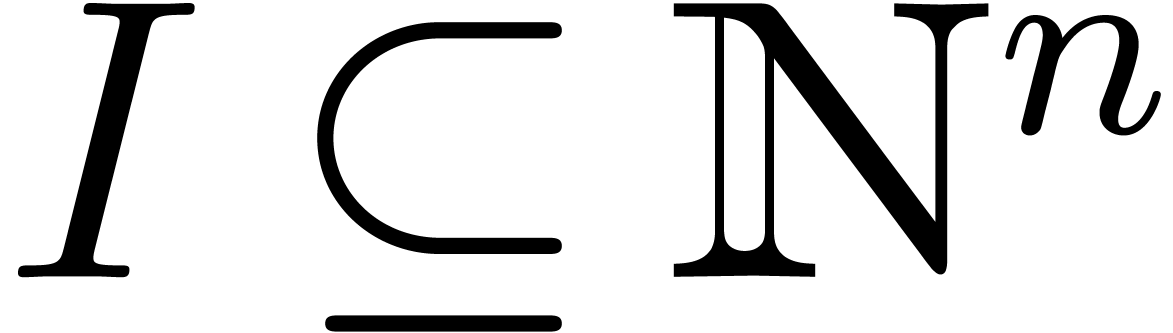

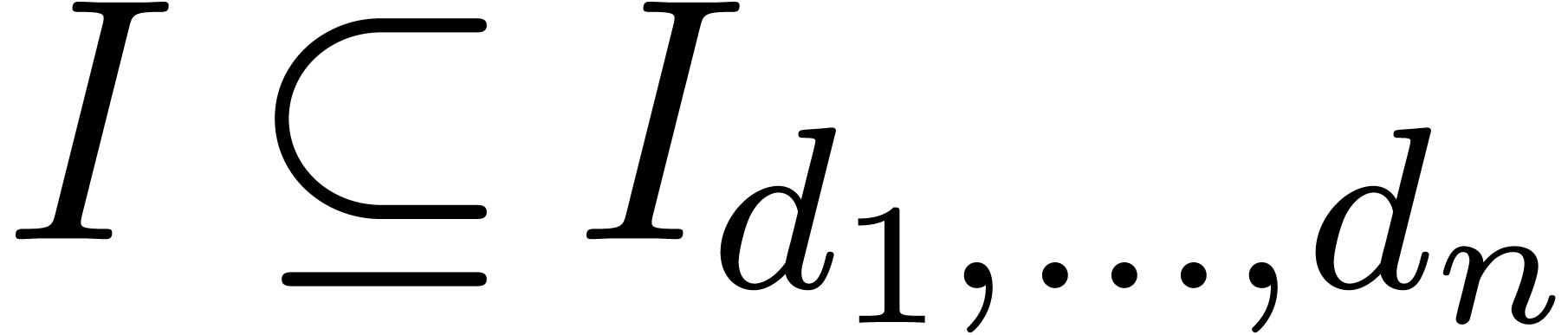

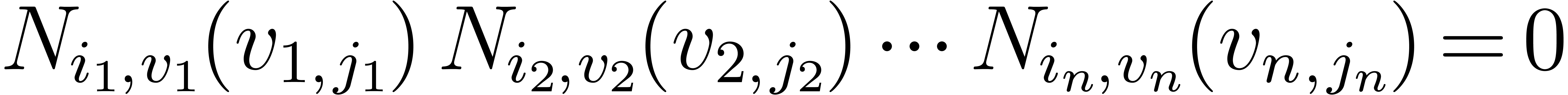

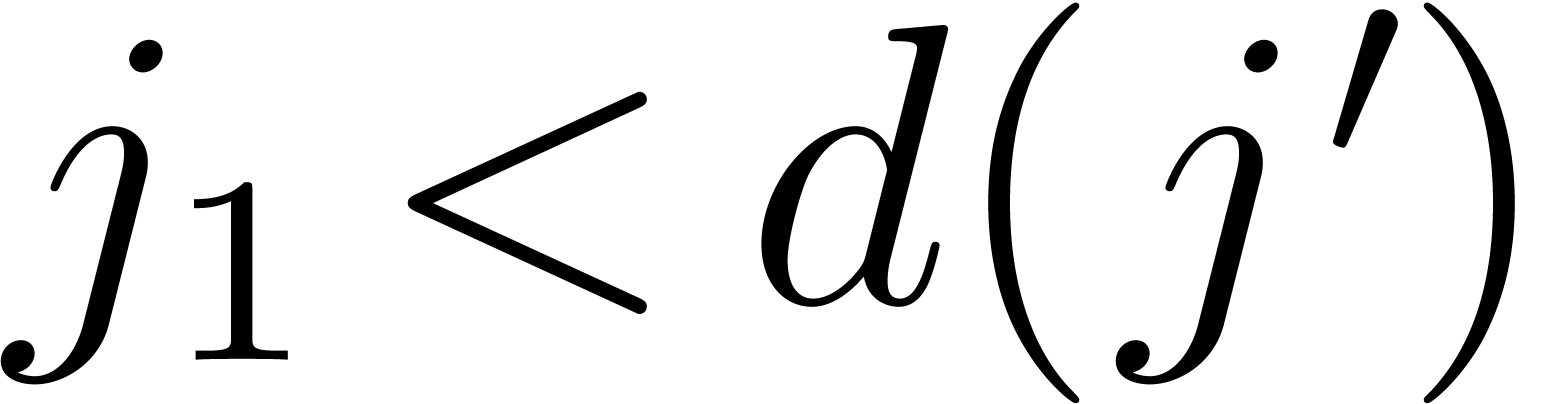

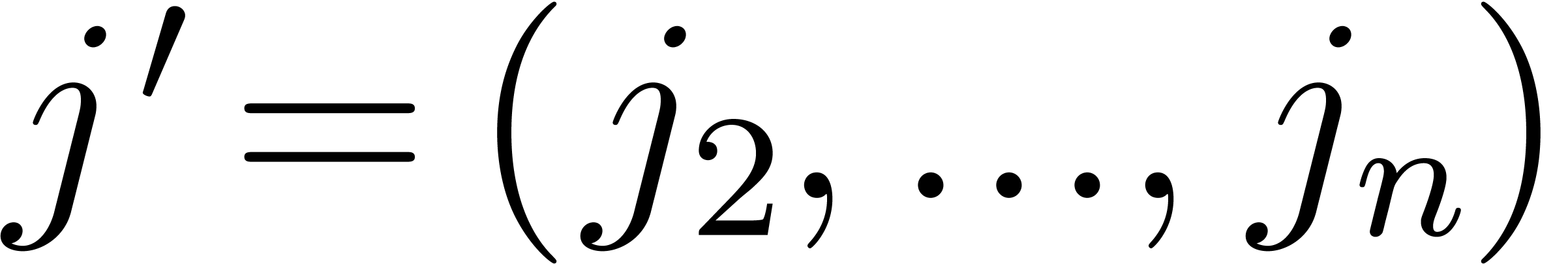

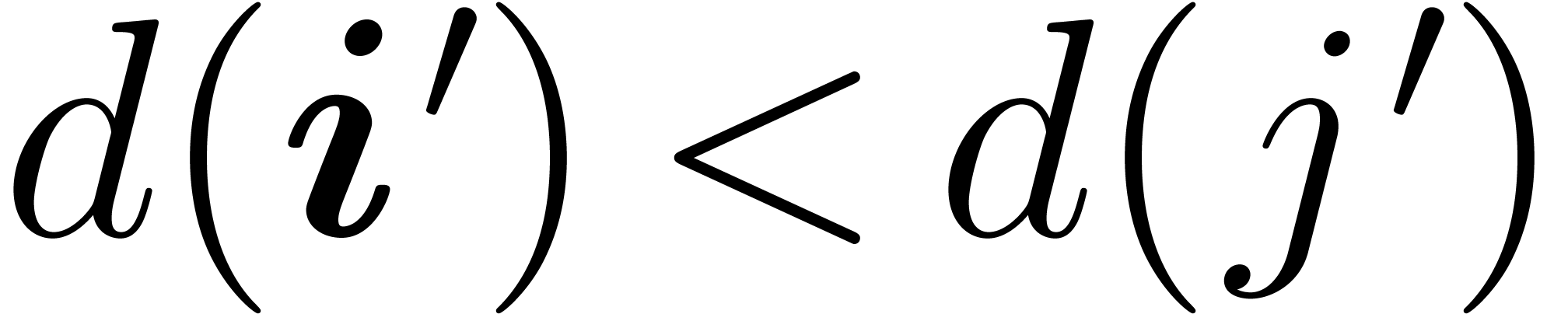

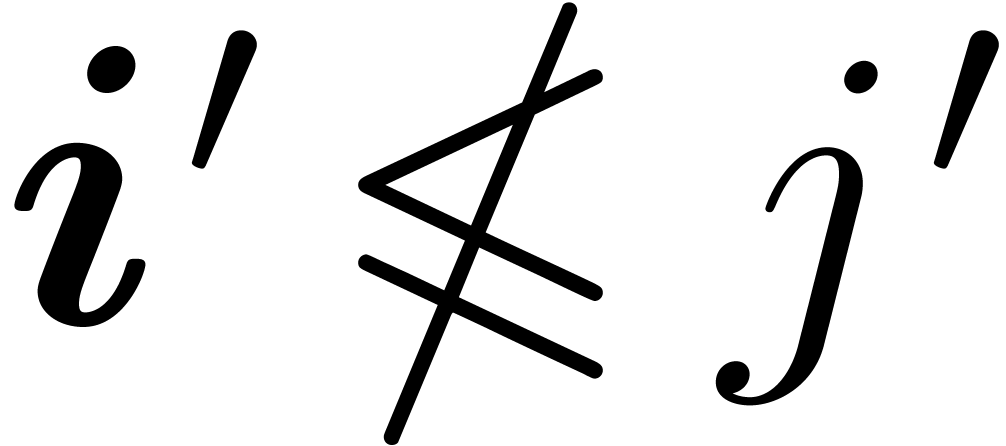

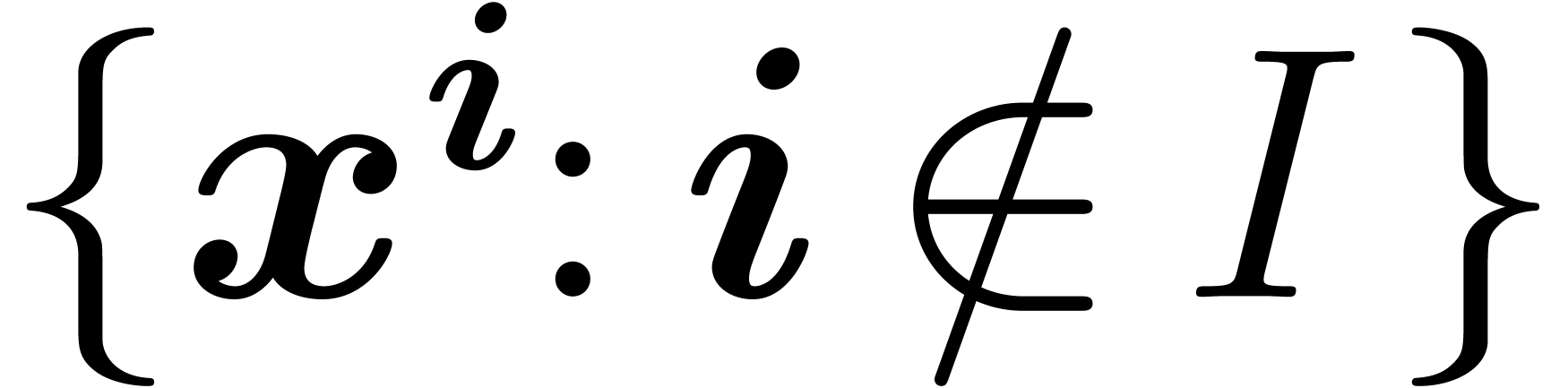

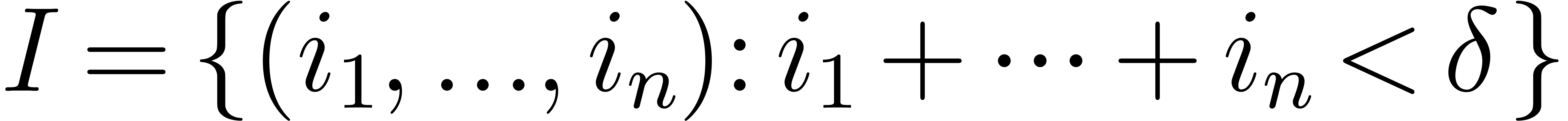

is a finite initial segment

for the partial ordering on

is a finite initial segment

for the partial ordering on  :

this means that if

:

this means that if  and

and  , then

, then  .

For instance, one may think of

.

For instance, one may think of  as the set of

standard monomials modulo a

as the set of

standard monomials modulo a  -dimensional

ideal, for a given monomial ordering. Figure 1 shows such

a set (black dots), as well as the minimal elements of

-dimensional

ideal, for a given monomial ordering. Figure 1 shows such

a set (black dots), as well as the minimal elements of  (green squares).

(green squares).

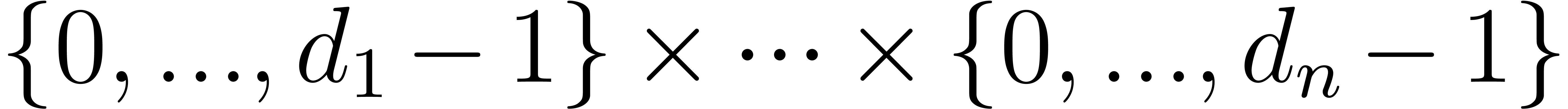

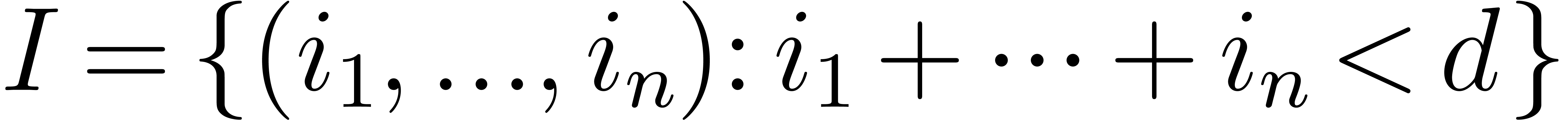

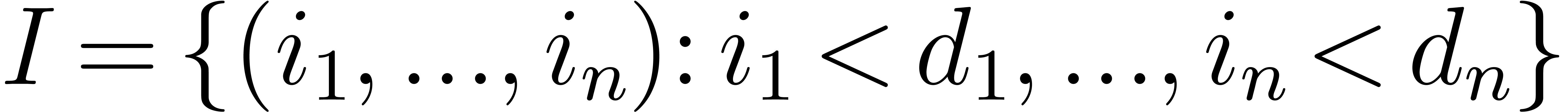

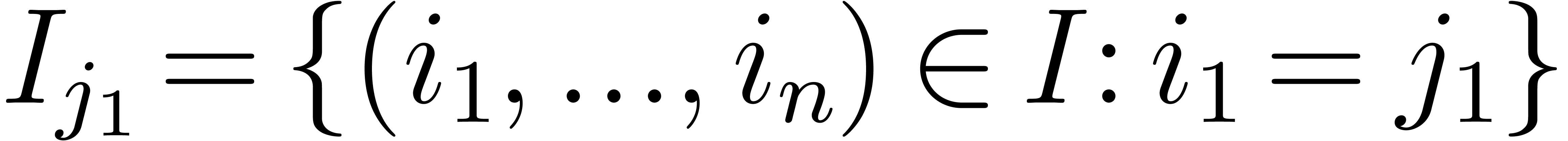

As a very particular example, for positive integers  , let

, let  denote the set

denote the set

; this is an

; this is an  -dimensional grid.

-dimensional grid.

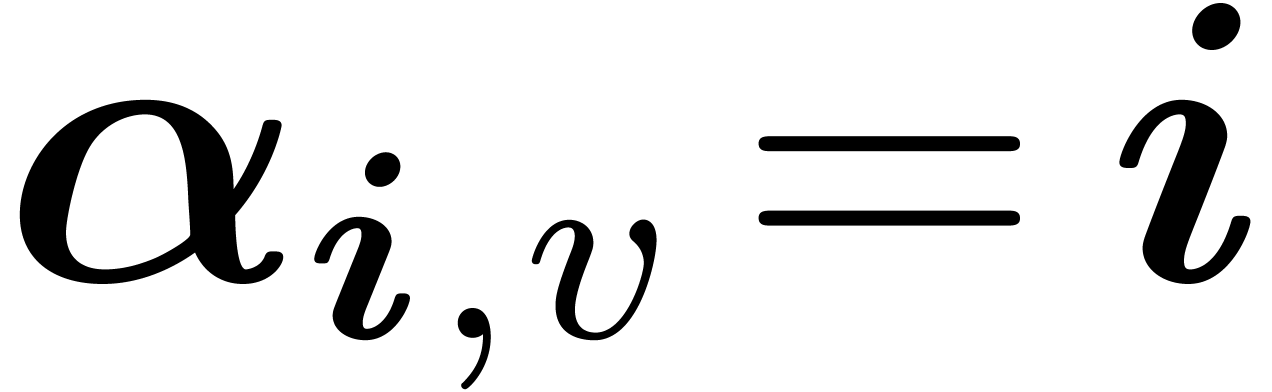

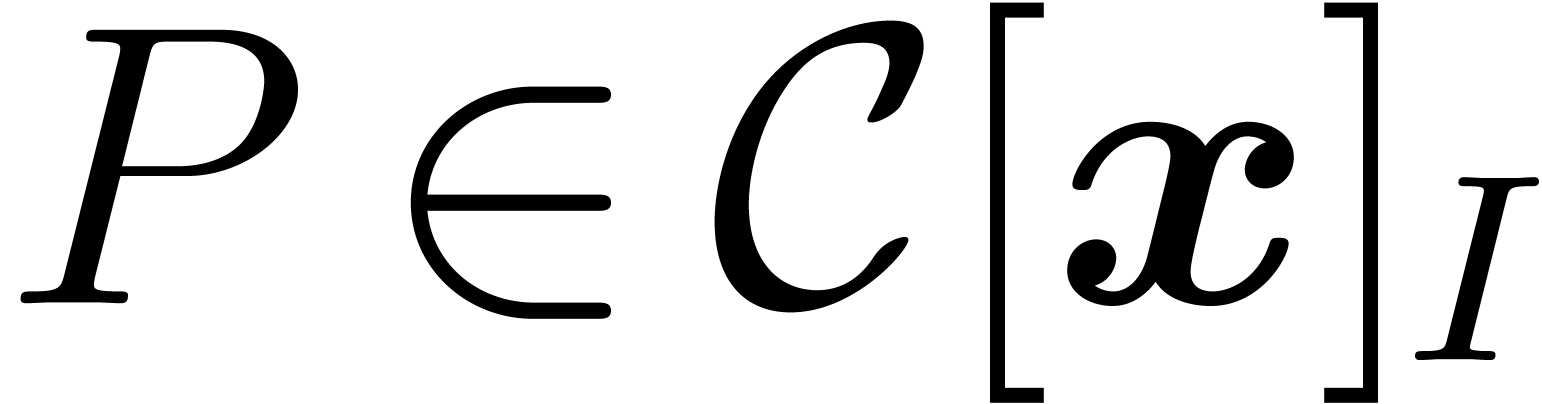

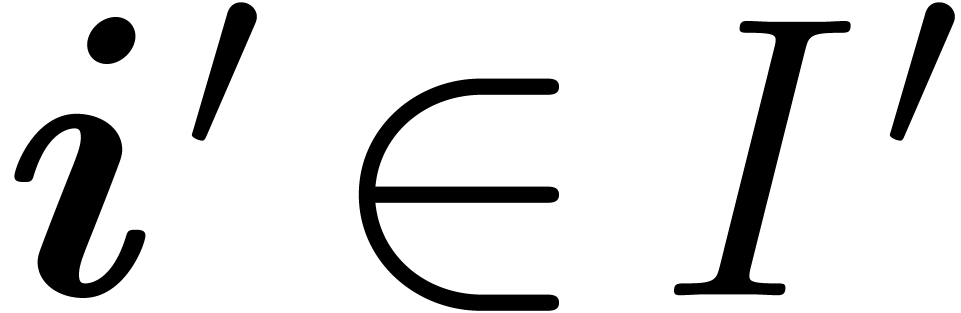

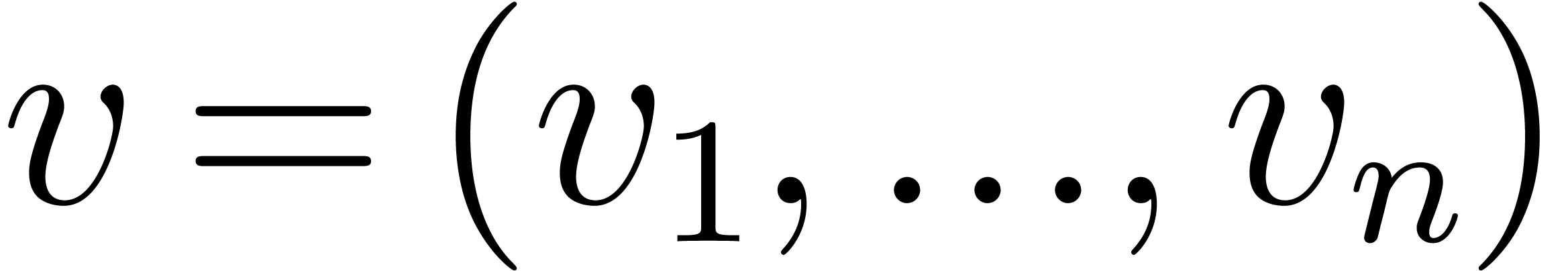

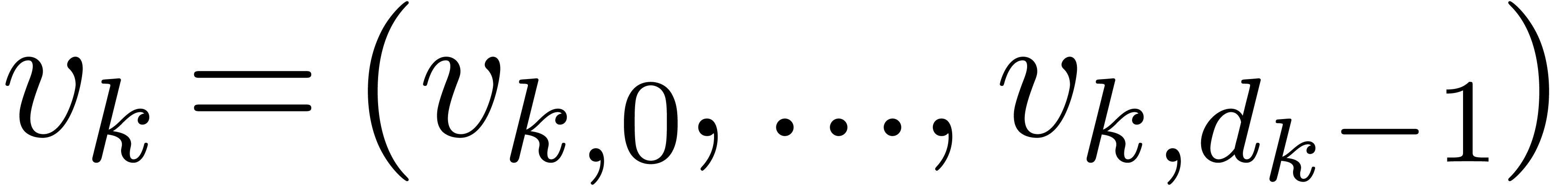

The set  will be used as an index set for both

the evaluation points and the monomial basis. Let

will be used as an index set for both

the evaluation points and the monomial basis. Let  be our base field and let

be our base field and let  be such that

be such that  . For

. For  ,

assume that we are given pairwise distinct elements

,

assume that we are given pairwise distinct elements  ; we will denote by

; we will denote by  the

collection

the

collection  . To

. To  we associate the point

we associate the point  and we let

and we let

: this will be our set of

evaluation points. Remark that

: this will be our set of

evaluation points. Remark that  is contained in

the “tensor product” grid

is contained in

the “tensor product” grid

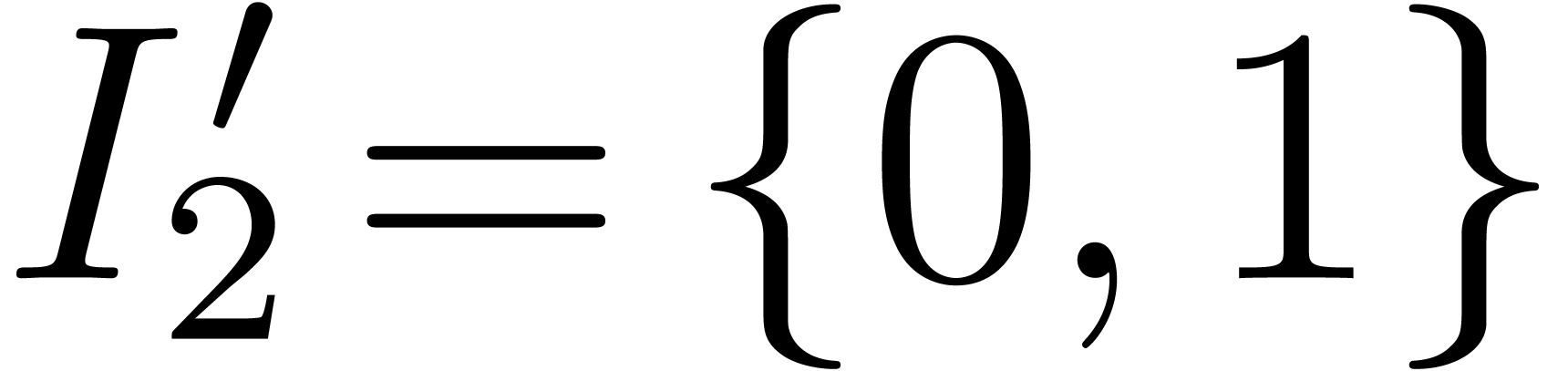

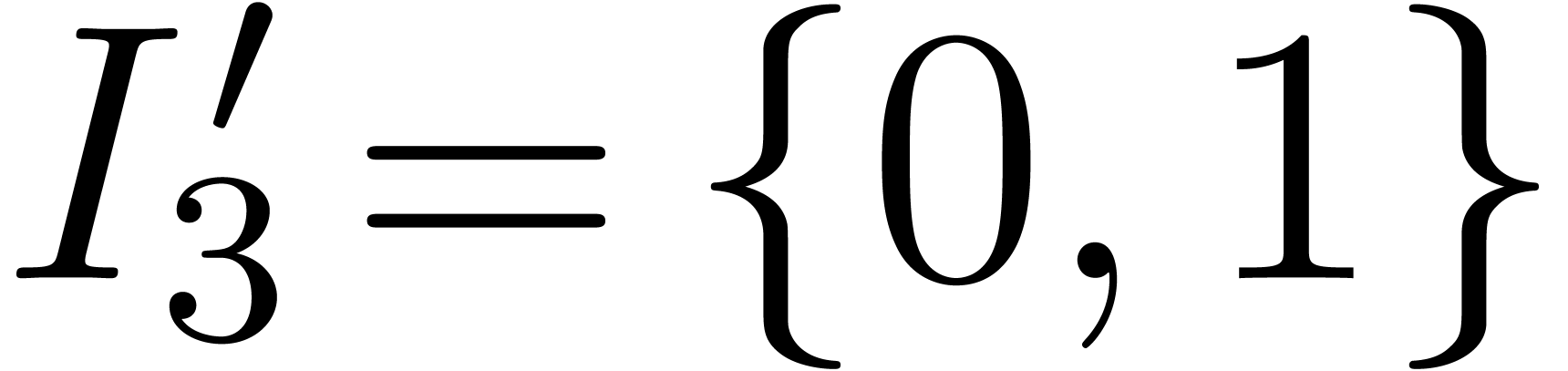

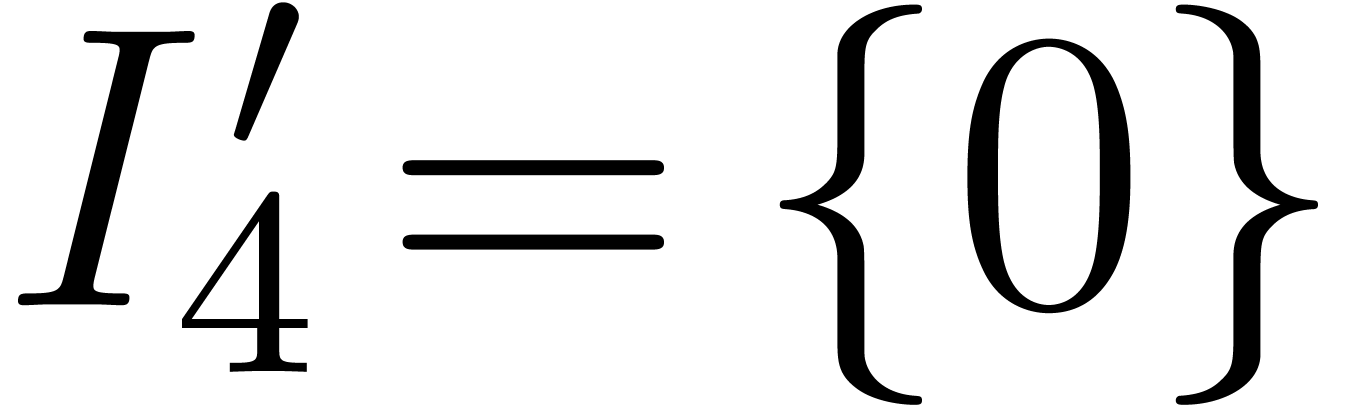

For instance, if  for all

for all  , then

, then  and

and  .

.

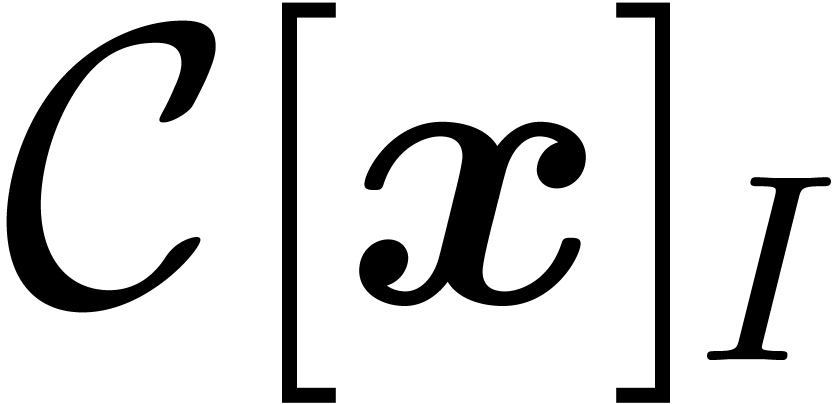

Let further  be the polynomial ring in

be the polynomial ring in  variables over

variables over  ;

for

;

for  , we write

, we write  . Then,

. Then,  denotes the

denotes the

-vector space of polynomials

-vector space of polynomials

with support in

with support in  .

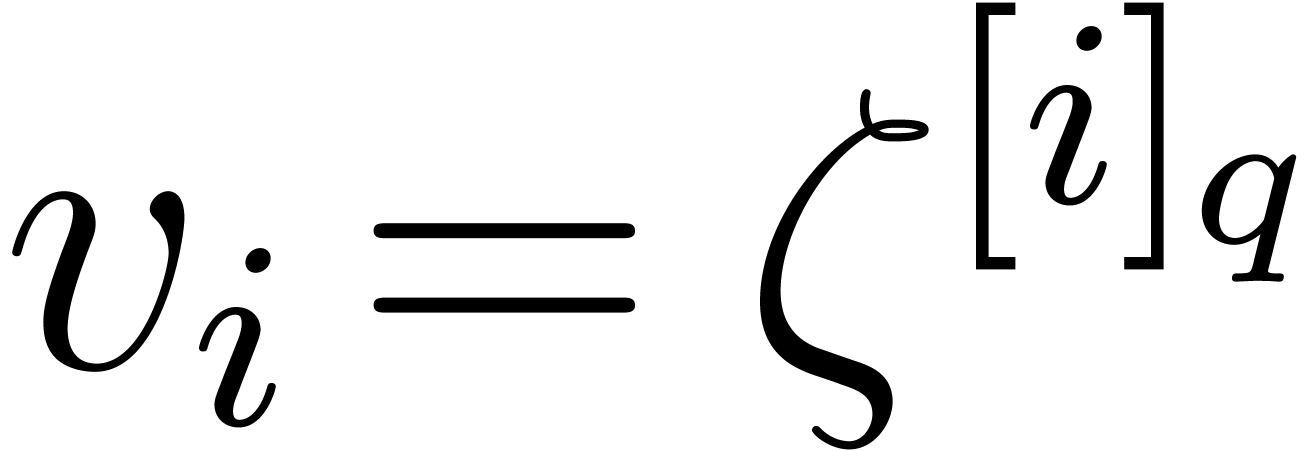

On the example of Figure 1,

.

On the example of Figure 1,  admits

the monomial basis

admits

the monomial basis

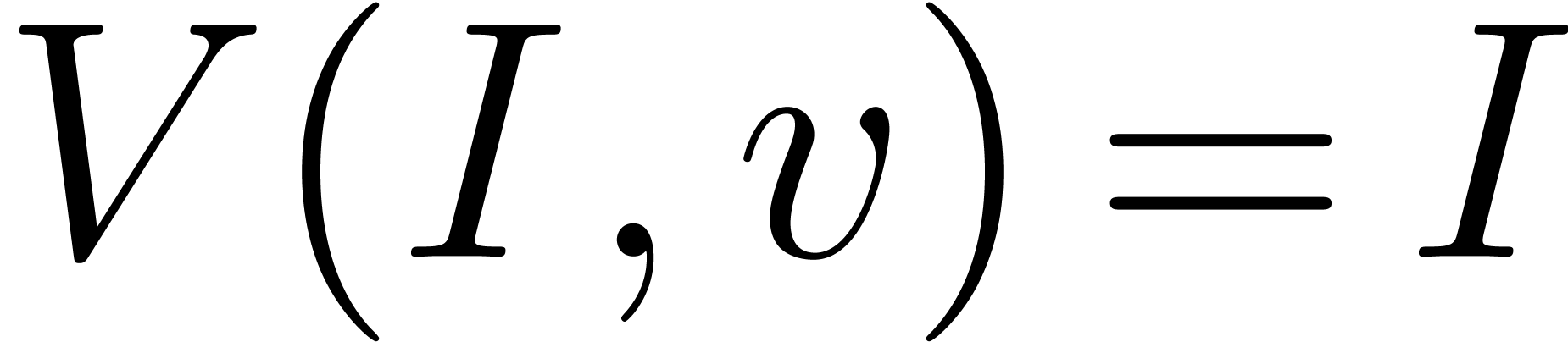

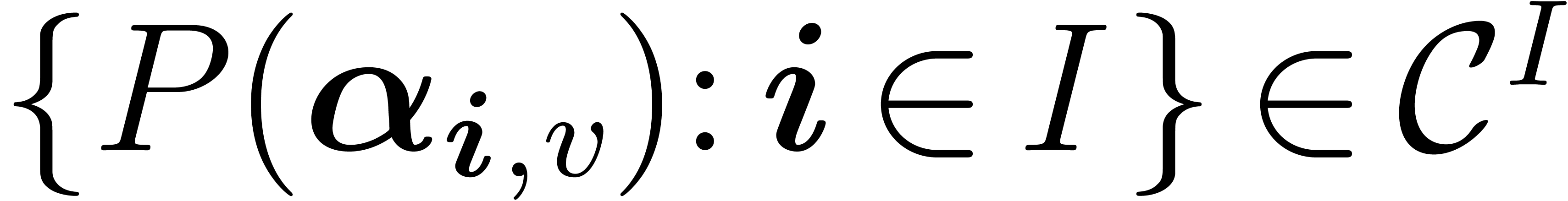

Given a polynomial  , written

on the monomial basis, our problem of multidimensional multi-point

evaluation is the computation of the vector

, written

on the monomial basis, our problem of multidimensional multi-point

evaluation is the computation of the vector  .

.

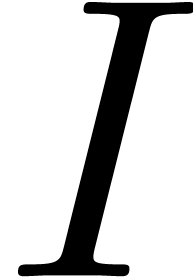

Both the domain  and the codomain

and the codomain  of the evaluation map are

of the evaluation map are  -vector

spaces of dimension

-vector

spaces of dimension  , so it

makes sense to ask whether this map is invertible. Indeed, let

, so it

makes sense to ask whether this map is invertible. Indeed, let  be the defining ideal of

be the defining ideal of  .

A result going back to Macaulay (see [Mor03] for a proof)

shows that the monomials

.

A result going back to Macaulay (see [Mor03] for a proof)

shows that the monomials  form a monomial basis

of

form a monomial basis

of  . As a consequence, the

former evaluation map is invertible; the inverse problem is an instance

of multivariate interpolation.

. As a consequence, the

former evaluation map is invertible; the inverse problem is an instance

of multivariate interpolation.

To obtain a quasi-linear result, we rely on the fast univariate

algorithms of [BM74]. In the special case where  is the grid

is the grid  ,

Pan [Pan94] solves the multivariate problem by applying a

“tensored” form of the univariate algorithms, evaluating or

interpolating one variable after the other. The key contribution of our

paper is the use of a multivariate Newton basis, combined with fast

change of basis algorithms between the Newton basis and the monomial

basis; this will allow us to follow an approach similar to Pan's in our

more general situation. The Newton basis was already used in many

previous works on our interpolation problem [Wer80, Müh88], accompanied by divided differences computations:

we avoid divided differences, as they lead to quadratic time algorithms.

,

Pan [Pan94] solves the multivariate problem by applying a

“tensored” form of the univariate algorithms, evaluating or

interpolating one variable after the other. The key contribution of our

paper is the use of a multivariate Newton basis, combined with fast

change of basis algorithms between the Newton basis and the monomial

basis; this will allow us to follow an approach similar to Pan's in our

more general situation. The Newton basis was already used in many

previous works on our interpolation problem [Wer80, Müh88], accompanied by divided differences computations:

we avoid divided differences, as they lead to quadratic time algorithms.

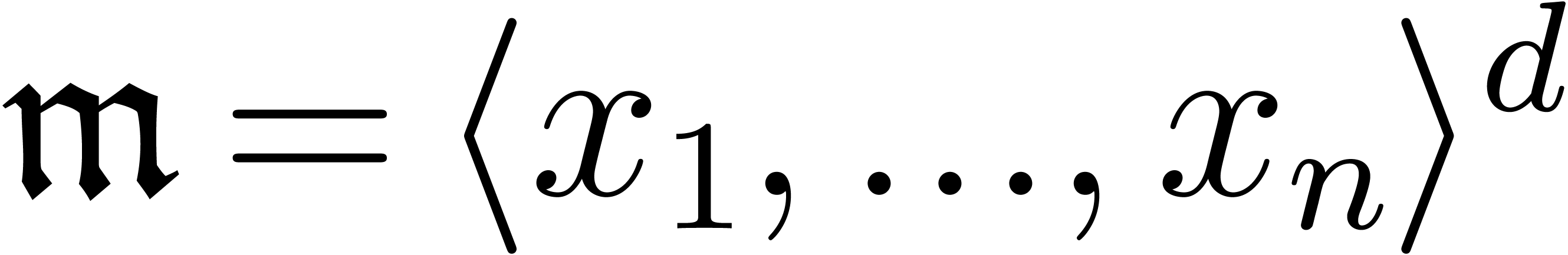

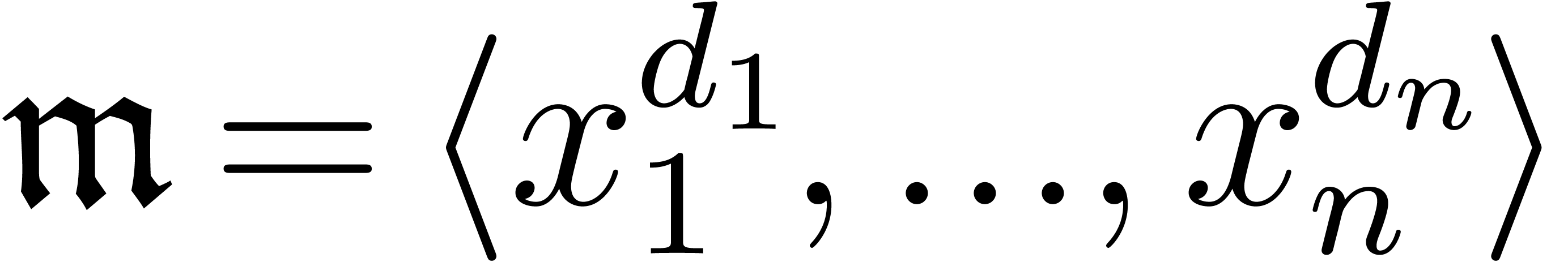

The results in this paper have a direct application to multivariate

power series multiplication. Let  be as above,

and let

be as above,

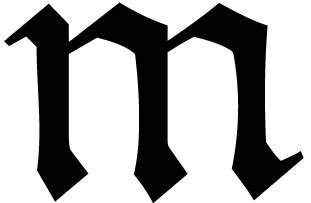

and let  be the monomial ideal generated by

be the monomial ideal generated by  ; equivalently,

; equivalently,  is generated by all minimal elements of

is generated by all minimal elements of  .

Then, one is interested the complexity of multiplication modulo

.

Then, one is interested the complexity of multiplication modulo  , that is, in

, that is, in  . Suitable choices of

. Suitable choices of  lead to total degree truncation (take

lead to total degree truncation (take  , so

, so  ),

which is used in many forms of Newton-Hensel lifting, or partial

degree truncation (take

),

which is used in many forms of Newton-Hensel lifting, or partial

degree truncation (take  ,

so

,

so  ).

).

There is no known algorithm with quasi-linear cost for this question in general. Inspired by the sparse multiplication algorithm of [CKL89], Lecerf and Schost gave such an algorithm for total degree truncation [LS03]. It was extended to weighted total degree in [vdH02] and further improved from the bit-complexity point of view in [vdHL09]. Further speed-ups are possible in small dimensions, when using the Truncated Fourier Transform or TFT [vdH04, vdH05]. For more general truncation patterns, Schost [Sch05] introduced an algorithm based on deformation techniques that uses evaluation and interpolation of the form described in this paper. At the time of writing [Sch05], no efficient algorithm was known for evaluation and interpolation; the present paper fills this gap and completes the results of [Sch05].

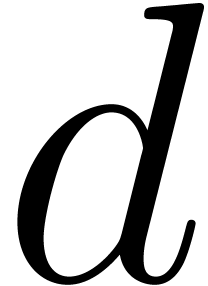

denote a

multiplication time function, in the sense that univariate polynomials

of degree less than

denote a

multiplication time function, in the sense that univariate polynomials

of degree less than  can be multiplied in

can be multiplied in  operations in

operations in  .

As in [GG03], we impose the condition that

.

As in [GG03], we impose the condition that  is an increasing function (and freely use all

consequences of this assumption), and we note that

is an increasing function (and freely use all

consequences of this assumption), and we note that  can be taken in

can be taken in  using the algorithm of [CK91].

using the algorithm of [CK91].

We will use big-Oh notation for expressions that depend on an unbounded

number of variables (e.g., for Lemma 6 below). In such

cases, the notation  means that there exists a

universal constant

means that there exists a

universal constant  such that for all

such that for all  and all

and all  , the

inequality

, the

inequality  holds.

holds.

This section describes some mostly classical algorithms for univariate

polynomials over  . We denote

by

. We denote

by  the set of univariate polynomials of degree

less than

the set of univariate polynomials of degree

less than  . Given pairwise

distinct points

. Given pairwise

distinct points  in

in  ,

we write

,

we write  for

for  .

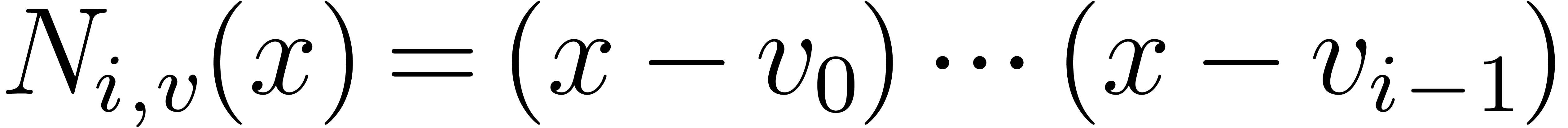

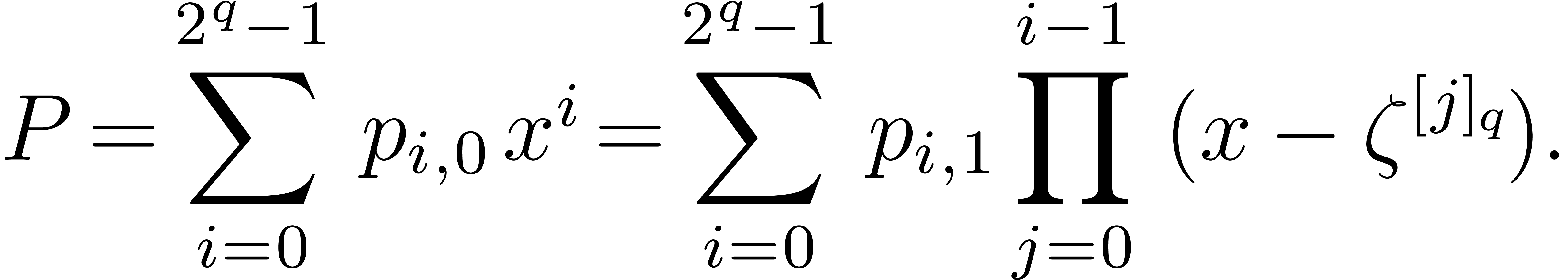

The polynomials

.

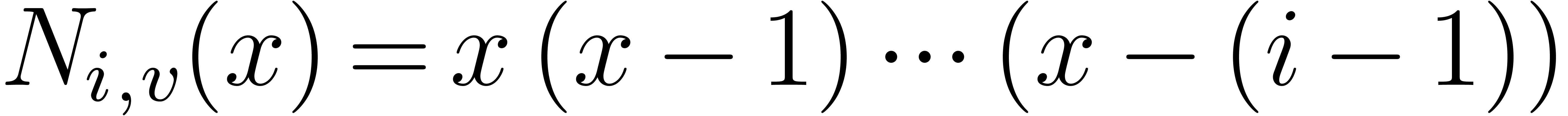

The polynomials  are called the Newton

basis associated to

are called the Newton

basis associated to  ;

they form a

;

they form a  -basis of

-basis of  . For instance, for

. For instance, for  , we have

, we have  .

.

Because we will have to switch frequently between the monomial and the

Newton bases, it will be convenient to use the notation  , for

, for  ,

with

,

with

We write  to indicate that a polynomial

to indicate that a polynomial  is written on the basis

is written on the basis  ;

remember that when no value

;

remember that when no value  is mentioned in

subscript, we are working in the Newton basis.

is mentioned in

subscript, we are working in the Newton basis.

The following classical lemma [BP94, Ex. 15 p. 67] gives complexity estimates for conversion between these bases.

Evaluation and interpolation in the monomial basis can be done in time

, by the algorithms of [BM74]; combining this to the previous lemma, we obtain a

similar estimate for evaluation and interpolation with respect to the

Newton basis.

, by the algorithms of [BM74]; combining this to the previous lemma, we obtain a

similar estimate for evaluation and interpolation with respect to the

Newton basis.

If the points  are in geometric progression, we

may remove a factor

are in geometric progression, we

may remove a factor  in all estimates. Indeed,

under these assumptions, the conversions of Lemma 1 and the

evaluation or interpolation of Lemma 2 take time

in all estimates. Indeed,

under these assumptions, the conversions of Lemma 1 and the

evaluation or interpolation of Lemma 2 take time  [BS05]. The following subsection studies in

detail another particular case, TFT (Truncated Fourier Transform)

points. In this case we may also remove the factor

[BS05]. The following subsection studies in

detail another particular case, TFT (Truncated Fourier Transform)

points. In this case we may also remove the factor  in all estimates, but the constant factor is even better than for points

in geometric progression.

in all estimates, but the constant factor is even better than for points

in geometric progression.

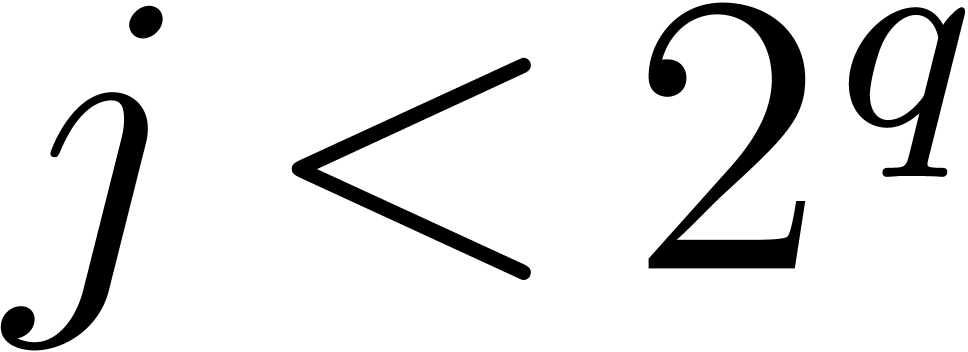

In this subsection, we are going to assume that  contains suitable roots of unity, and prove refined complexity bounds

for such points.

contains suitable roots of unity, and prove refined complexity bounds

for such points.

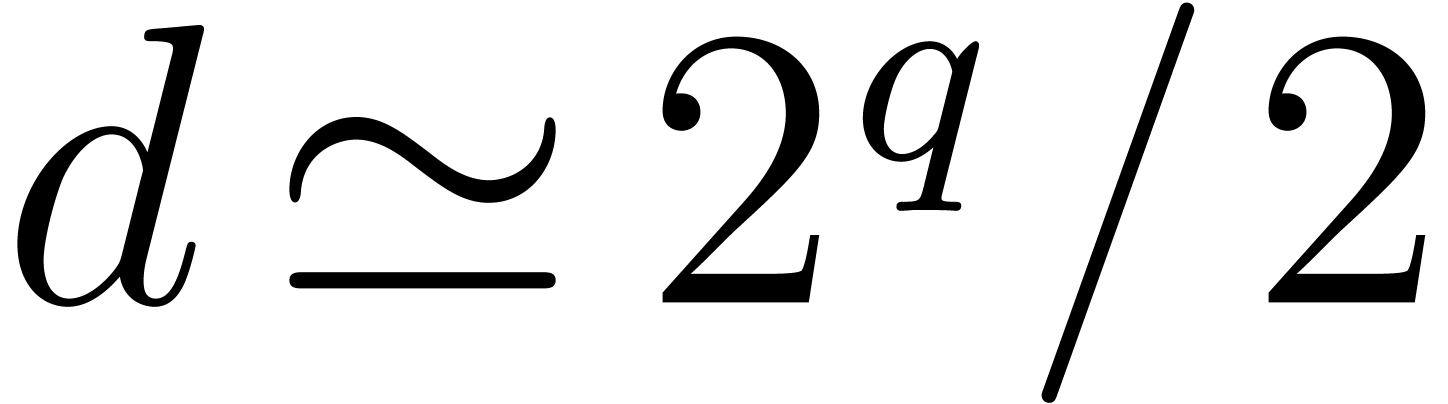

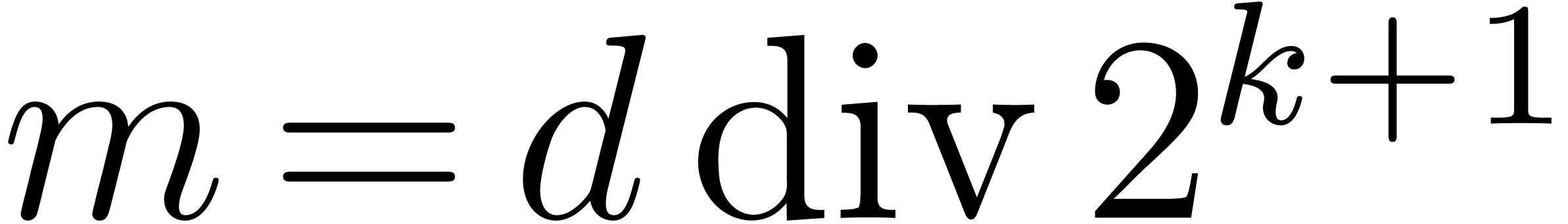

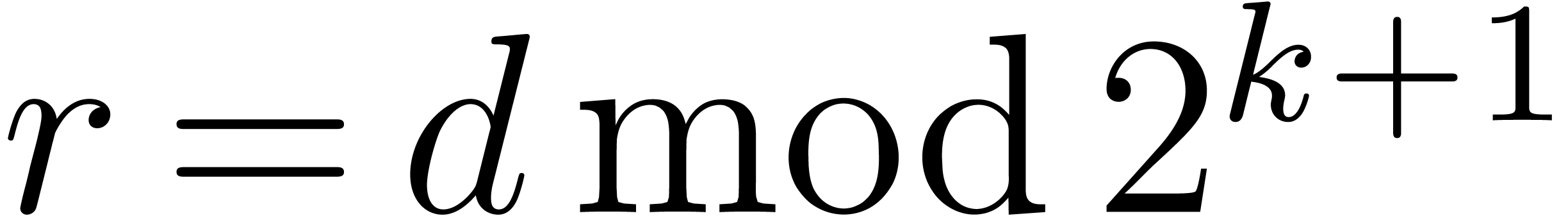

Let  be as above, let

be as above, let  be

the smallest integer such that

be

the smallest integer such that  and let us

suppose that

and let us

suppose that  contains a primitive

contains a primitive  -th root of unity

-th root of unity  .

The TFT points are

.

The TFT points are  , for

, for

, where

, where  is the binary

is the binary  -bits mirror of

-bits mirror of

. In other words, they form

an initial segment of length

. In other words, they form

an initial segment of length  for the sequence of

for the sequence of

-th roots of unity written in

the bit-reverse order; when for instance

-th roots of unity written in

the bit-reverse order; when for instance  and

and

, these points are

, these points are  . In this subsection, these points

are fixed, so we drop the subscript

. In this subsection, these points

are fixed, so we drop the subscript  in our

notation.

in our

notation.

It is known [vdH04, vdH05] that in the

monomial basis, evaluation and interpolation at the TFT points can be

done in time  . Precisely,

both operations can be done using

. Precisely,

both operations can be done using  shifted

additions and subtractions and

shifted

additions and subtractions and  multiplications

by powers of

multiplications

by powers of  . Here we recall

that a shifted addition (resp. subtraction) is a classical addition

(resp. subtraction) where any of the inputs may be premultiplied by

. Here we recall

that a shifted addition (resp. subtraction) is a classical addition

(resp. subtraction) where any of the inputs may be premultiplied by  or

or  (e.g.,

(e.g.,  ). In the most interesting case

). In the most interesting case  , the TFT roughly saves a factor of 2 over the

classical FFT; this makes it a very useful tool for e.g. polynomial

multiplication.

, the TFT roughly saves a factor of 2 over the

classical FFT; this makes it a very useful tool for e.g. polynomial

multiplication.

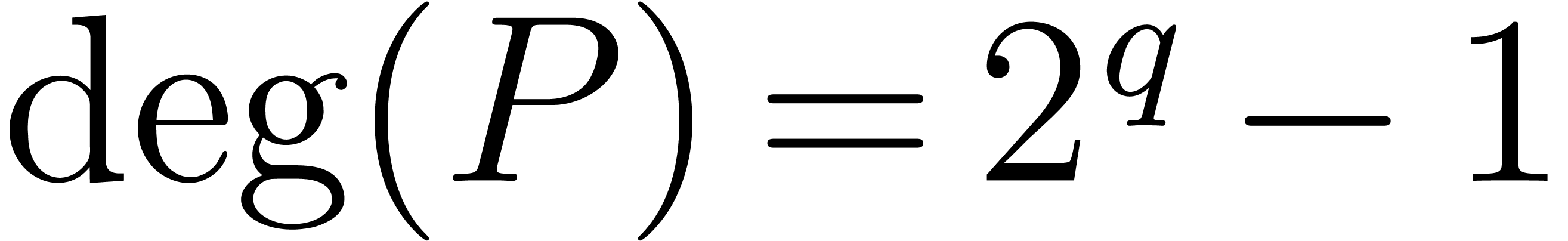

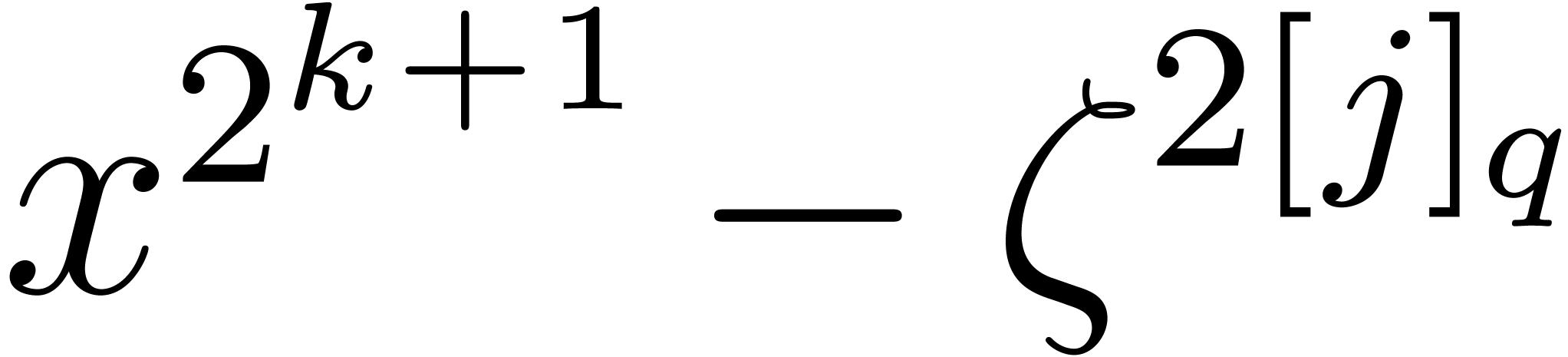

We will show here that similar results hold for the conversion between

the monomial and Newton bases. First, we give the basis of the algorithm

by assuming that  , so that

, so that

. Such a polynomial can be

written in the monomial, resp. Newton basis, as

. Such a polynomial can be

written in the monomial, resp. Newton basis, as

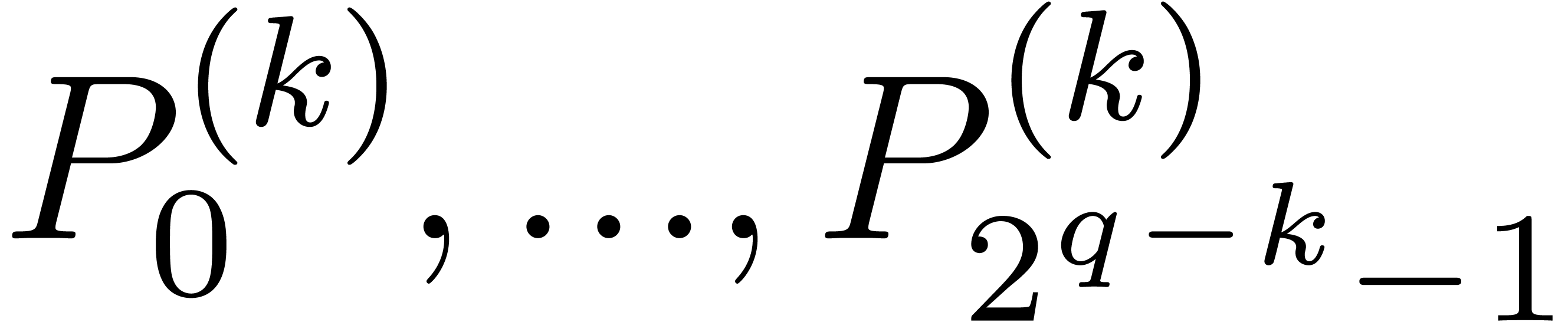

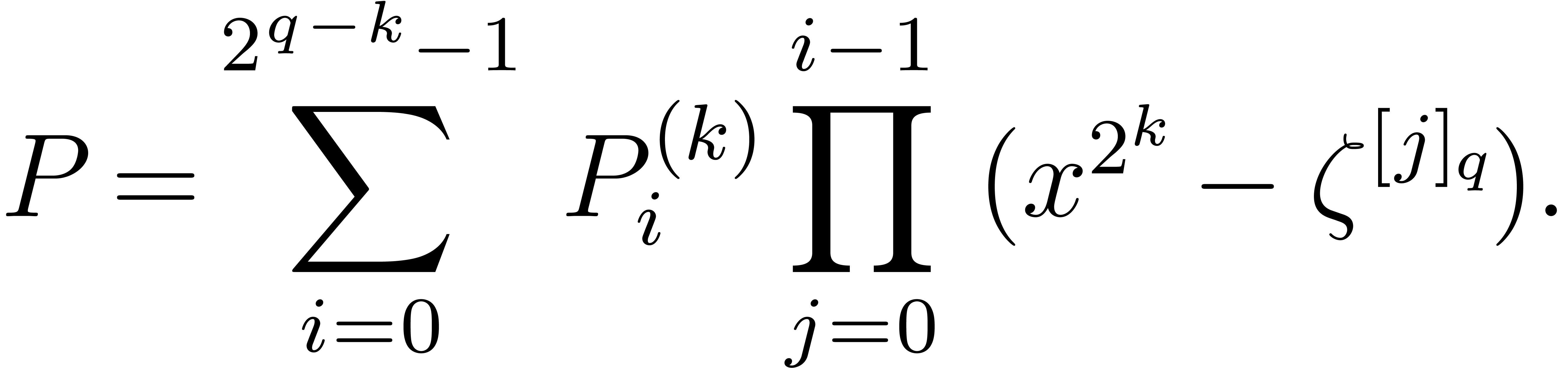

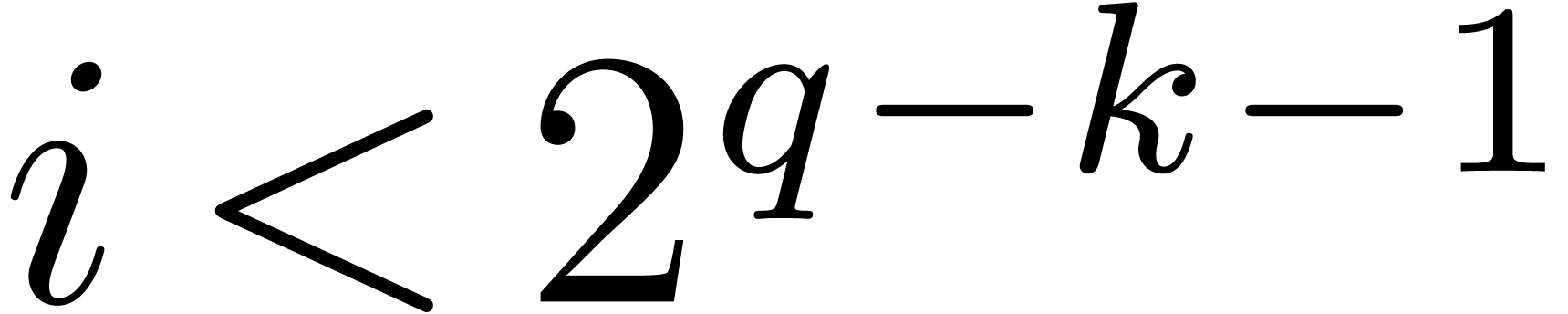

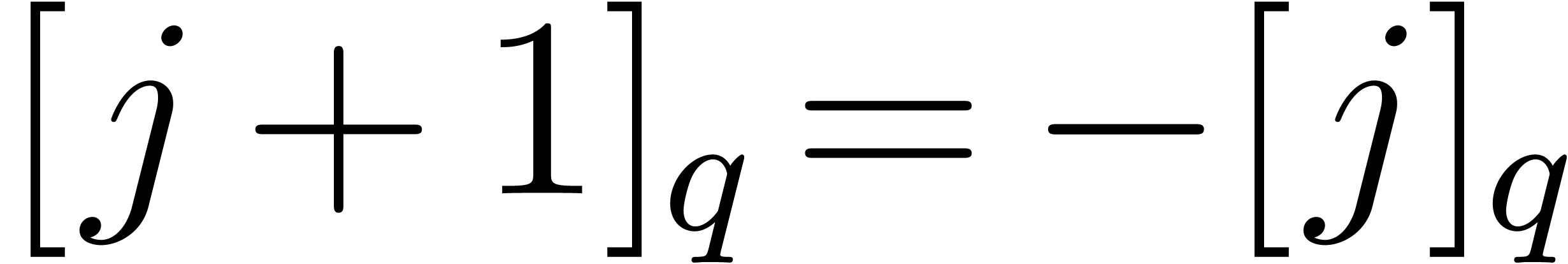

For  , let us introduce the

polynomials

, let us introduce the

polynomials  , all of degree

less than

, all of degree

less than  , such that

, such that

|

(1) |

Thus,  for

for  and

and  , whereas

, whereas  for

for  .

.

and

and  ,

we have

,

we have

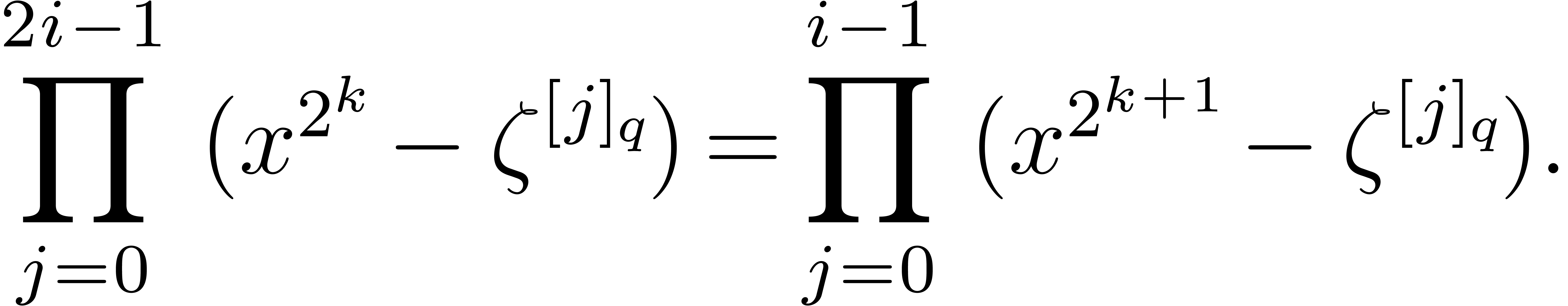

Proof. This follows by grouping the terms of

indices  and

and  in

in  , the following equality holds:

, the following equality holds:

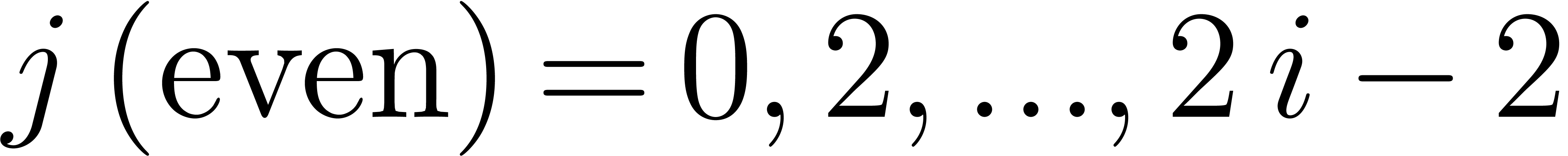

Indeed, for all even  ,

,  ; thus, the left-hand side is the

product of all

; thus, the left-hand side is the

product of all  , for

, for  . The claim follows by writing

. The claim follows by writing

, observing that

, observing that  .

.

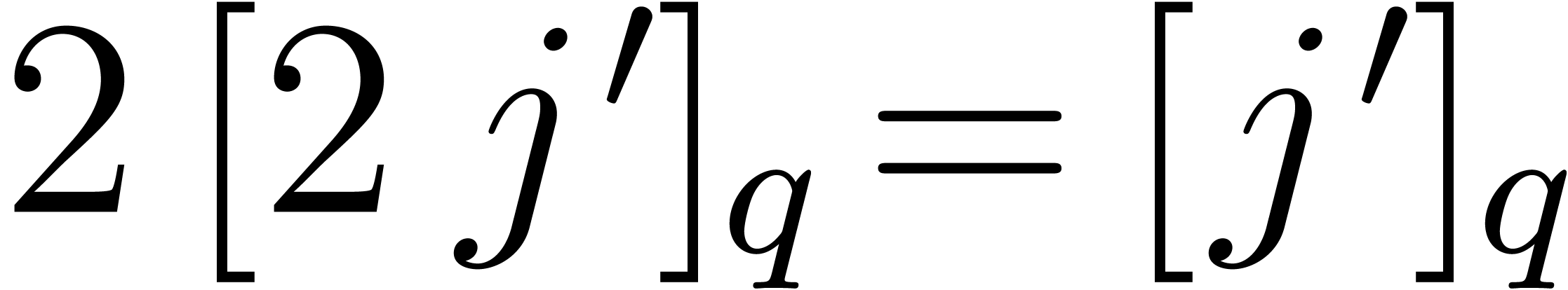

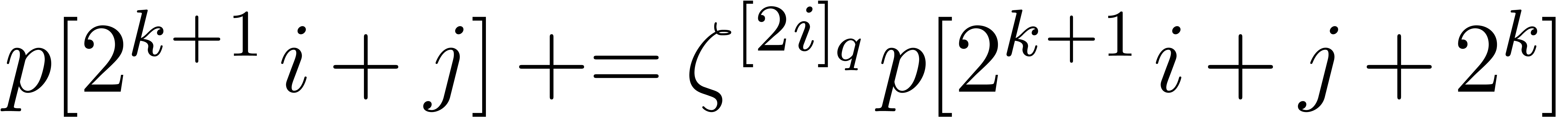

The previous lemma implies an algorithm of complexity  that takes as input the coefficients

that takes as input the coefficients  on the

Newton basis, and outputs

on the

Newton basis, and outputs  on the monomial basis.

It suffices to compute all polynomials

on the monomial basis.

It suffices to compute all polynomials  (on the

monomial basis) by means of the recursive formula. Computing

(on the

monomial basis) by means of the recursive formula. Computing  from

from  and

and  takes

takes  additions and

additions and  multiplications by powers of

multiplications by powers of  ,

so going from index

,

so going from index  to

to  takes a total of

takes a total of  additions and

additions and  multiplications. The inverse conversion takes time

multiplications. The inverse conversion takes time  as well, since knowing

as well, since knowing  , we

can recover first

, we

can recover first  for free as the high-degree

terms of

for free as the high-degree

terms of  , then

, then  using

using  additions and

additions and  multiplications.

multiplications.

The conversion algorithm from the Newton to the monomial basis can be

depicted as follows, in the case  ,

,

. The flow of the algorithm

goes down, from

. The flow of the algorithm

goes down, from  to

to  ;

the

;

the  th row contains the 16

coefficients of the polynomials

th row contains the 16

coefficients of the polynomials  (on the monomial

basis), in that order, and each oblique line corresponds to a

multiplication by a root of unity. The algorithm does only

“half-butterflies”, compared to the FFT algorithm.

(on the monomial

basis), in that order, and each oblique line corresponds to a

multiplication by a root of unity. The algorithm does only

“half-butterflies”, compared to the FFT algorithm.

If we assume that  , we will

be able to avoid useless computations, by keeping track of the zero

coefficients in the polynomials

, we will

be able to avoid useless computations, by keeping track of the zero

coefficients in the polynomials  .

The next figure shows the situation for

.

The next figure shows the situation for  ;

this is similar to what happens in van der Hoeven's TFT algorithm, but

much simpler (here, at each level, we can easily locate the zero

coefficients).

;

this is similar to what happens in van der Hoeven's TFT algorithm, but

much simpler (here, at each level, we can easily locate the zero

coefficients).

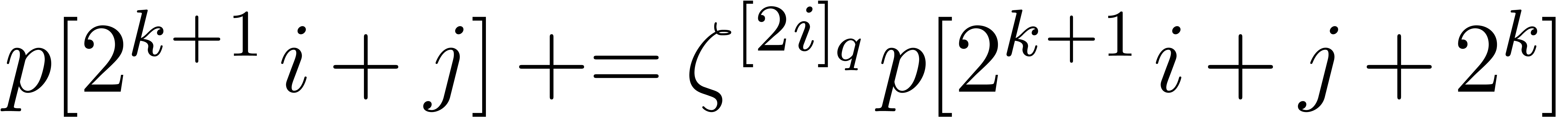

The pseudo-codes for the conversion from Newton basis to monomial basis and the inverse transformation are as follows:

Algorithm TFT-Newton-to-Monomial

of

length

of

length  that contains the coefficients

that contains the coefficients  w.r.t. the Newton basis

w.r.t. the Newton basis

now contains the coefficients

now contains the coefficients  w.r.t. the monomial basis

w.r.t. the monomial basis

for

,

,

for

for

for

Algorithm TFT-Monomial-to-Newton

of

length

of

length  that contains the coefficients

that contains the coefficients  w.r.t. the monomial basis

w.r.t. the monomial basis

now contains the coefficients

now contains the coefficients  w.r.t. the Newton basis

w.r.t. the Newton basis

for

,

,

for

for

for

We deduce the following complexity result for the conversions, which

refines Lemma 1; it is of the form  , with a tight control on the constants.

, with a tight control on the constants.

,

given

,

given  , one can compute

, one can compute

using

using  additions or

subtractions, and

additions or

subtractions, and  multiplications by roots of

unity, with

multiplications by roots of

unity, with  .

.

Proof. For any given  , we do

, we do  additions/subtractions and multiplications. This is at most

additions/subtractions and multiplications. This is at most  .

.

The equivalent of Lemma 2 for the TFT points comes by using van der Hoeven's TFT algorithms for evaluation and interpolation on the monomial basis, instead of the general algorithms.

Let  be a finite initial segment and let

be a finite initial segment and let  be such that

be such that  is contained in

is contained in

. We present here some

geometric operations on

. We present here some

geometric operations on  that will be useful for

the evaluation and interpolation algorithms.

that will be useful for

the evaluation and interpolation algorithms.

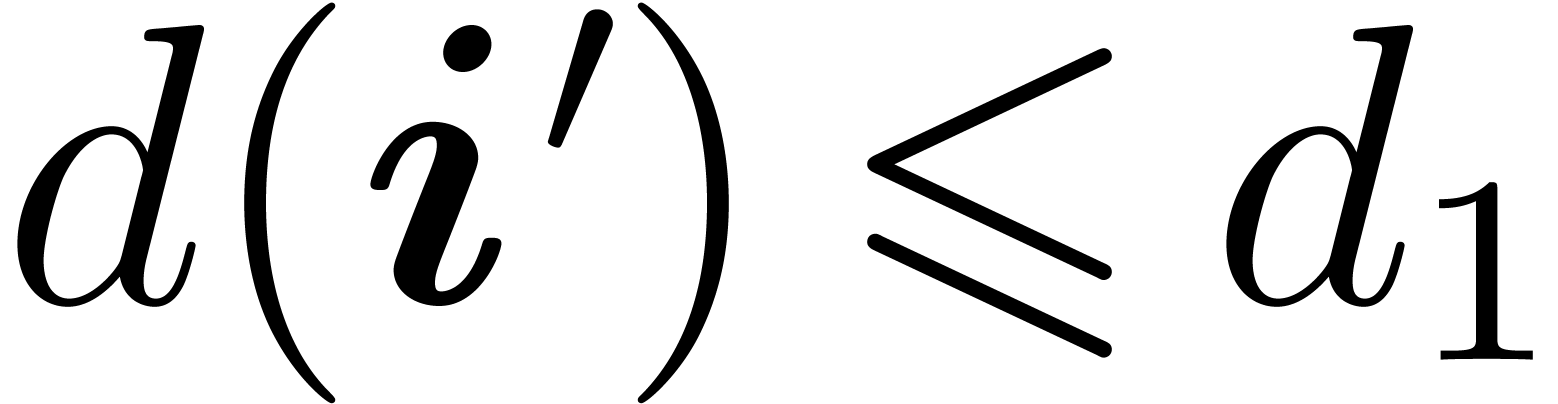

the projection

the projection

of  on the

on the  -coordinate

plane. For

-coordinate

plane. For  in

in  ,

we let

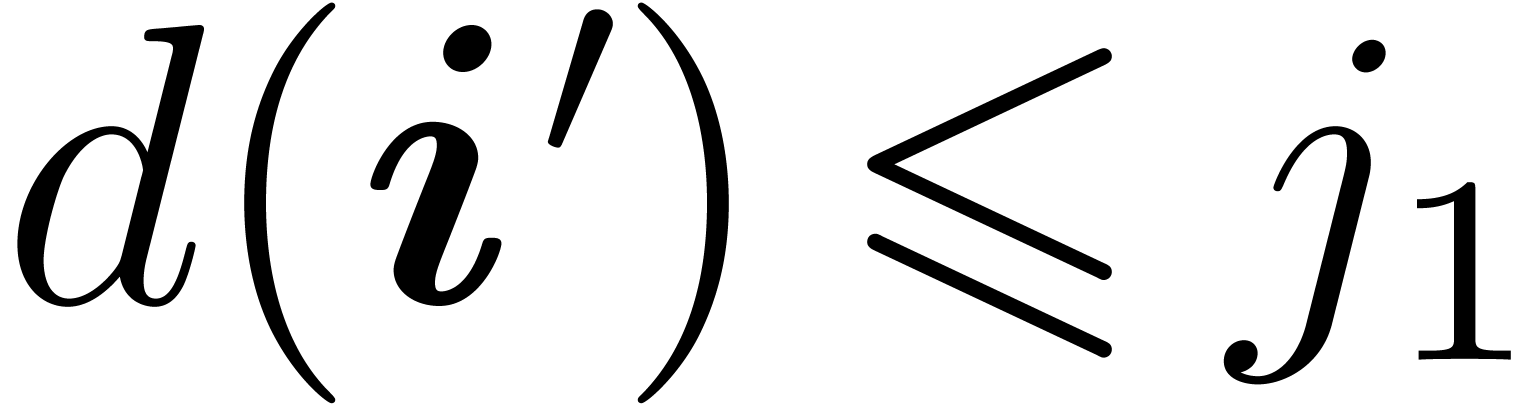

,

we let  be the unique integer such that

be the unique integer such that  and

and  . In

particular,

. In

particular,  holds for all

holds for all  .

.

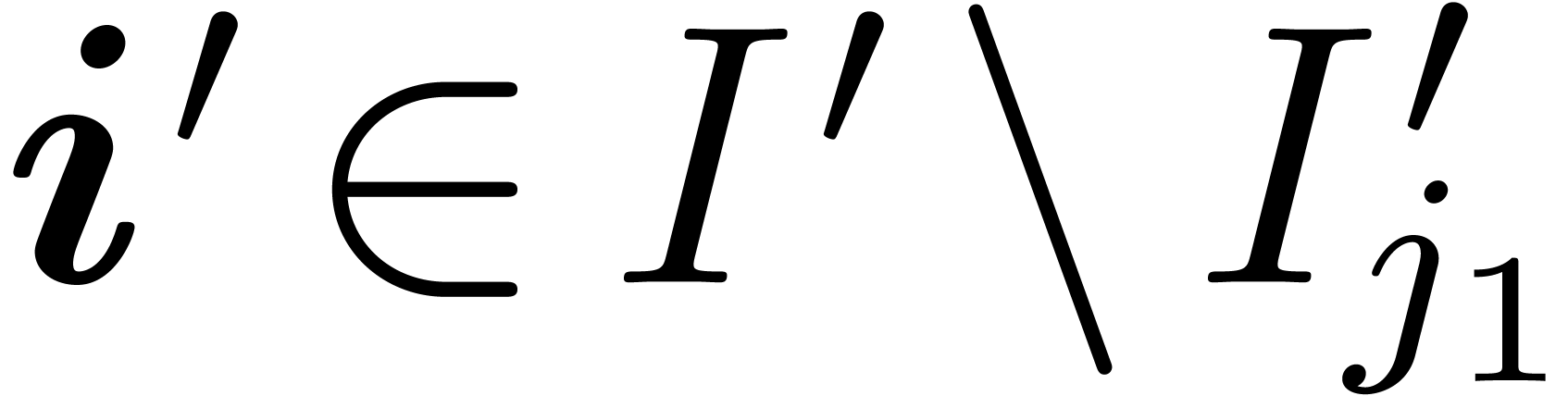

In Figure 1, we have  ;

;

consists of the points of ordinates

consists of the points of ordinates  on the vertical axis, with

on the vertical axis, with  ,

,

,

,  and

and

.

.

Finally, if  is a collection of points as defined

in the introduction, with

is a collection of points as defined

in the introduction, with  for all

for all  , then we will write

, then we will write  .

.

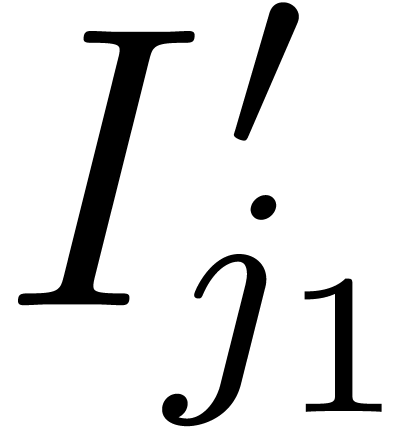

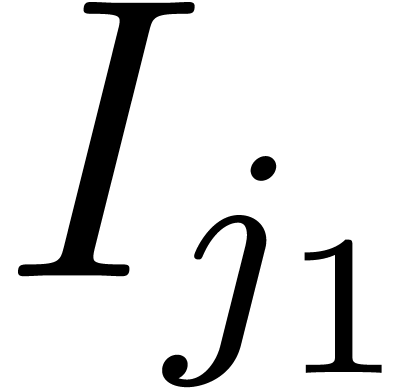

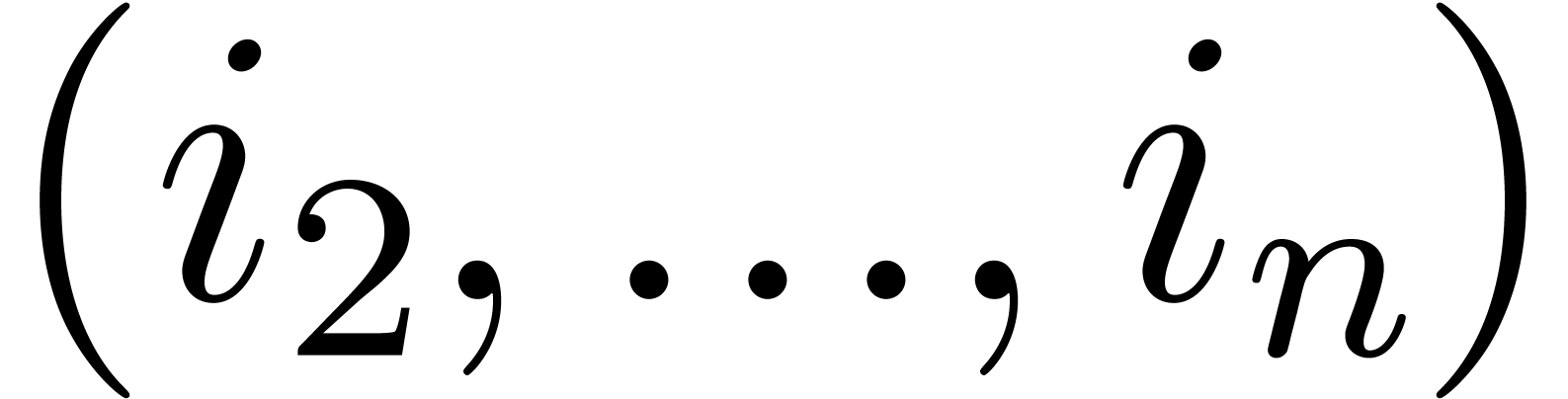

, we let

, we let  be the section

be the section

and we let  be the projection of

be the projection of  on the

on the  -coordinate plane. In

other words,

-coordinate plane. In

other words,  is in

is in  if

and only if

if

and only if  is in

is in  .

We have the following equivalent definition

.

We have the following equivalent definition

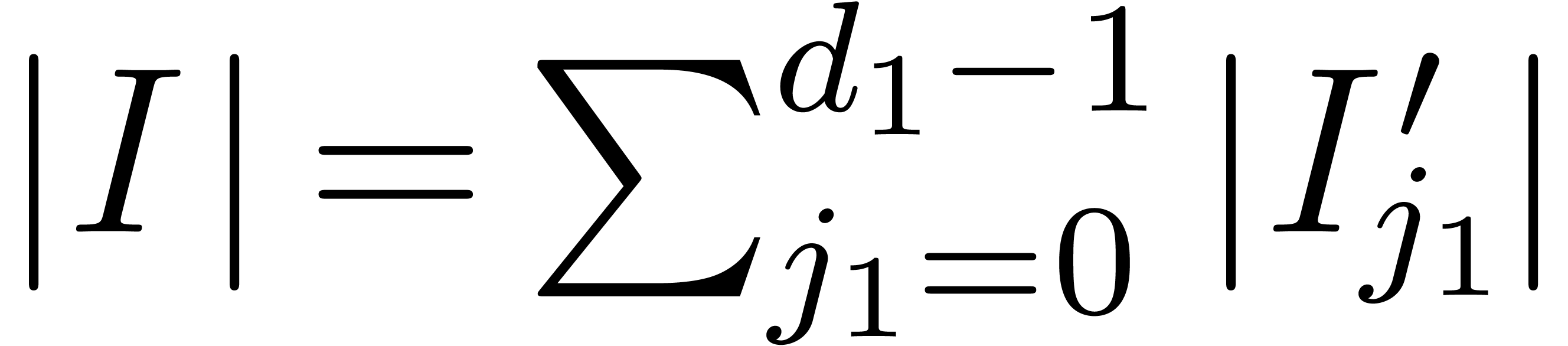

Because the sets  form a partition of

form a partition of  , we deduce the equality

, we deduce the equality  . Notice also that all

. Notice also that all  are initial segments in

are initial segments in  .

In Figure 1, we have

.

In Figure 1, we have  ,

,

,

,  ,

,  and

and  .

.

From now on, we focus on multivariate polynomials. In all this section,

we fix a finite initial segment  and

and  such that

such that  .

Naturally, polynomials in

.

Naturally, polynomials in  may be written in the

monomial basis

may be written in the

monomial basis  , but we may

also use the multivariate Newton basis

, but we may

also use the multivariate Newton basis  ,

defined by

,

defined by

Generalizing the univariate notation, given  , we will consider a mixed monomial-Newton basis

, we will consider a mixed monomial-Newton basis

with

with

As in the univariate case, we will write  to

indicate that

to

indicate that  is written on the corresponding

basis.

is written on the corresponding

basis.

It will be useful to rely on the following decomposition. Let  be in

be in  , written

on the basis

, written

on the basis  . Collecting

coefficients, we obtain

. Collecting

coefficients, we obtain

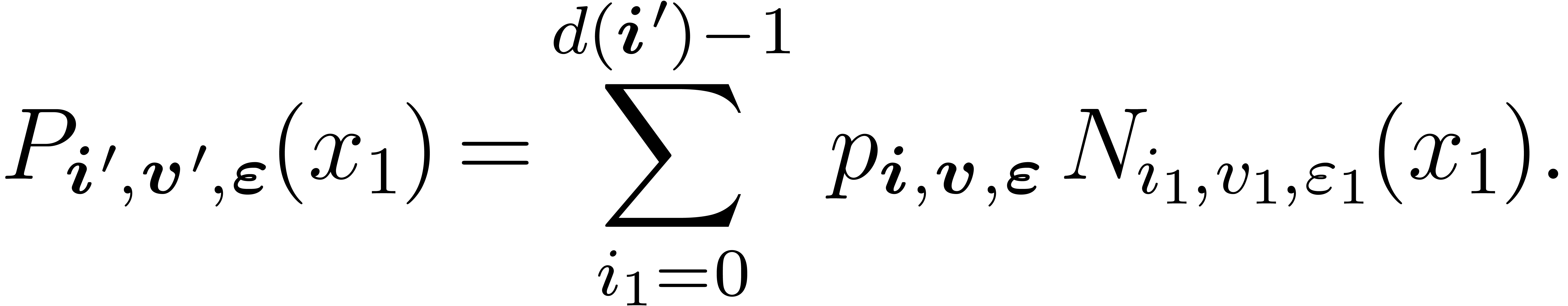

|

(2) |

with  and

and

|

(3) |

Keep in mind that if the indices  and

and  are omitted, we are using the Newton basis.

are omitted, we are using the Newton basis.

be in

be in  ,

and let

,

and let  be obtained by replacing

be obtained by replacing  by

by  in

in  , for some

, for some  in

in  . Let

. Let  be in

be in  . Given

. Given  , one can compute

, one can compute  in

time

in

time

Proof. Using a permutation of coordinates, we

reduce to the case when  .

Using the above notations, it suffices to convert

.

Using the above notations, it suffices to convert  from the basis

from the basis  to the basis

to the basis  for all

for all  . By Lemma 1,

each conversion can be done in time

. By Lemma 1,

each conversion can be done in time  ,

so the total cost is

,

so the total cost is

Since the function  is increasing, we get the

upper bound

is increasing, we get the

upper bound

the conclusion follows from the equality  .

.

Let us write  and

and  ,

where both vectors have length

,

where both vectors have length  .

Then, the basis

.

Then, the basis  is the monomial basis, whereas

the basis

is the monomial basis, whereas

the basis  is the Newton basis. Changing one

coordinate at a time, we obtain the following corollary, which shows how

to convert from the monomial basis to the Newton basis, and back.

is the Newton basis. Changing one

coordinate at a time, we obtain the following corollary, which shows how

to convert from the monomial basis to the Newton basis, and back.

The remarks in Section 2 about special families of points

apply here as well: if the points in  have

special properties (e.g.,

have

special properties (e.g.,  is in geometric

progression, or the

is in geometric

progression, or the  are TFT points), the cost

may be reduced (both in the geometric case and in the TFT case, we may

save the factors

are TFT points), the cost

may be reduced (both in the geometric case and in the TFT case, we may

save the factors  ).

).

We are now in a position to state and prove our main result.

such that

such that  , with

, with  written on the

monomial basis of

written on the

monomial basis of  , one can

evaluate

, one can

evaluate  at

at  in time

in time

Conversely, given the values of  at

at  , one can compute the

representation of

, one can compute the

representation of  on the monomial basis of

on the monomial basis of

with the same cost.

with the same cost.

Using the bound  , and the

fact that

, and the

fact that  holds for all

holds for all  , we deduce the simplified bound

, we deduce the simplified bound  claimed in the introduction. Remark also that our result matches the

cost of the algorithm of [Pan94], which applies in the

special case of evaluation-interpolation at a grid.

claimed in the introduction. Remark also that our result matches the

cost of the algorithm of [Pan94], which applies in the

special case of evaluation-interpolation at a grid.

The input  to the evaluation algorithm is given

on the monomial basis of

to the evaluation algorithm is given

on the monomial basis of  ;

however, internally to the algorithm, we use the Newton basis. Thus,

before entering the (recursive) evaluation algorithm, we switch once and

for all to the Newton basis; this does not harm complexity, in view of

Lemma 6. Similarly, the interpolation algorithm uses the

Newton basis, so we convert the result to the monomial basis after we

have completed the interpolation.

;

however, internally to the algorithm, we use the Newton basis. Thus,

before entering the (recursive) evaluation algorithm, we switch once and

for all to the Newton basis; this does not harm complexity, in view of

Lemma 6. Similarly, the interpolation algorithm uses the

Newton basis, so we convert the result to the monomial basis after we

have completed the interpolation.

Remark also that if the points  are in geometric

progression for each

are in geometric

progression for each  , then

one may eliminate the factors

, then

one may eliminate the factors  from the

complexity bound. Using TFT evaluation points allows for similar

reductions.

from the

complexity bound. Using TFT evaluation points allows for similar

reductions.

The algorithm follows a pattern similar to Pan's multivariate evaluation

and interpolation at a grid [Pan94]: e.g. for evaluation,

we evaluate at the fibers above each  ,

and proceed recursively with polynomials obtained from the sections

,

and proceed recursively with polynomials obtained from the sections

. Using the Newton basis

allows us to alleviate the issues coming from the fact that

. Using the Newton basis

allows us to alleviate the issues coming from the fact that  is not a grid.

is not a grid.

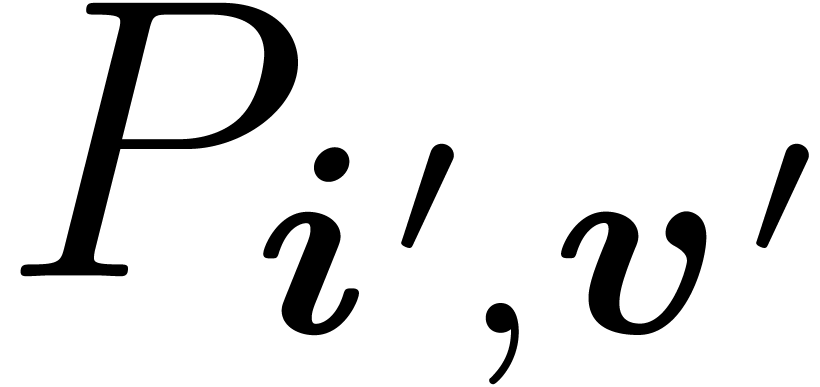

Let  be written (in the Newton basis) as in the

previous section:

be written (in the Newton basis) as in the

previous section:

where we write  and

and

To  , we associate the

, we associate the  -variate polynomial

-variate polynomial

The key to our algorithms is the following proposition.

Proof. First, we make both quantities explicit. The left-hand side is given by

whereas the right-hand side is

where in both cases we write  .

Thus, to conclude, it is enough to prove that, for

.

Thus, to conclude, it is enough to prove that, for  , we have

, we have  .

.

Indeed, recall that the assumption  implies

implies  . On the other hand, we have

. On the other hand, we have  , whence the inequality

, whence the inequality  , where we write

, where we write  . In particular, we deduce

. In particular, we deduce  , which in turn implies that

, which in turn implies that  . Thus, there exists

. Thus, there exists  such that

such that  . This implies that

. This implies that

, as requested.

, as requested.

Given  , with

, with  written in the Newton basis

written in the Newton basis  ,

we show here how evaluate

,

we show here how evaluate  at

at  . The algorithm is the following.

. The algorithm is the following.

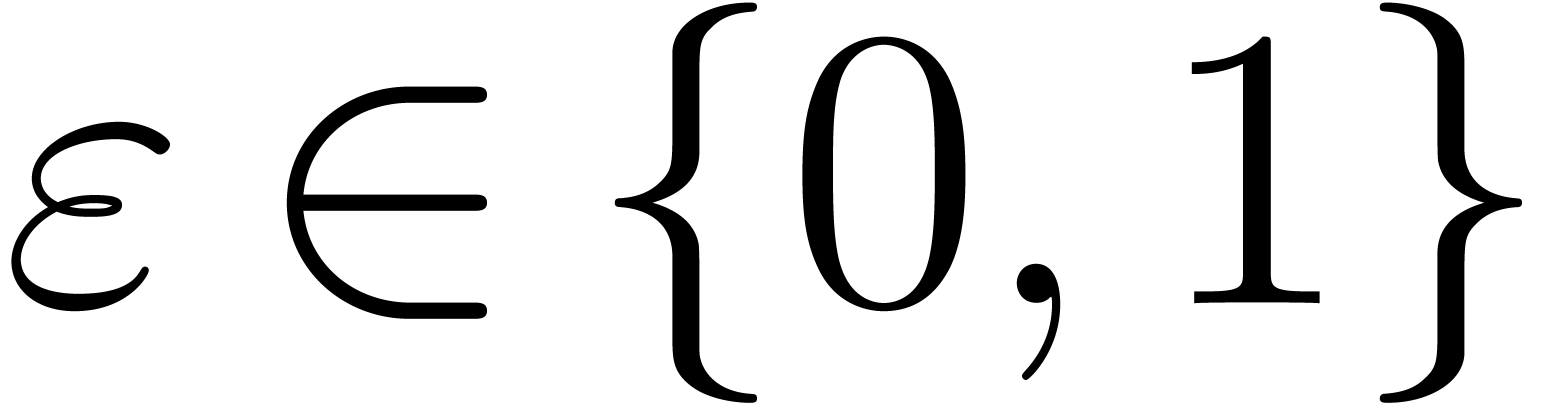

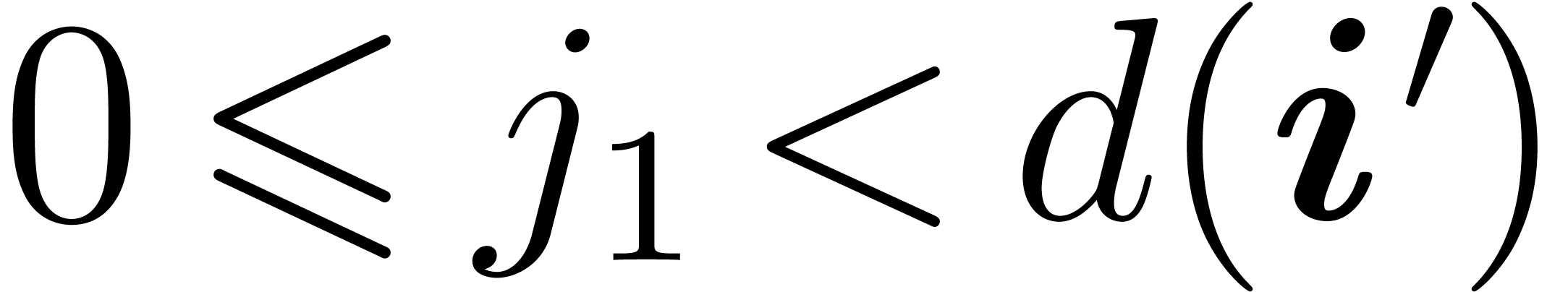

If  ,

,  is a constant; we return it unchanged.

is a constant; we return it unchanged.

Otherwise, we compute all values  ,

for

,

for  and

and  ,

by applying the fast univariate evaluation algorithm to each

,

by applying the fast univariate evaluation algorithm to each  . For

. For  , and for

, and for  ,

we have (by definition)

,

we have (by definition)  ,

so we have all the information we need to form the polynomial

,

so we have all the information we need to form the polynomial  . Then, we evaluate recursively

each

. Then, we evaluate recursively

each  at

at  ,

for

,

for  .

.

at

at  in time

in time

Proof. Correctness follows directly from

Proposition 8, so we can focus on the cost analysis. Let

denote the cost of this algorithm. The former

discussion shows that

denote the cost of this algorithm. The former

discussion shows that  and that

and that  is the sum of two contributions:

is the sum of two contributions:

the cost of computing all values  ,

for

,

for  and

and

the cost of the recursive calls on  for

for  .

.

Lemma 2 shows that the former admits the upper bound

as in the proof of Lemma 5, this can be bounded by

As to the recursive calls, notice that all  are

contained in

are

contained in  , which is

contained in

, which is

contained in  . Thus, for some

constant

. Thus, for some

constant  , we obtain the

inequality

, we obtain the

inequality

|

(4) |

To conclude, we prove that for all  ,

for all

,

for all  and for any initial segment

and for any initial segment  , we have

, we have

|

(5) |

Such an inequality clearly holds for  .

Assume by induction on

.

Assume by induction on  that for any

that for any  and any initial segment

and any initial segment  ,

we have

,

we have

|

(6) |

To prove (5), we substitute (6) in (4), to get

Since  , we are done.

, we are done.

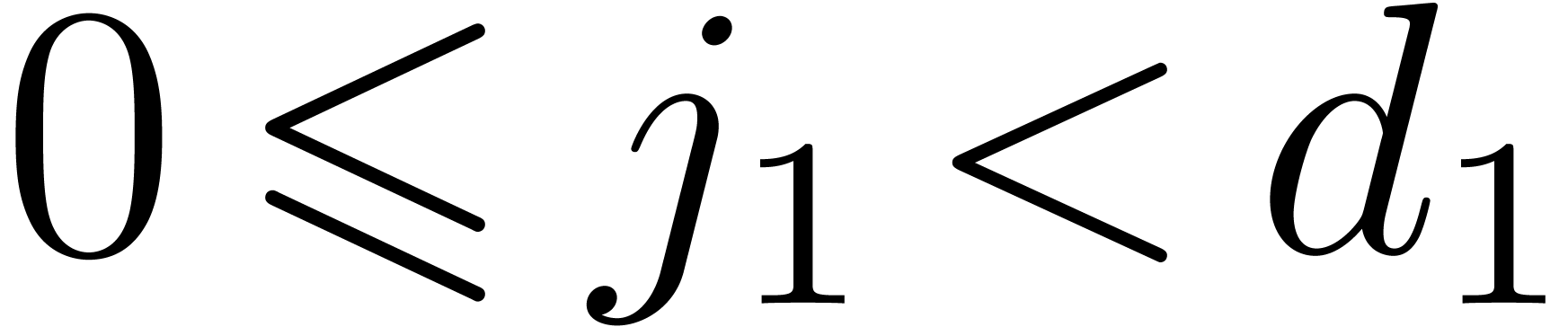

The interpolation algorithm is obtained by reversing step-by-step the

evaluation algorithm. On input, we take  ,

with

,

with  ; the output is the

unique polynomial

; the output is the

unique polynomial  such that

such that  for all

for all  .

.

If  ,

,  consists of a single entry; we return it unchanged.

consists of a single entry; we return it unchanged.

Otherwise, we recover recursively all  ,

for

,

for  . This is made

possible by Proposition 8, which shows that we actually

know the values of each

. This is made

possible by Proposition 8, which shows that we actually

know the values of each  at the corresponding

at the corresponding

. Knowing all

. Knowing all  gives us the values

gives us the values  for all

for all

and

and  .

It suffices to interpolate each

.

It suffices to interpolate each  on the

Newton basis

on the

Newton basis  to conclude.

to conclude.

Correctness of this algorithm is clear and the following complexity bound is proved in a similar way as in the case of evaluation.

in time

in time

We conclude with an application of our results to the multiplication of

polynomials and power series. Let  and

and  be as above. We let

be as above. We let  ,

as we assume that

,

as we assume that  has cardinality at least

has cardinality at least  , so that we can find

, so that we can find  , where

, where  consists of

pairwise distinct entries in

consists of

pairwise distinct entries in  .

Let

.

Let  , so that

, so that  .

.

We discuss here the case when we want to multiply two polynomials  and

and  with

with  . In this case, we may use a simple

evaluation-interpolation strategy.

. In this case, we may use a simple

evaluation-interpolation strategy.

Perform multi-point evaluations of  and

and  at

at  ;

;

Compute the componentwise product of the evaluations;

Interpolate the result at  to yield the

product

to yield the

product  .

.

By Theorem 7, this can be done in time

If  admits at least

admits at least  points in geometric progression (or at least

points in geometric progression (or at least  TFT

points), then the factor

TFT

points), then the factor  may be removed. This

result should be compared to the algorithm of [CKL89],

which has complexity

may be removed. This

result should be compared to the algorithm of [CKL89],

which has complexity  ; that

algorithm applies to more general monomial supports, but under more

restrictive conditions on the base field.

; that

algorithm applies to more general monomial supports, but under more

restrictive conditions on the base field.

Let now  be the monomial ideal generated by

be the monomial ideal generated by  . We discuss here the complexity of

multiplication modulo in

. We discuss here the complexity of

multiplication modulo in  . To

our knowledge, no general algorithm with a complexity quasi-linear in

. To

our knowledge, no general algorithm with a complexity quasi-linear in

is known.

is known.

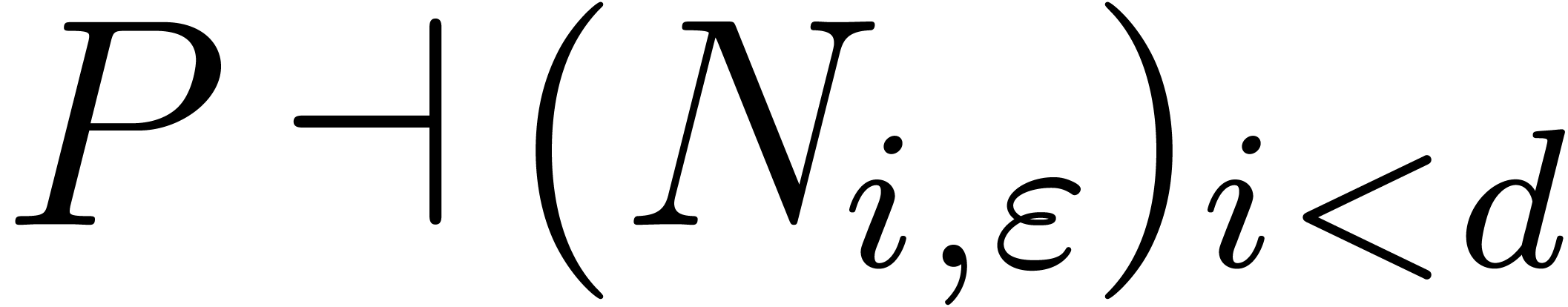

Let us first recall an algorithm of [Sch05] and show how

our results enable us to improve it. Theorem 1 of [Sch05]

gives an algorithm for multiplication in  ,

that relies on the following operations:

,

that relies on the following operations:

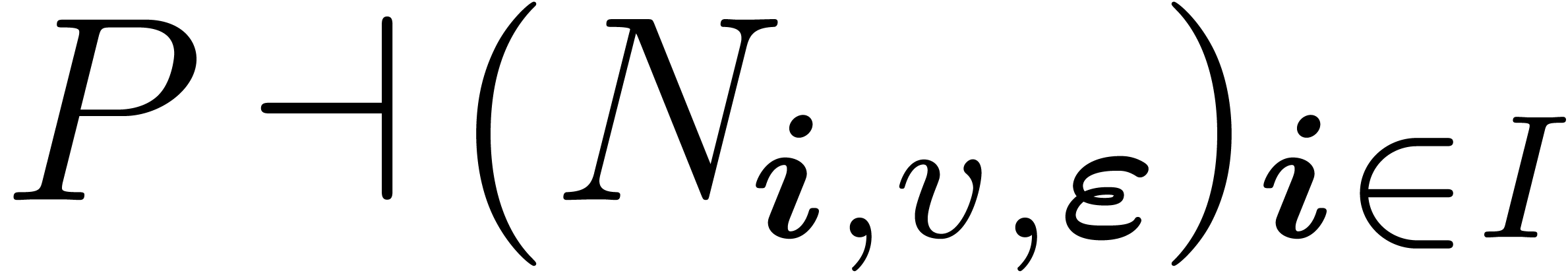

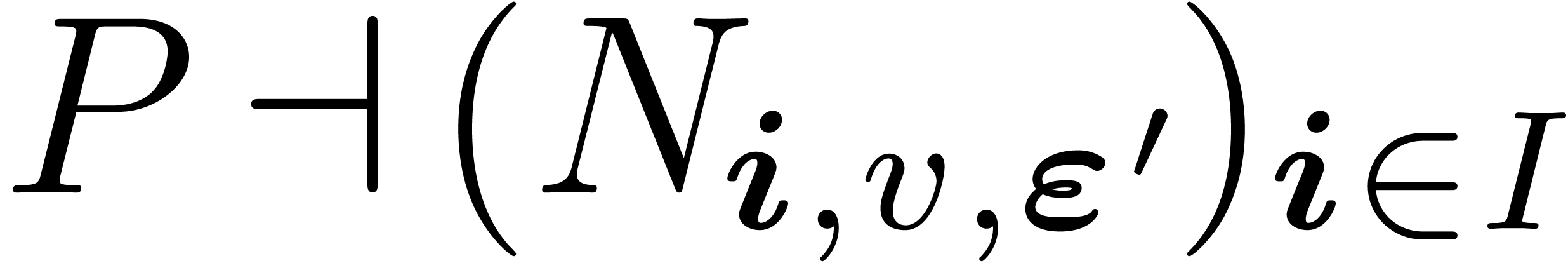

multi-point evaluations at

multi-point evaluations at  of polynomials in

of polynomials in  ;

;

univariate power series multiplication in

precision

univariate power series multiplication in

precision  ;

;

interpolations at

interpolations at  of

polynomials in

of

polynomials in  .

.

The paper [Sch05] does not specify how to do the evaluation and interpolation (for lack of an efficient solution); using our results, it becomes possible to fill all the gaps in this algorithm. Applying Theorem 7, without doing any simplification, we obtain a cost of

Using the inequality  , this

gives the upper bound

, this

gives the upper bound  . If we

can take at least

. If we

can take at least  points in geometric

progression in

points in geometric

progression in  (or at least

(or at least  TFT points), then the upper bound reduces to

TFT points), then the upper bound reduces to  .

.

The most important case of truncated power series multiplication is when

we truncate with respect to the total degree. In other words, we take

. In that case, several

alternative strategies to the one of the former subsection are available

[LS03, vdH04, vdH05, vdHL09],

and we refer to [vdH05, vdHL09] for some

benchmarks.

. In that case, several

alternative strategies to the one of the former subsection are available

[LS03, vdH04, vdH05, vdHL09],

and we refer to [vdH05, vdHL09] for some

benchmarks.

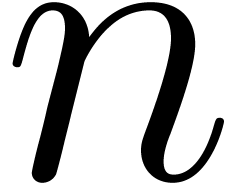

As it turns out, one can apply the result from Section 6.1,

in the special case of polynomials supported in total degree, to improve

these algorithms, when  admits at least

admits at least  points in geometric progression (or at least

points in geometric progression (or at least  TFT points). Indeed, the algorithms from [LS03,

vdHL09] rely on multivariate polynomial multiplication.

Using the result of Section 6.1 in these algorithms

(instead of sparse polynomial multiplication), we obtain a new algorithm

of time complexity

TFT points). Indeed, the algorithms from [LS03,

vdHL09] rely on multivariate polynomial multiplication.

Using the result of Section 6.1 in these algorithms

(instead of sparse polynomial multiplication), we obtain a new algorithm

of time complexity  instead of

instead of  . For constant

. For constant  ,

this removes a factor

,

this removes a factor  from the asymptotic time

complexity.

from the asymptotic time

complexity.

To finish, we would like to point out that the present paper almost repairs an error in [vdH04, Section 5], which was first announced in [vdH05]. Indeed, it was implicitly, but mistakenly, assumed that Proposition 8 also holds for monomial bases. The present “fix” simply consists of converting to the Newton basis before evaluating, and similarly for the inverse. The asymptotic time complexity analysis from [vdH04, Section 5] actually remains valid up to a small but non trivial constant factor. When using TFT transforms in combination with the algorithms from section 2.2, we expect the constant factor to be comprised between one and three in practice.

A. Borodin and R. T. Moenck. Fast modular transforms. Journal of Computer and System Sciences, 8:366–386, 1974.

D. Bini and V. Y. Pan. Polynomial and matrix computations. Vol. 1. Birkhäuser Boston Inc., Boston, MA, 1994. Fundamental algorithms.

A. Bostan and É. Schost. Polynomial evaluation and interpolation on special sets of points. Journal of Complexity, 21(4):420–446, 2005.

D. G. Cantor and E. Kaltofen. On fast multiplication of polynomials over arbitrary algebras. Acta Informatica, 28(7):693–701, 1991.

J. Canny, E. Kaltofen, and Y. Lakshman. Solving systems of non-linear polynomial equations faster. In G. Gonnet, editor, ISSAC '89, pages 121–128, Portland, Oregon, 1989. ACM.

J. von zur Gathen and J. Gerhard. Modern Computer Algebra. Cambridge University Press, 2-nd edition, 2003.

G. Lecerf and É. Schost. Fast multivariate power series multiplication in characteristic zero. SADIO Electronic Journal on Informatics and Operations Research, 5(1):1–10, September 2003.

F. Mora. De nugis Groebnerialium 2: Applying Macaulay's trick in order to easily write a Gröbner basis. Applicable Algebra in Engineering, Communication and Computing, 13(6):437–446, 2003.

G. Mühlbach. On multivariate interpolation by generalized polynomials on subsets of grids. Computing, 40(3):201–215, 1988.

V. Pan. Simple multivariate polynomial multiplication. Journal of Symbolic Computation, 18(3):183–186, 1994.

T. Sauer. Lagrange interpolation on subgrids of tensor product grids. Mathematics of Computation, 73(245):181–190, 2004.

É. Schost. Multivariate power series multiplication. In M. Kauers, editor, ISSAC '05, pages 293–300, New York, NY, USA, 2005. ACM.

J. van der Hoeven. Relax, but don't be too lazy. Journal of Symbolic Computation, 34:479–542, 2002.

J. van der Hoeven. The truncated Fourier transform and applications. In J. Gutierrez, editor, ISSAC '04, pages 290–296, Univ. of Cantabria, Santander, Spain, July 4–7 2004. ACM.

J. van der Hoeven. Notes on the Truncated Fourier Transform. Technical Report 2005-5, Université Paris-Sud, Orsay, France, 2005.

J. van der Hoeven and G. Lecerf. On the bit-complexity of sparse polynomial multiplication. Technical Report http://arxiv.org/abs/0901.4323v1, Arxiv, 2009.

H. Werner. Remarks on Newton type multivariate interpolation for subsets of grids. Computing, 25(2):181–191, 1980.