| Implementing the tangent Graeffe root finding method   |

|

| May 2, 2020 |

|

. This paper is

part of a project that has received funding from the French

“Agence de l'innovation de défense”.

. This paper is

part of a project that has received funding from the French

“Agence de l'innovation de défense”.

. This article has

been written using GNU TeXmacs [17].

. This article has

been written using GNU TeXmacs [17].

The tangent Graeffe method has been developed for the efficient computation of single roots of polynomials over finite fields with multiplicative groups of smooth order. It is a key ingredient of sparse interpolation using geometric progressions, in the case when blackbox evaluations are comparatively cheap. In this paper, we improve the complexity of the method by a constant factor and we report on a new implementation of the method and a first parallel implemenation. |

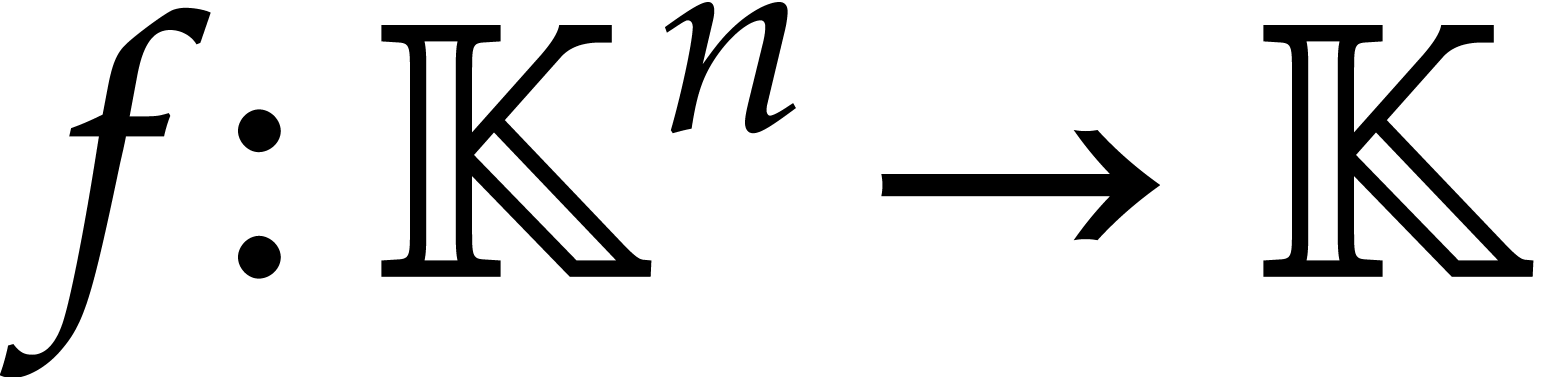

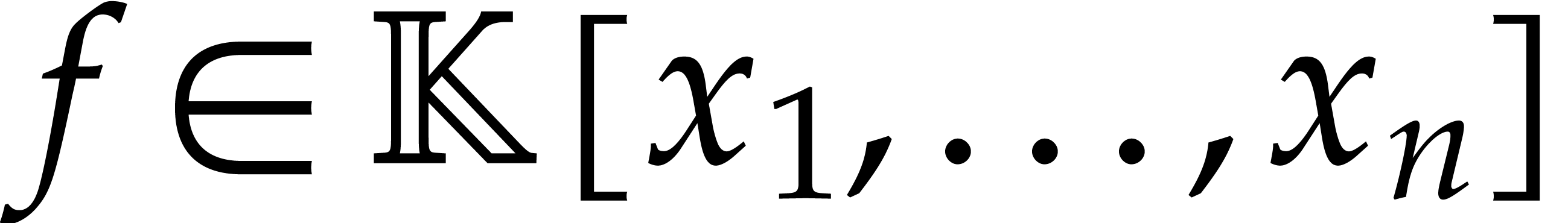

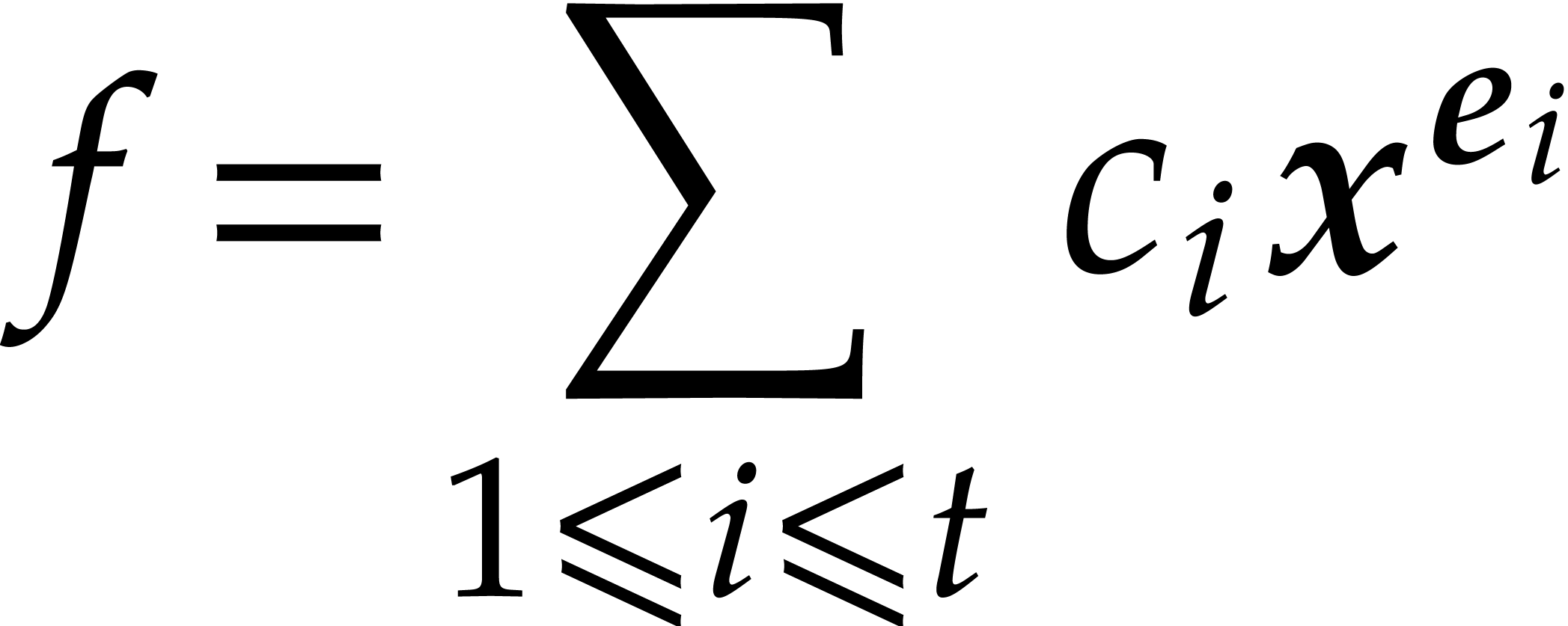

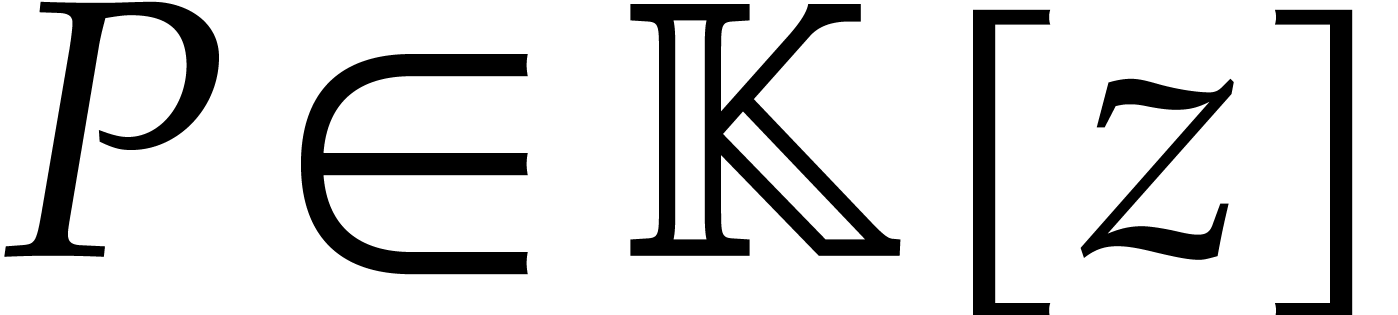

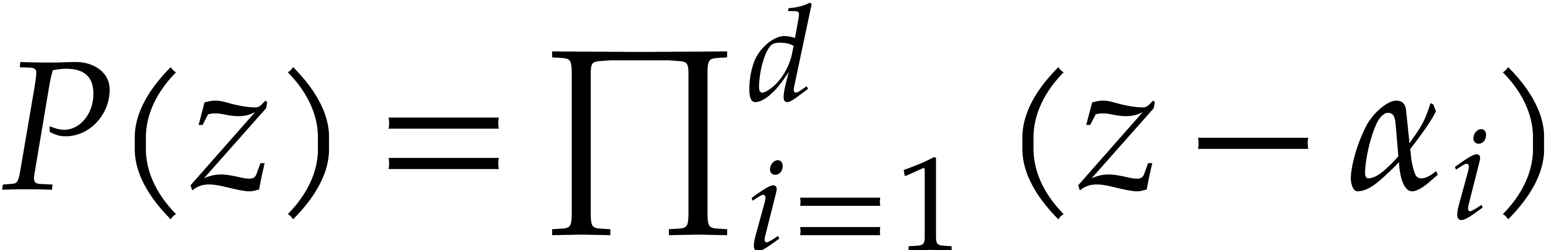

Consider a polynomial function  over a field

over a field  given through a black box capable of evaluating

given through a black box capable of evaluating  at points in

at points in  .

The problem of sparse interpolation is to recover the

representation of

.

The problem of sparse interpolation is to recover the

representation of  in its usual form, as a linear

combination

in its usual form, as a linear

combination

|

(1) |

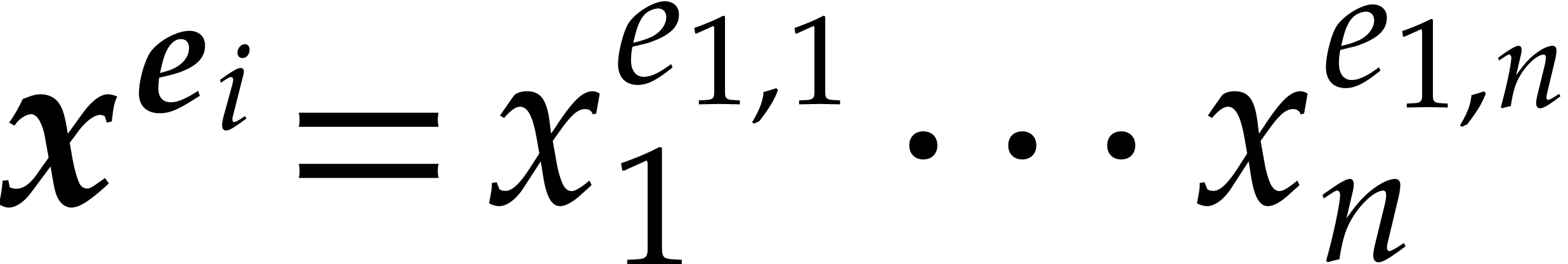

of monomials  . One popular

approach to sparse interpolation is to evaluate

. One popular

approach to sparse interpolation is to evaluate  at points in a geometric progression. This approach goes back to work of

Prony in the eighteen's century [29] and became well known

after Ben-Or and Tiwari's seminal paper [2]. It has widely

been used in computer algebra, both in theory [6, 19,

21–24, 28] and in practice

[8, 9, 15, 16, 18, 20]; see [30] for a nice survey.

at points in a geometric progression. This approach goes back to work of

Prony in the eighteen's century [29] and became well known

after Ben-Or and Tiwari's seminal paper [2]. It has widely

been used in computer algebra, both in theory [6, 19,

21–24, 28] and in practice

[8, 9, 15, 16, 18, 20]; see [30] for a nice survey.

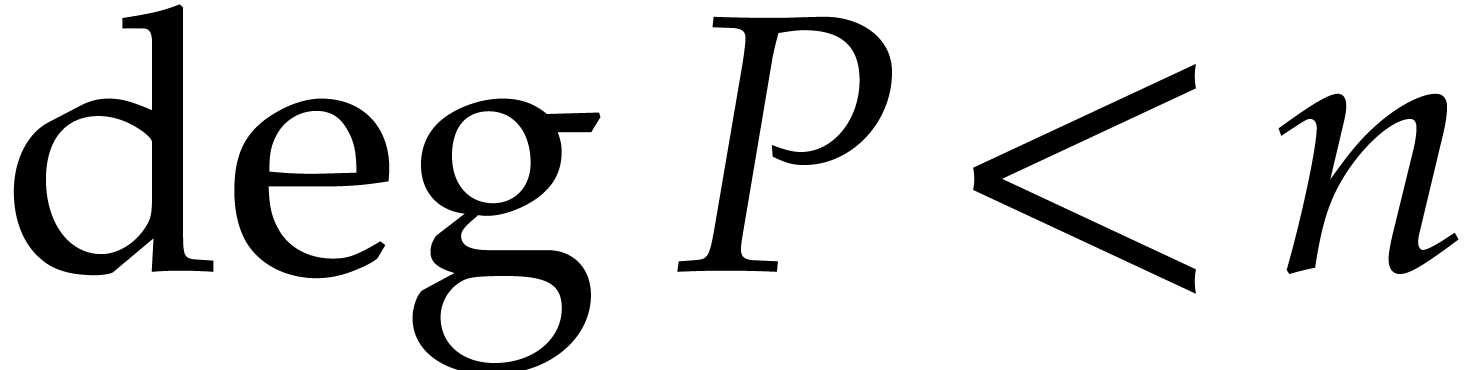

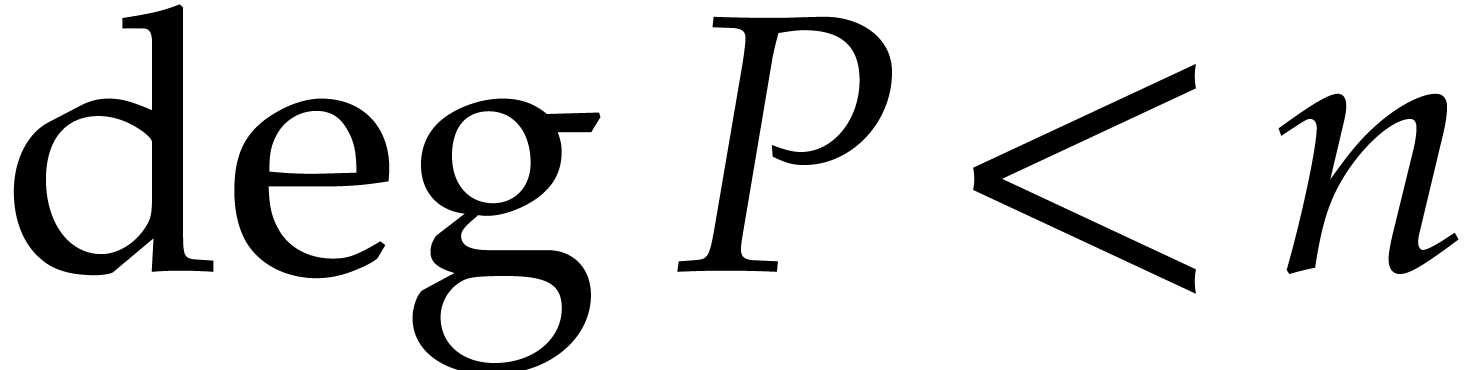

More precisely, if a bound  for the number of

terms

for the number of

terms  is known, then we first evaluate

is known, then we first evaluate  at

at  pairwise distinct points

pairwise distinct points  , where

, where  and

and

for all

for all  .

The generating function of the evaluations at

.

The generating function of the evaluations at  satisfies the identity

satisfies the identity

where  and

and  is of degree

is of degree

. The rational function

. The rational function  can be recovered from

can be recovered from  using

fast Padé approximation [5, 27]. For

well chosen points

using

fast Padé approximation [5, 27]. For

well chosen points  , it is

often possible to recover the exponents

, it is

often possible to recover the exponents  from the

values

from the

values  . If the exponents

. If the exponents

are known, then the coefficients

are known, then the coefficients  can also be recovered using a transposed version of fast

multipoint interpolation [4, 6]. This leaves

us with the question how to compute the roots

can also be recovered using a transposed version of fast

multipoint interpolation [4, 6]. This leaves

us with the question how to compute the roots  of

of

in an efficient way.

in an efficient way.

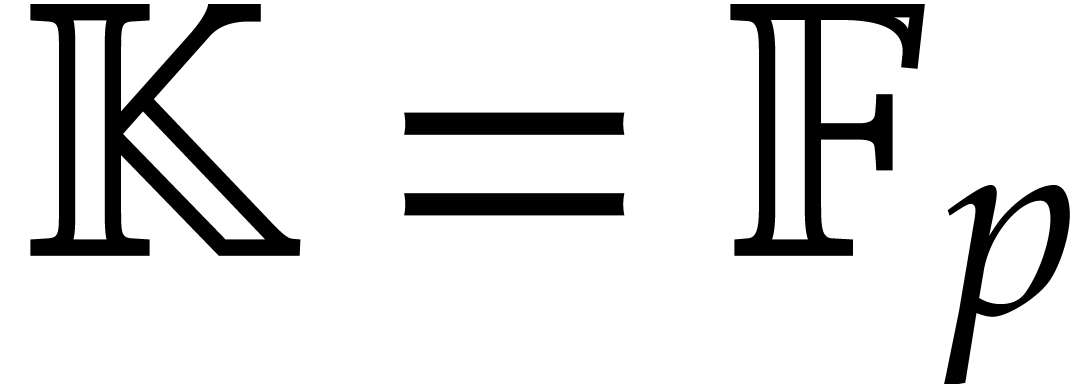

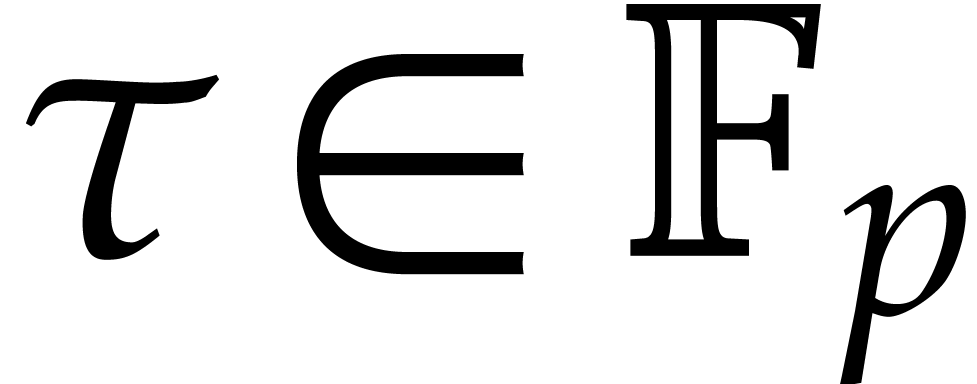

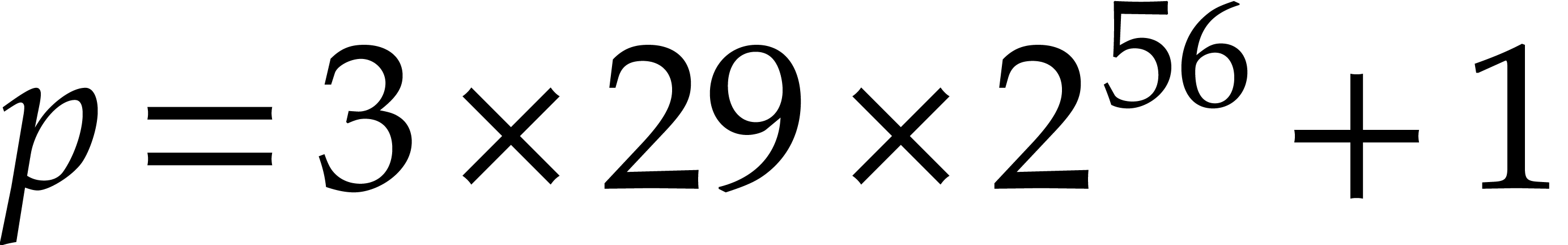

For practical applications in computer algebra, we usually have  , in which case it is most

efficient to use a multi-modular strategy. This means that we rather

work with coefficients in a finite field

, in which case it is most

efficient to use a multi-modular strategy. This means that we rather

work with coefficients in a finite field  ,

where

,

where  is a prime number that we are free to

choose. It is well known that polynomial arithmetic over

is a prime number that we are free to

choose. It is well known that polynomial arithmetic over  can be implemented most efficiently using FFTs when the

order

can be implemented most efficiently using FFTs when the

order  of the multiplicative group is smooth. In

practice, this prompts us to choose

of the multiplicative group is smooth. In

practice, this prompts us to choose  of the form

of the form

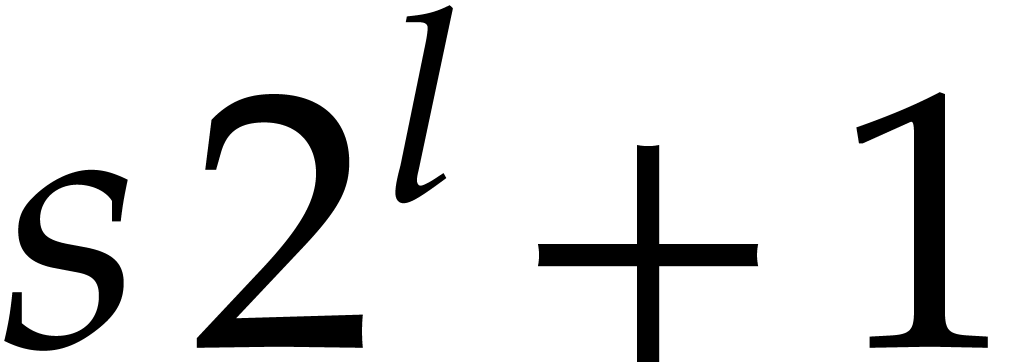

for some small

for some small  and such

that

and such

that  fits into a machine word.

fits into a machine word.

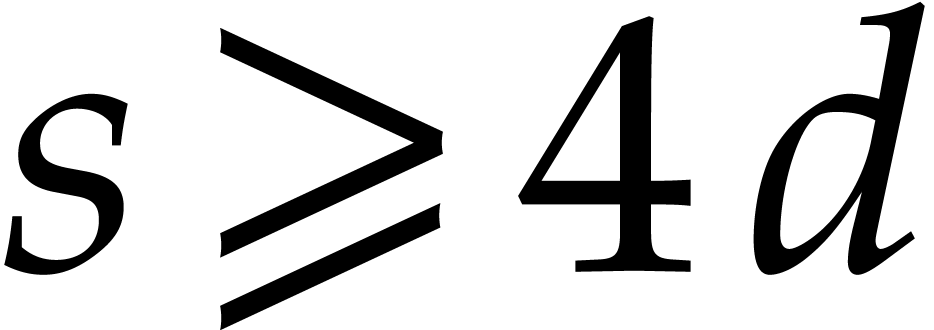

The traditional way to compute roots of polynomials over finite fields

is using Cantor and Zassenhaus' method [7]. In [12,

13], alternative algorithms were proposed for our case of

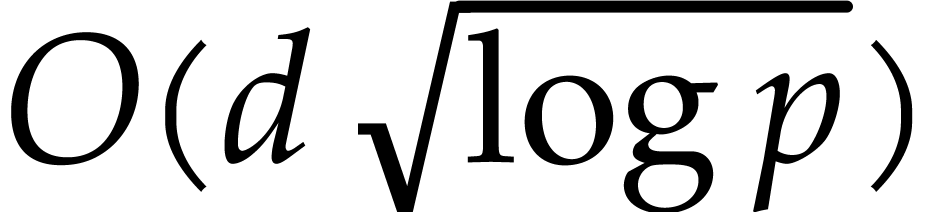

interest when  is smooth. The fastest algorithm

was based on the tangent Graeffe transform and it gains a

factor

is smooth. The fastest algorithm

was based on the tangent Graeffe transform and it gains a

factor  with respect to Cantor–Zassenhaus'

method. The aim of the present paper is to report on a parallel

implementation of this new algorithm and on a few improvements that

allow for a further constant speed-up.

with respect to Cantor–Zassenhaus'

method. The aim of the present paper is to report on a parallel

implementation of this new algorithm and on a few improvements that

allow for a further constant speed-up.

In section 2, we start by recalling generalities about the Graeffe transform and the heuristic root finding method based on the tangent Graeffe transform from [12]. In section 3, we present the main new theoretical improvements, which all rely on optimizations in the FFT-model for fast polynomial arithmetic. Our contributions are threefold:

In the FFT-model, one backward transform out of four can be saved for Graeffe transforms of order two (see section 3.2).

When composing a large number of Graeffe transforms of order two,

FFT caching can be used to gain another factor of  (see section 3.3).

(see section 3.3).

All optimizations still apply in the TFT model, which can save a factor between one and two, depending on the number of roots (see section 3.5).

We also indicate how to generalize our methods to Graeffe transforms of general orders in section 3.4.

Section 4 is devoted to our new implementation. We first implemented a sequential version of the tangent Graeffe method in C, with the optimizations from sections 3.2 and 3.3. We also started experimenting with a parallel implementation in Cilk C. Our sequential implementation confirms the major performance improvement with respect to Cantor–Zassenhaus' algorithm. We also observed the gain of a new factor of two when using the new optimizations. So far, we have achieved a parallel speed-up by a factor of 4.6 on an 8-core machine. Our implementation is freely available at:

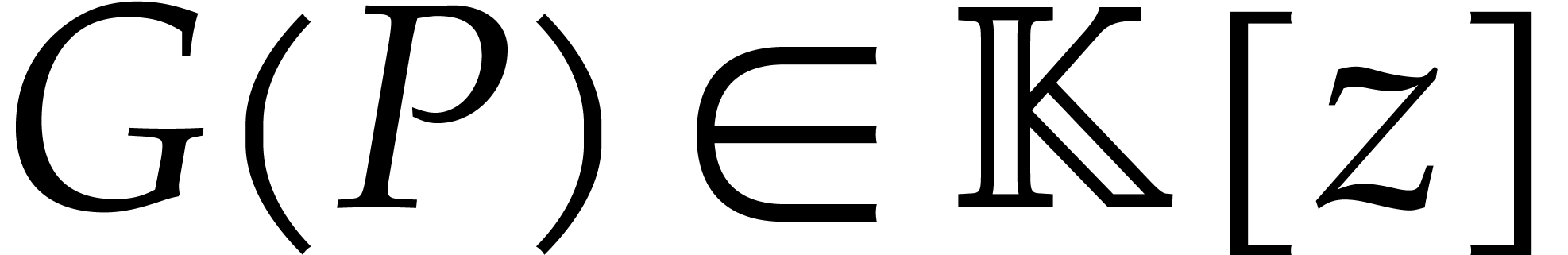

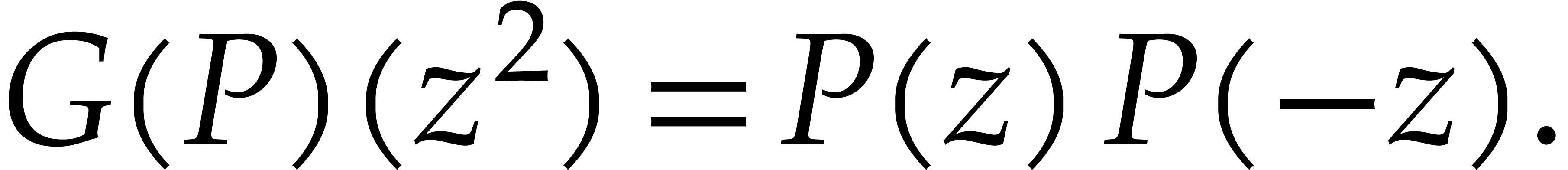

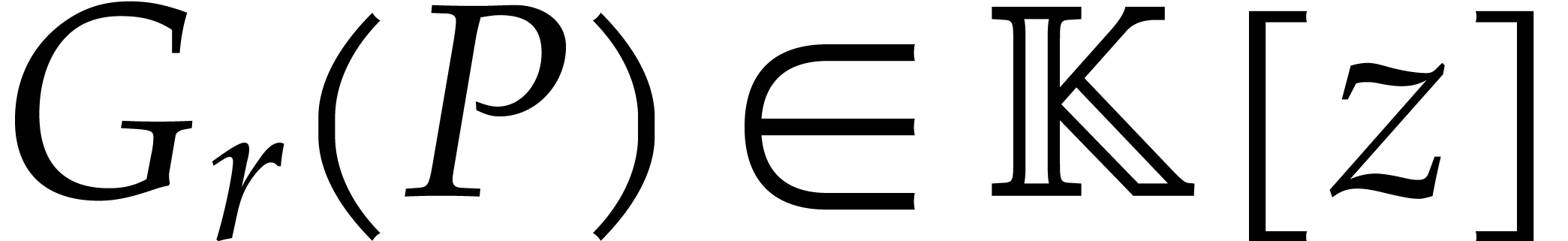

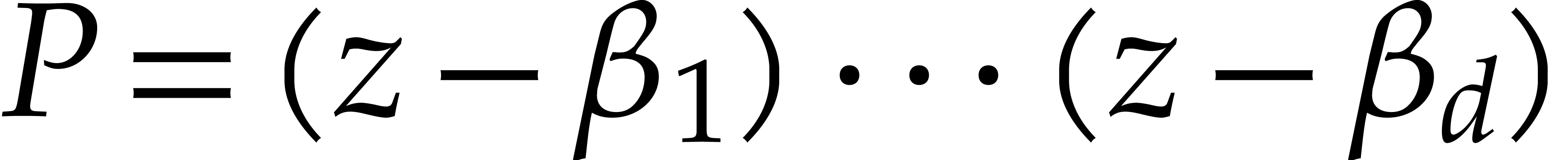

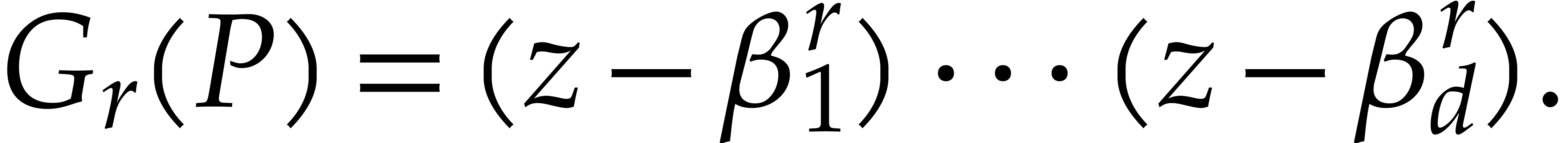

The traditional Graeffe transform of a monic polynomial  of degree

of degree  is the unique monic

polynomial

is the unique monic

polynomial  of degree

of degree  such that

such that

|

(2) |

If  splits over

splits over  into

linear factors

into

linear factors  , then one has

, then one has

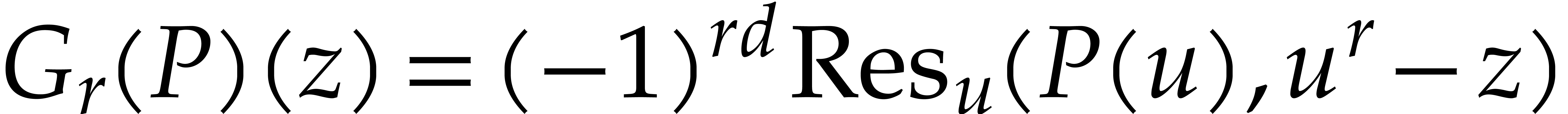

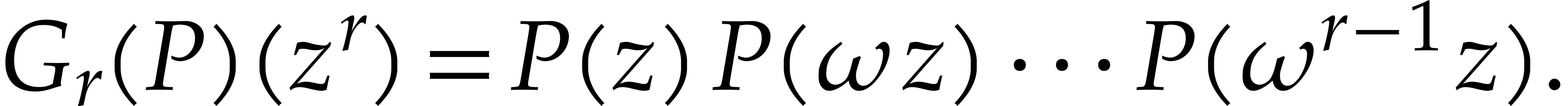

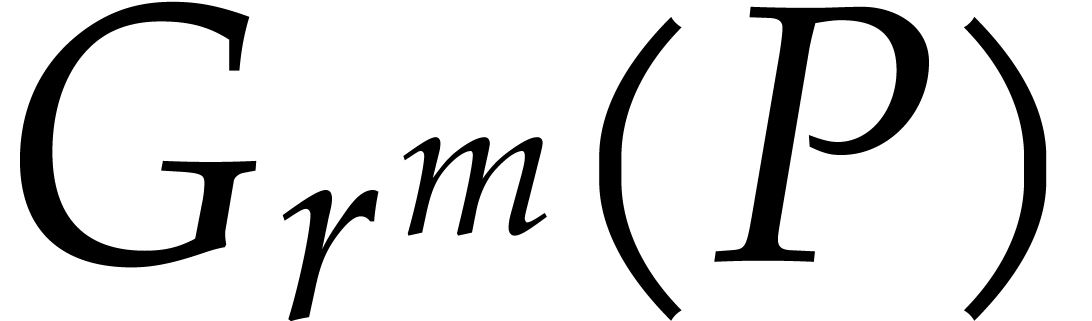

More generally, given  , we

define the Graeffe transform of order

, we

define the Graeffe transform of order  to be the unique monic polynomial

to be the unique monic polynomial  of degree

of degree  such that

such that

If  , then

, then

If  is a primitive

is a primitive  -th

root of unity in

-th

root of unity in  , then we

also have

, then we

also have

|

(3) |

If  , then we finally have

, then we finally have

|

(4) |

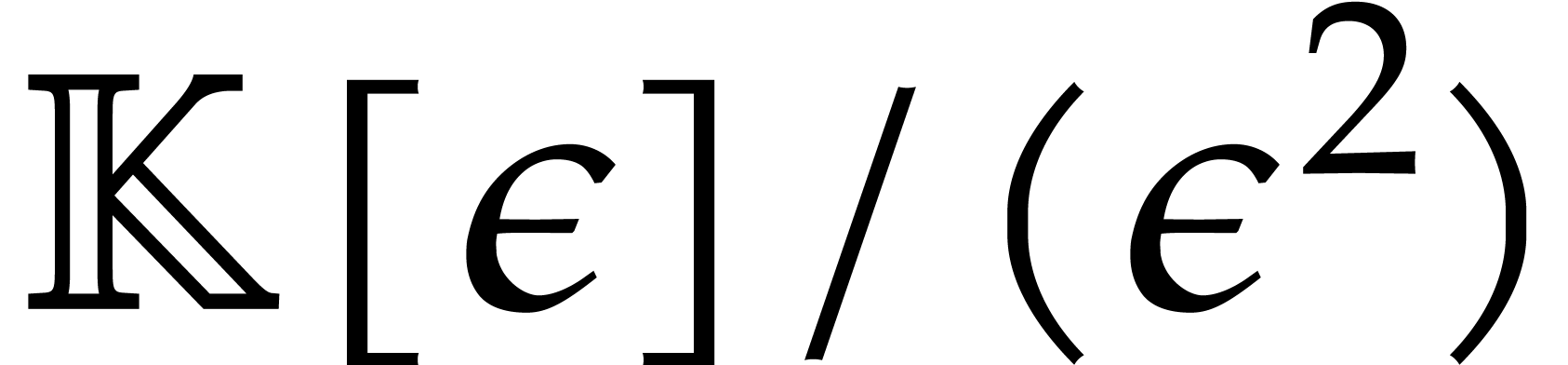

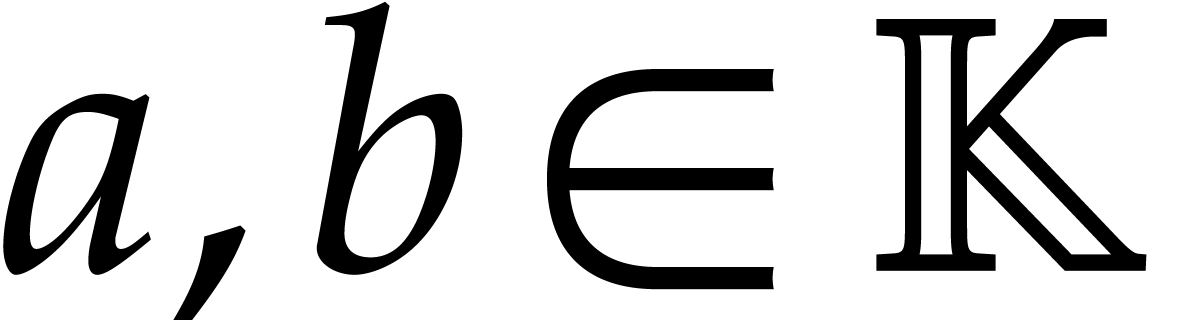

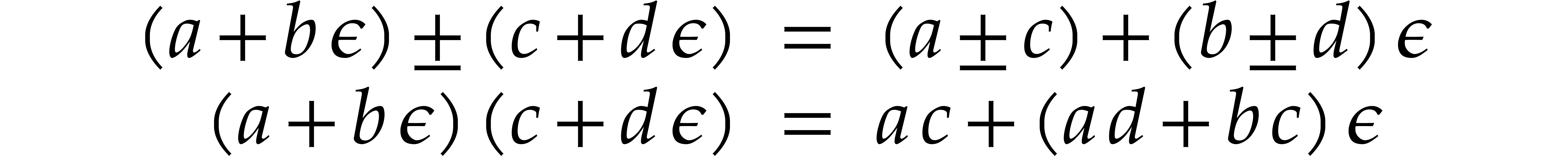

Let  be a formal indeterminate with

be a formal indeterminate with  . Elements in

. Elements in  are

called tangent numbers. They are of the form

are

called tangent numbers. They are of the form  with

with  and basic arithmetic operations go as

follows:

and basic arithmetic operations go as

follows:

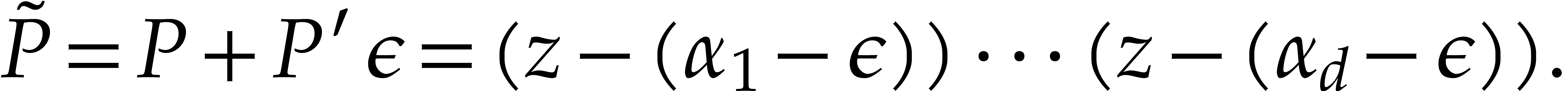

Now let  be a monic polynomial of degree

be a monic polynomial of degree  that splits over

that splits over  :

:

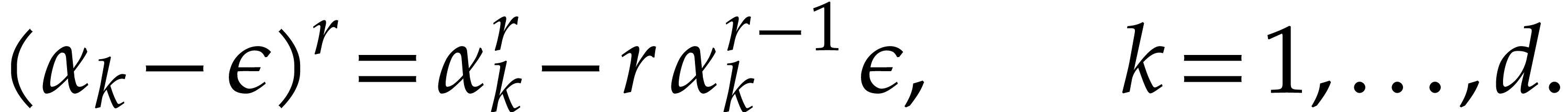

where  are pairwise distinct. Then the

tangent deformation

are pairwise distinct. Then the

tangent deformation  satisfies

satisfies

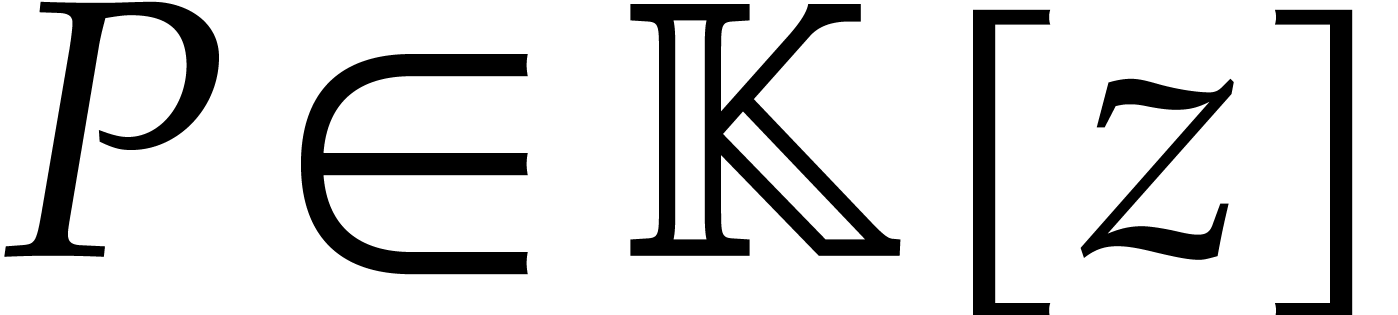

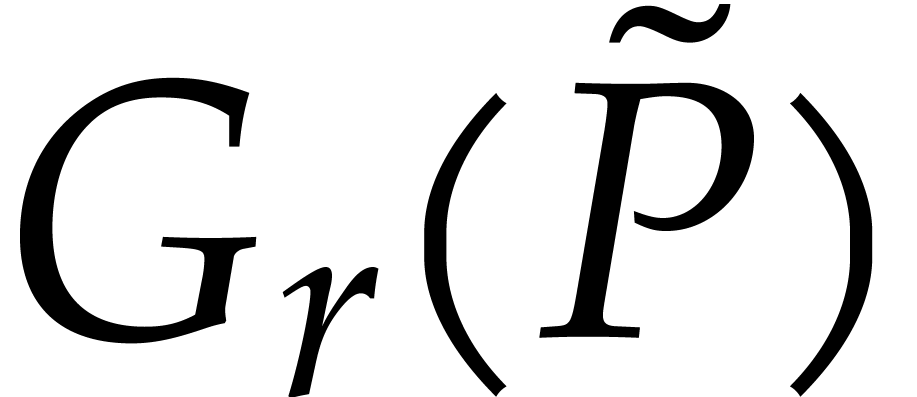

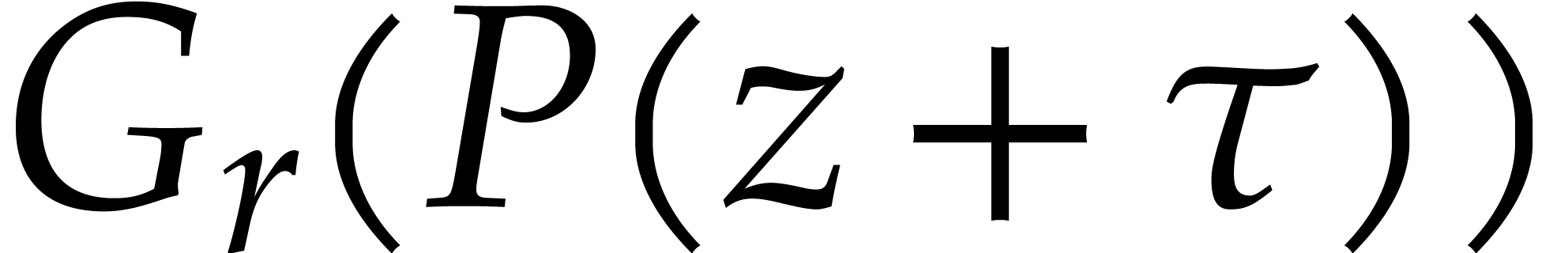

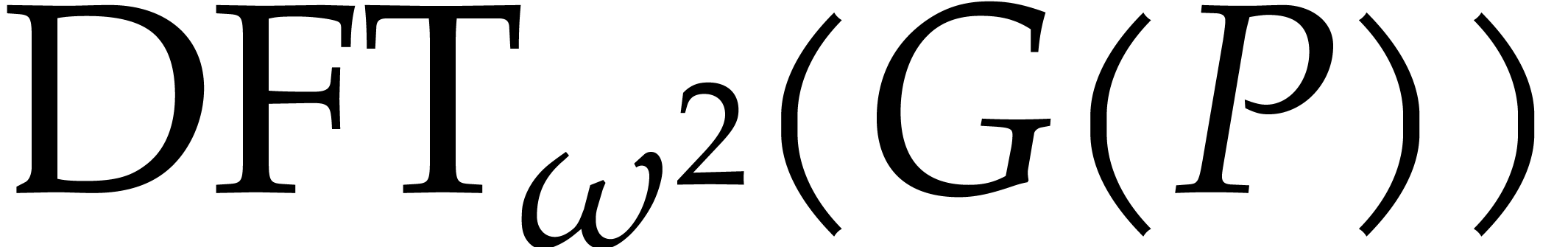

The definitions from the previous subsection readily extend to

coefficients in  instead of

instead of  . Given

. Given  ,

we call

,

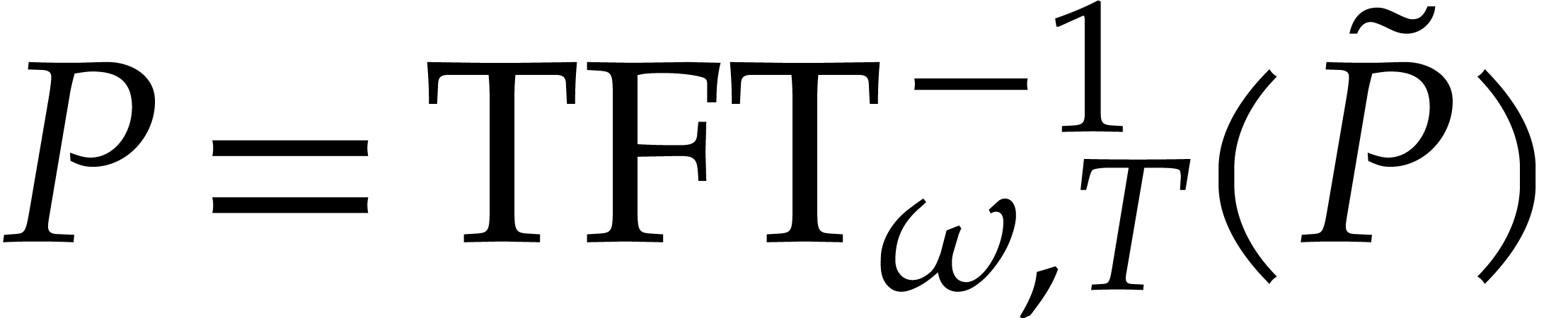

we call  the tangent Graeffe transform

of

the tangent Graeffe transform

of  of order

of order  .

We have

.

We have

where

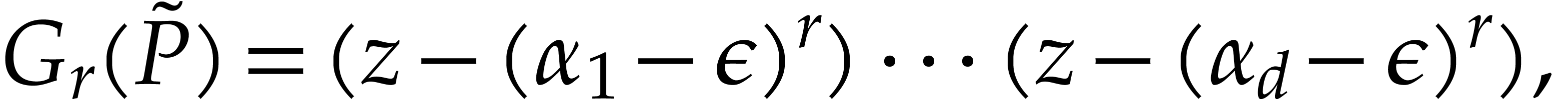

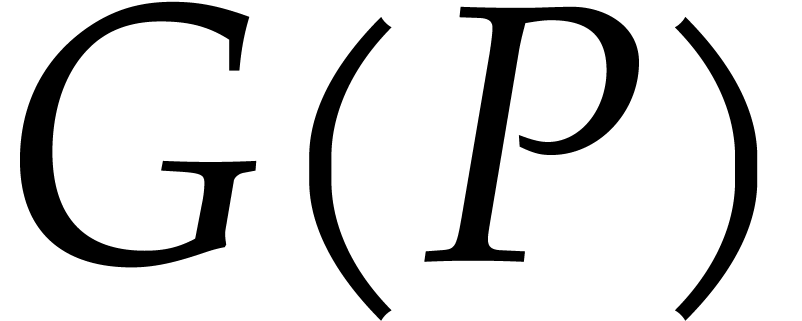

Now assume that we have an efficient way to determine the roots  of

of  . For

some polynomial

. For

some polynomial  , we may

decompose

, we may

decompose

For any root  of

of  ,

we then have

,

we then have

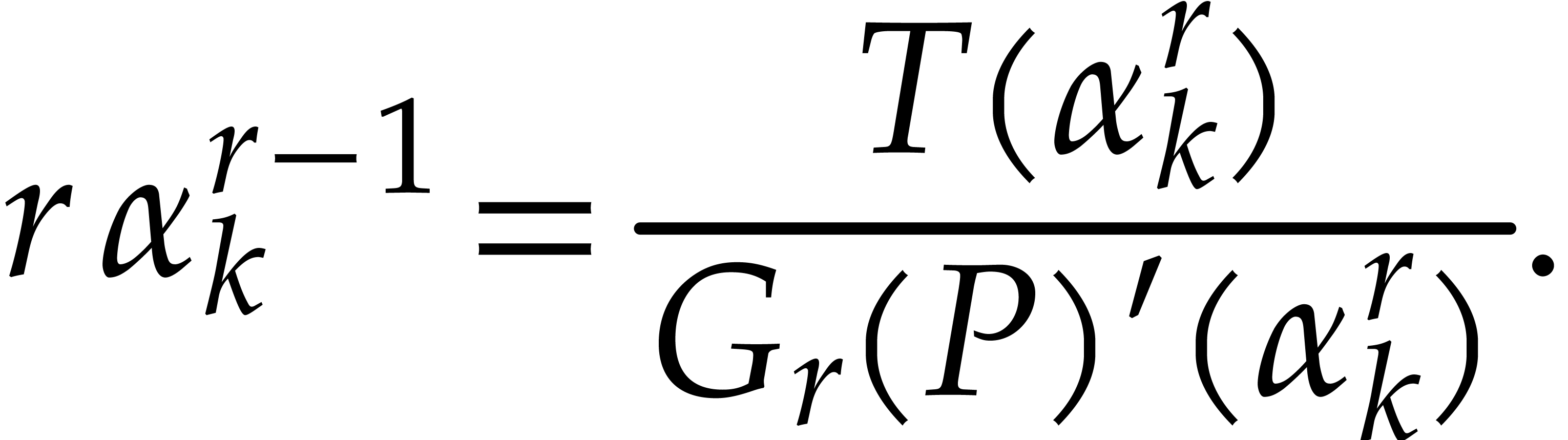

Whenever  happens to be a single root of

happens to be a single root of  , it follows that

, it follows that

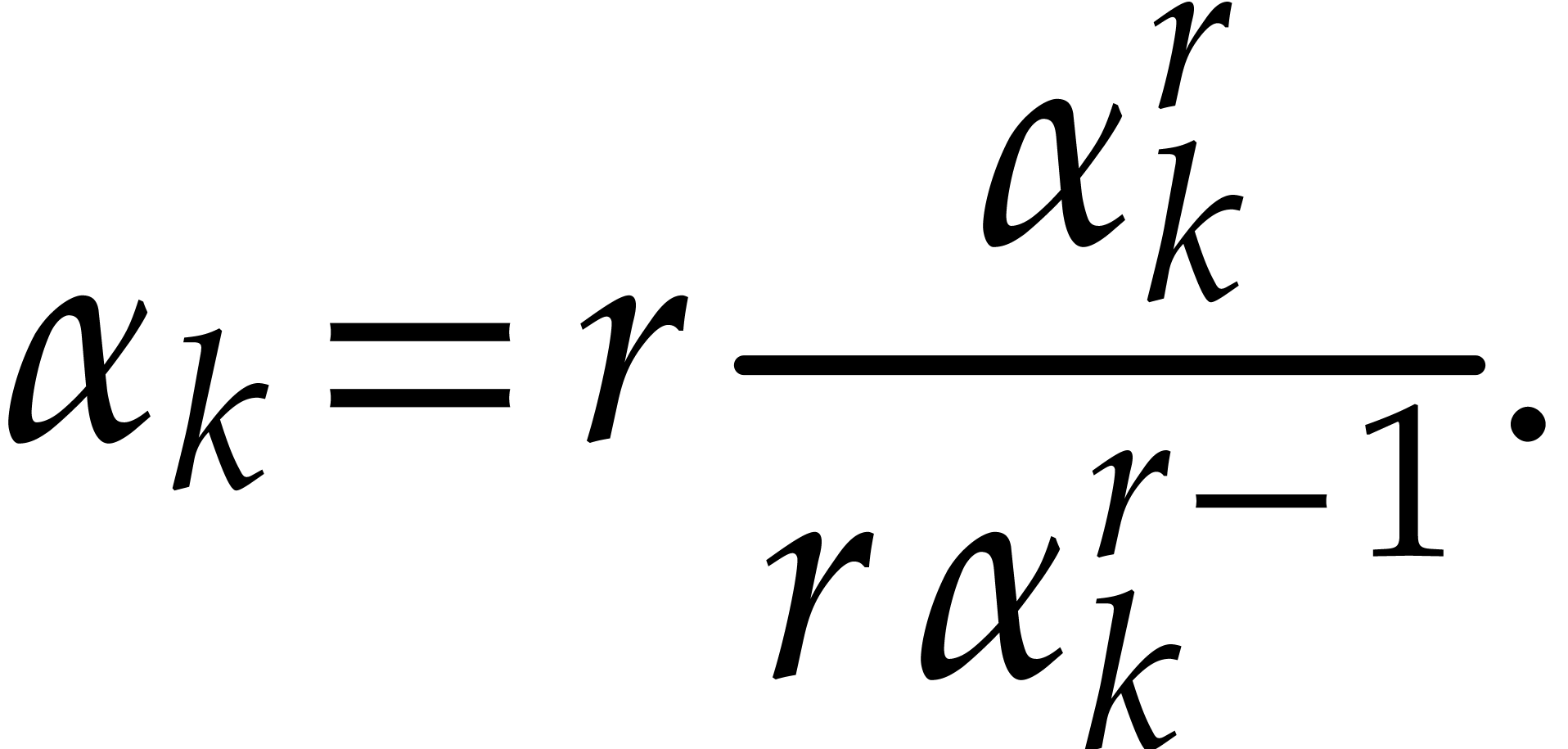

If  , this finally allows us

to recover

, this finally allows us

to recover  as

as

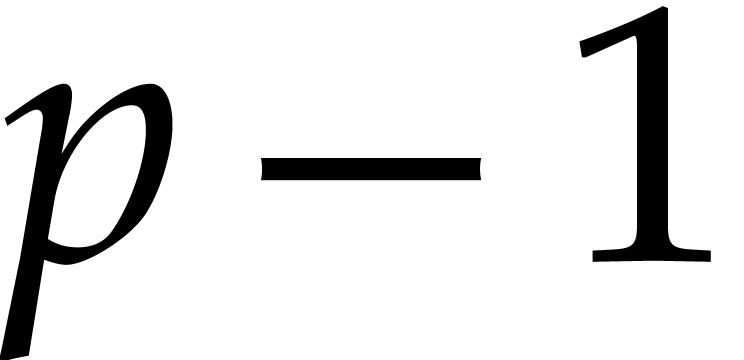

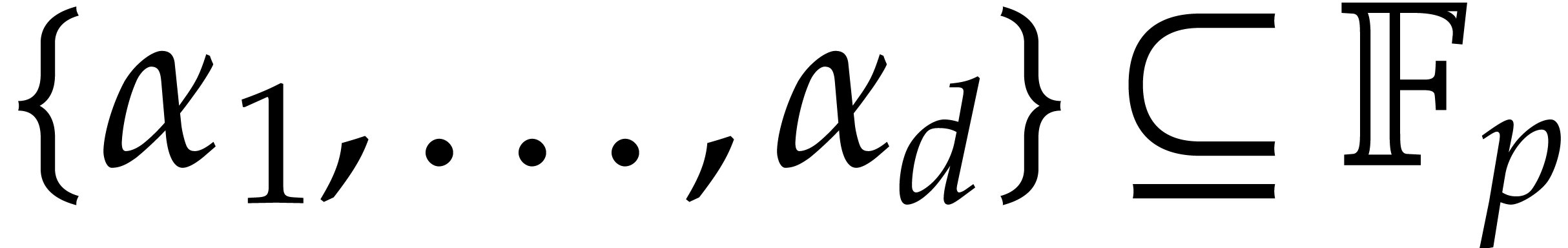

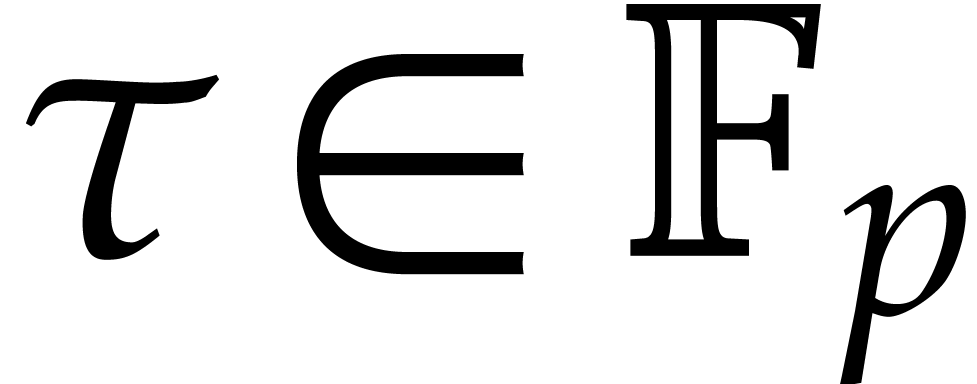

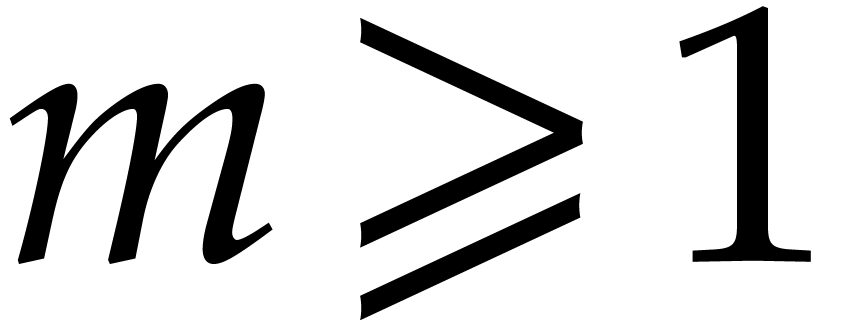

Assume now that  is a finite field, where

is a finite field, where  is a prime number of the form

is a prime number of the form  for some small

for some small  . Assume also

that

. Assume also

that  be a primitive element of order

be a primitive element of order  for the multiplicative group of

for the multiplicative group of  .

.

Let  be as in the previous subsection. The

tangent Graeffe method can be used to efficiently compute those

be as in the previous subsection. The

tangent Graeffe method can be used to efficiently compute those  of

of  for which

for which  is a single root of

is a single root of  . In

order to guarantee that there are a sufficient number of such roots, we

first replace

. In

order to guarantee that there are a sufficient number of such roots, we

first replace  by

by  for a

random shift

for a

random shift  , and use the

following heuristic:

, and use the

following heuristic:

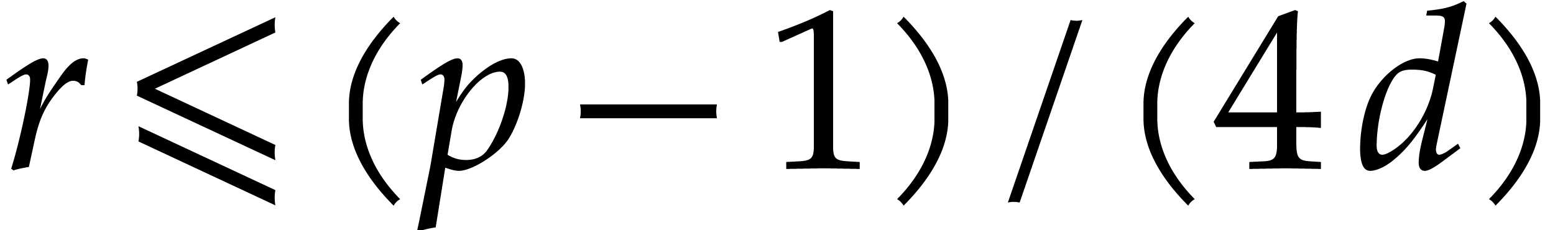

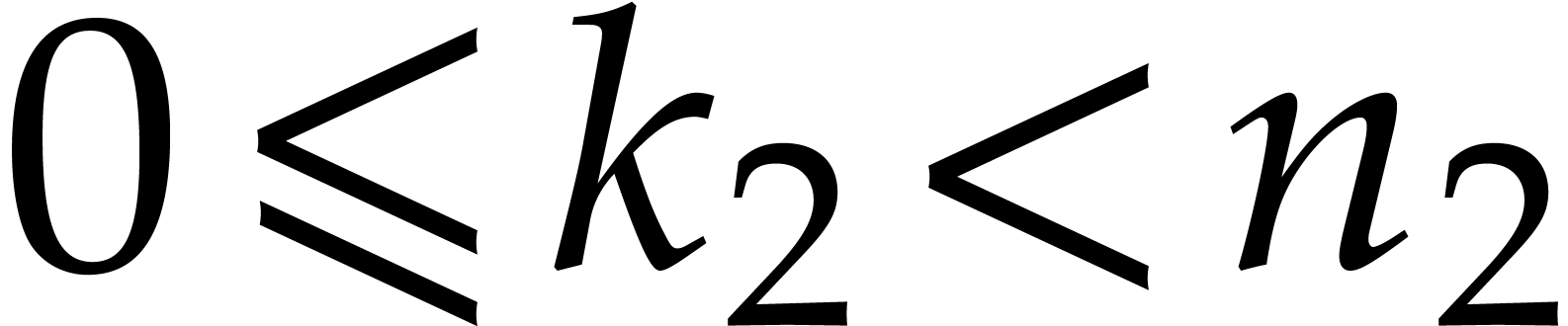

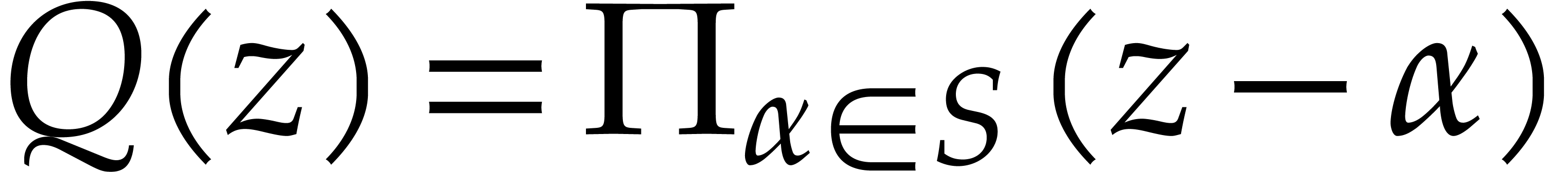

For any subset  of cardinality

of cardinality  and any

and any  ,

there exist at least

,

there exist at least  elements

elements  such that

such that  contains at least

contains at least

elements.

elements.

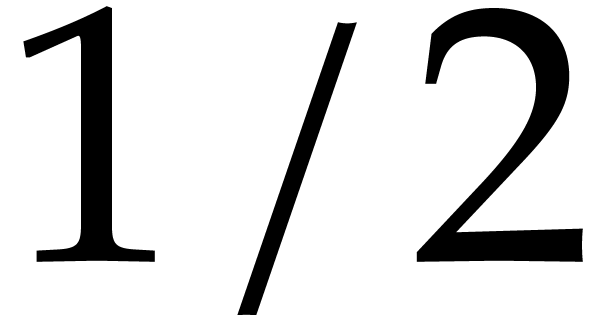

For a random shift  and any

and any  , the assumption ensures with probability at

least

, the assumption ensures with probability at

least  that

that  has at least

has at least

single roots.

single roots.

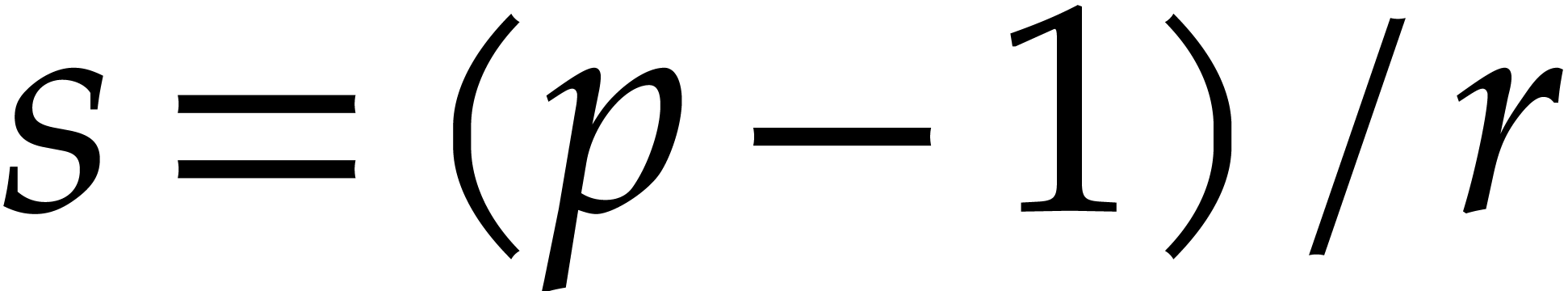

Now take  to be the largest power of two such

that

to be the largest power of two such

that  and let

and let  .

By construction, note that

.

By construction, note that  .

The roots

.

The roots  of

of  are all

are all

-th roots of unity in the set

-th roots of unity in the set

. We may thus determine them

by evaluating

. We may thus determine them

by evaluating  at

at  for

for

. Since

. Since  , this can be done efficiently using a discrete

Fourier transform. Combined with the tangent Graeffe method from the

previous subsection, this leads to the following probabilistic algorithm

for root finding:

, this can be done efficiently using a discrete

Fourier transform. Combined with the tangent Graeffe method from the

previous subsection, this leads to the following probabilistic algorithm

for root finding:

Remark  we may use

we may use  ,

which requires three polynomial multiplications in

,

which requires three polynomial multiplications in  of degree

of degree  . In total, step 5

therefore performs

. In total, step 5

therefore performs  such multiplications. We

discuss how to perform step 5 efficiently in the FFT model

in section 3.

such multiplications. We

discuss how to perform step 5 efficiently in the FFT model

in section 3.

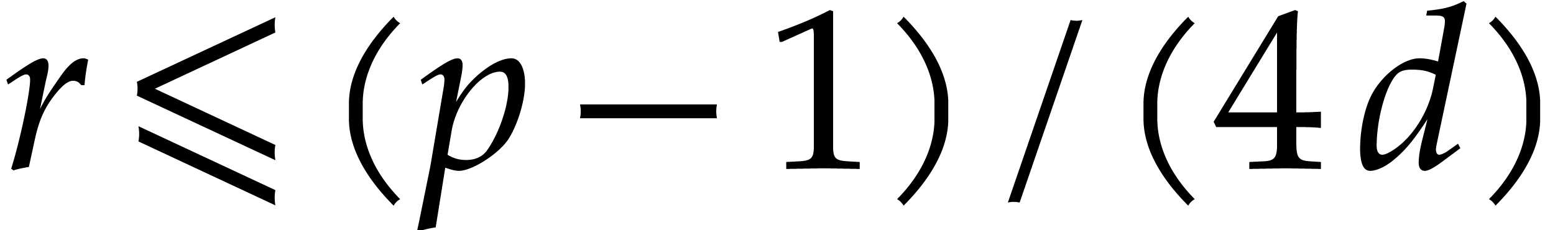

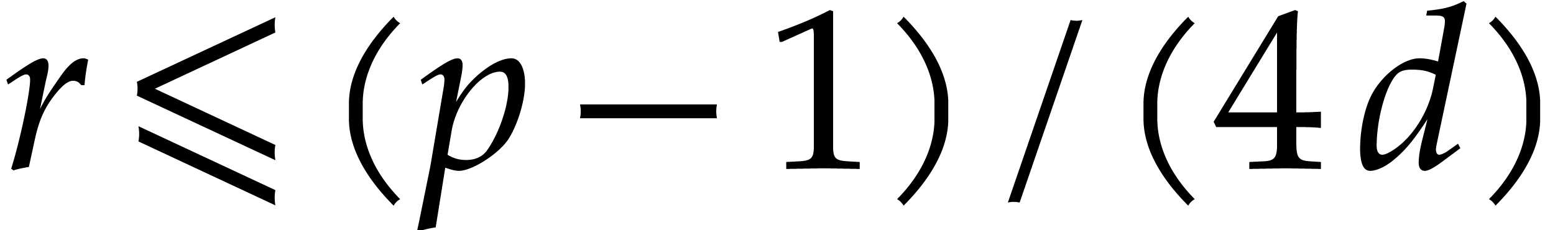

Remark  for

for

and the resulting threshold

and the resulting threshold  for

for  . For larger values of

. For larger values of

, the computations of the

DFTs in step 6 get more expensive, but the proportion of

single roots goes up, so more roots are determined at each iteration.

From an asymptotic complexity perspective, it would be best to take

, the computations of the

DFTs in step 6 get more expensive, but the proportion of

single roots goes up, so more roots are determined at each iteration.

From an asymptotic complexity perspective, it would be best to take

. In practice, we actually

preferred to take the lower threshold

. In practice, we actually

preferred to take the lower threshold  , because the constant factor of our implementation

of step 6 (based on Bluestein's algorithm [3])

is significant with respect to our highly optimized implementation of

the tangent Graeffe method. A second reason we prefer

, because the constant factor of our implementation

of step 6 (based on Bluestein's algorithm [3])

is significant with respect to our highly optimized implementation of

the tangent Graeffe method. A second reason we prefer  of size

of size  instead of

instead of  is

that the total space used by the algorithm is linear in

is

that the total space used by the algorithm is linear in  . In the future, it would be interesting to

further speed up step 6 by investing more time in the

implementation of high performance DFTs of general orders

. In the future, it would be interesting to

further speed up step 6 by investing more time in the

implementation of high performance DFTs of general orders  .

.

Remark  terms, the idea is to take

terms, the idea is to take  and

and  of order

of order  instead of

instead of  for

some constant

for

some constant  with

with  .

This reduces

.

This reduces  (and the cost of the top-level

iteration) by a factor of

(and the cost of the top-level

iteration) by a factor of  .

For the recursive calls, we still need to work with a primitive root of

unity

.

For the recursive calls, we still need to work with a primitive root of

unity  of order

of order  and

random shifts.

and

random shifts.

Assume that  is invertible in

is invertible in  and let

and let  be a primitive

be a primitive  -th root of unity. Consider a polynomial

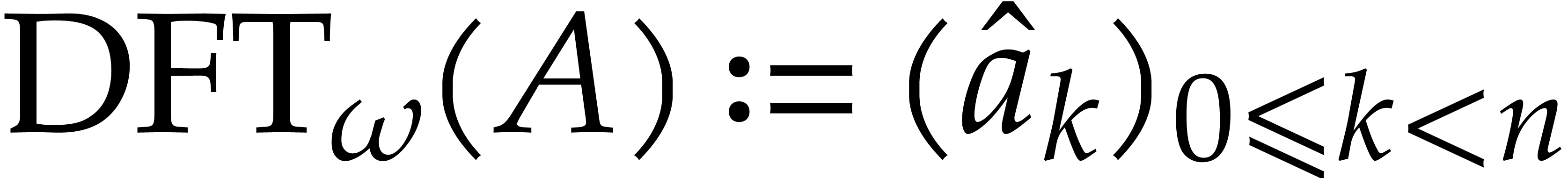

-th root of unity. Consider a polynomial  . Then the discrete Fourier

transform (DFT) of order

. Then the discrete Fourier

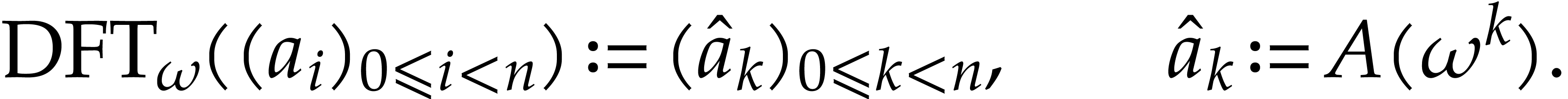

transform (DFT) of order  of the sequence

of the sequence  is defined by

is defined by

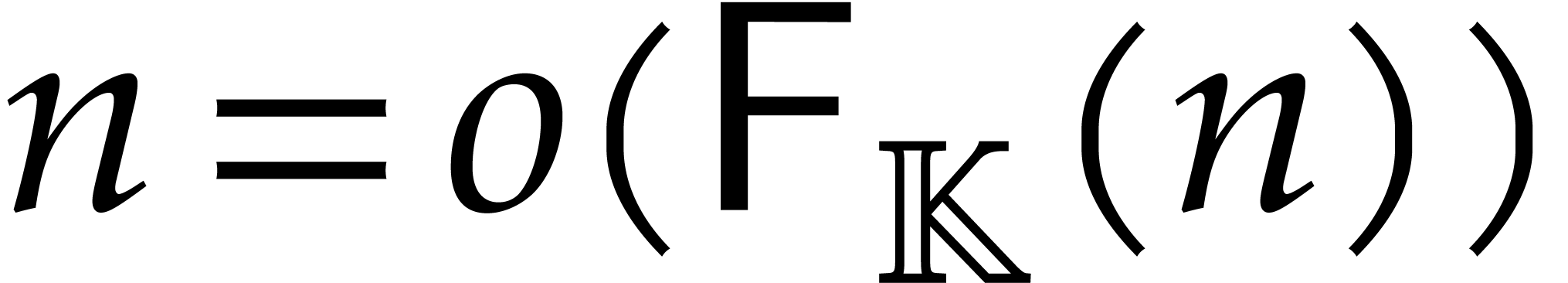

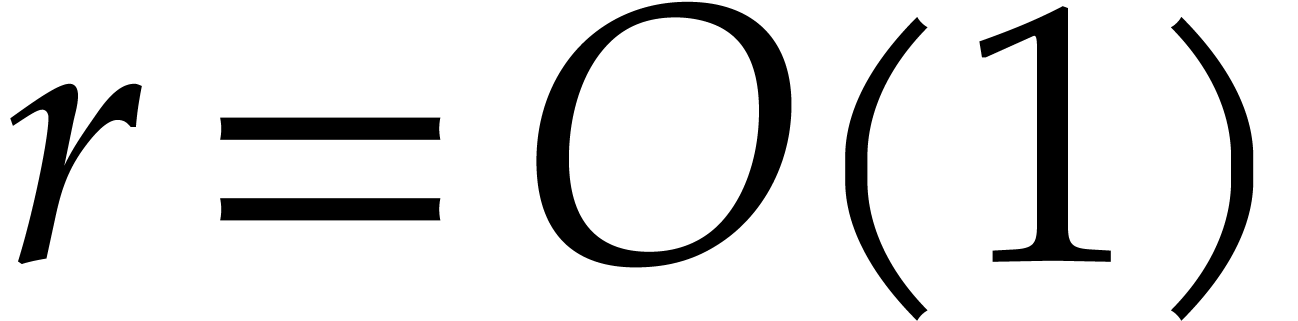

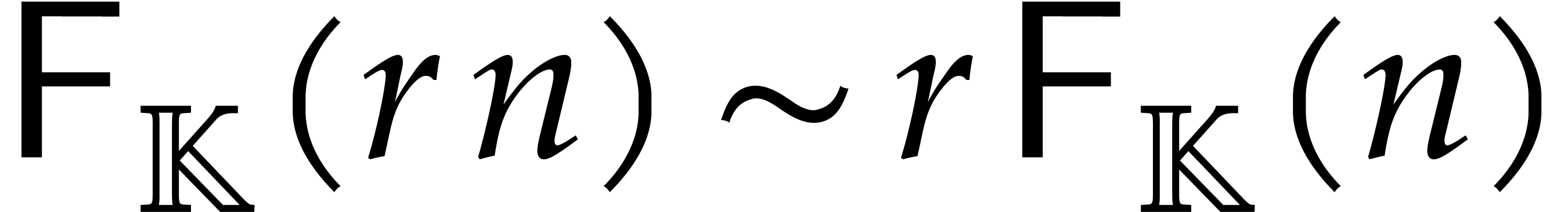

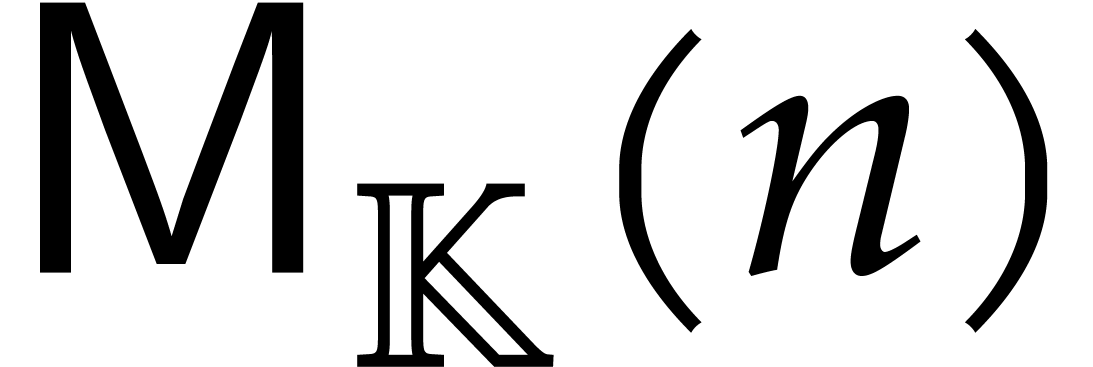

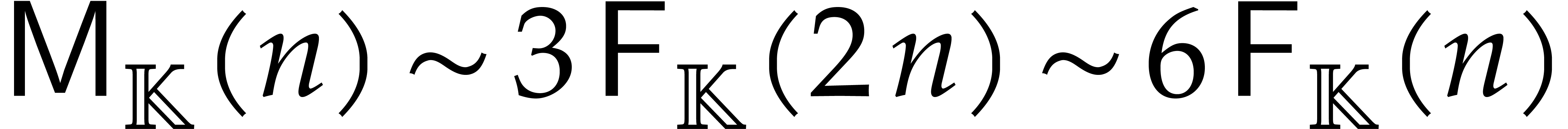

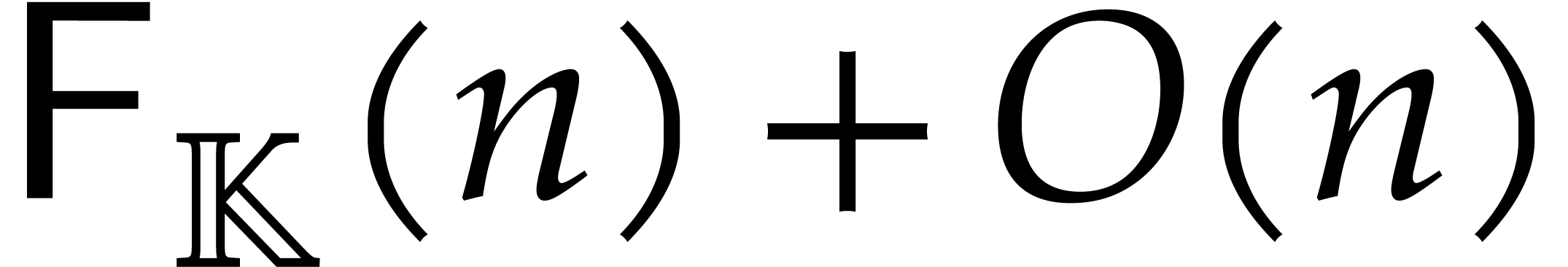

We will write  for the cost of one discrete

Fourier transform in terms of the number of operations in

for the cost of one discrete

Fourier transform in terms of the number of operations in  and assume that

and assume that  .

For any

.

For any  , we have

, we have

|

(5) |

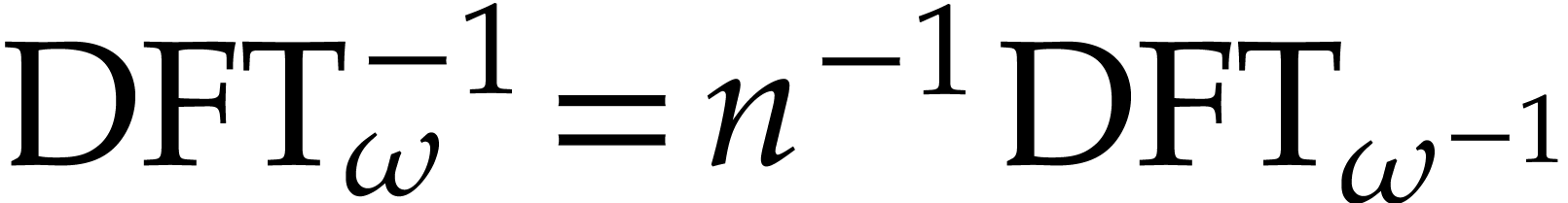

If  is invertible in

is invertible in  , then it follows that

, then it follows that  .

The costs of direct and inverse transforms therefore coincide up to a

factor

.

The costs of direct and inverse transforms therefore coincide up to a

factor  .

.

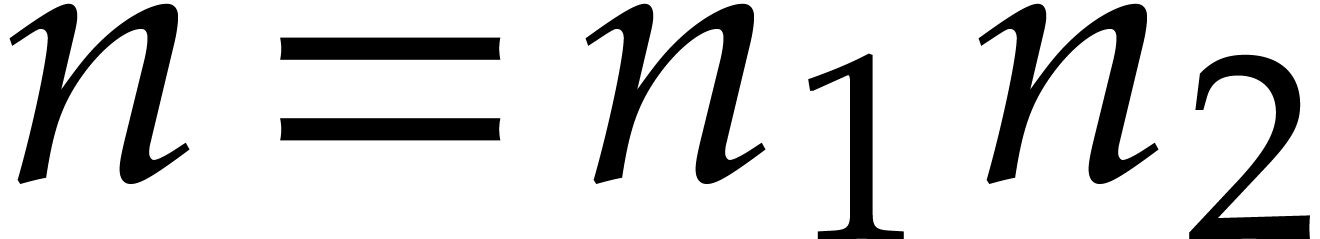

If  is composite,

is composite,  ,

and

,

and  , then we have

, then we have

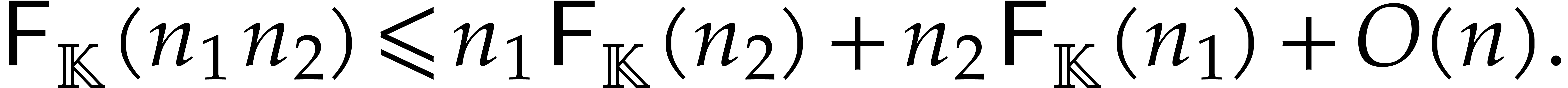

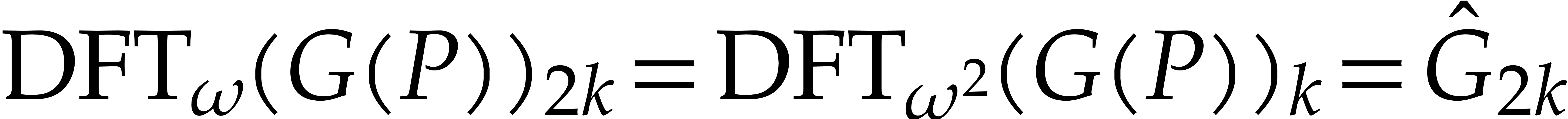

This shows that a DFT of length  reduces to

reduces to  transforms of length

transforms of length  plus

plus

transforms of length

transforms of length  plus

plus  multiplications in

multiplications in  :

:

In particular, if  , then

, then

.

.

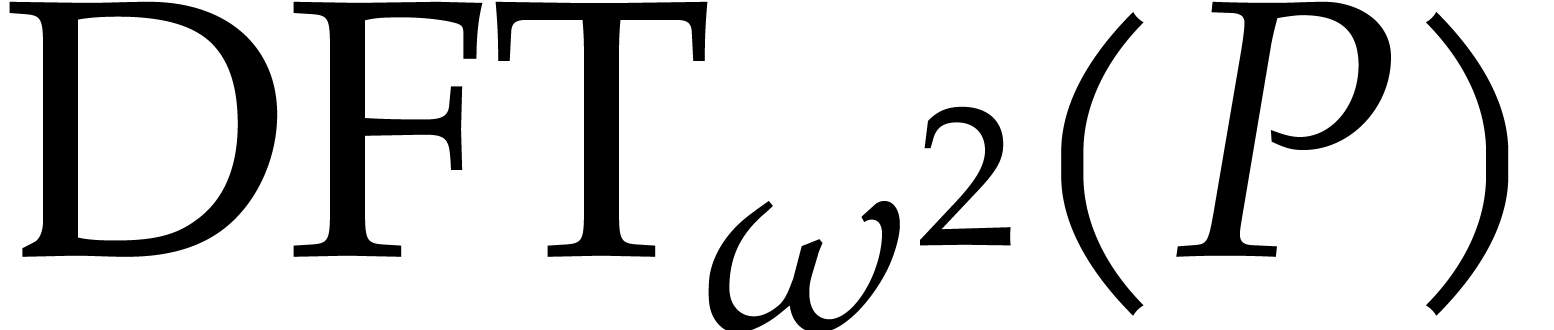

It is sometimes convenient to apply DFTs directly to polynomials as

well; for this reason, we also define  .

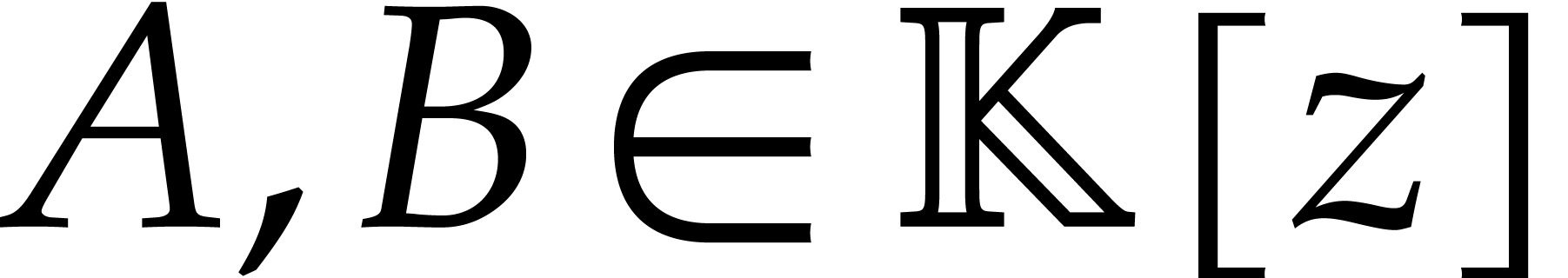

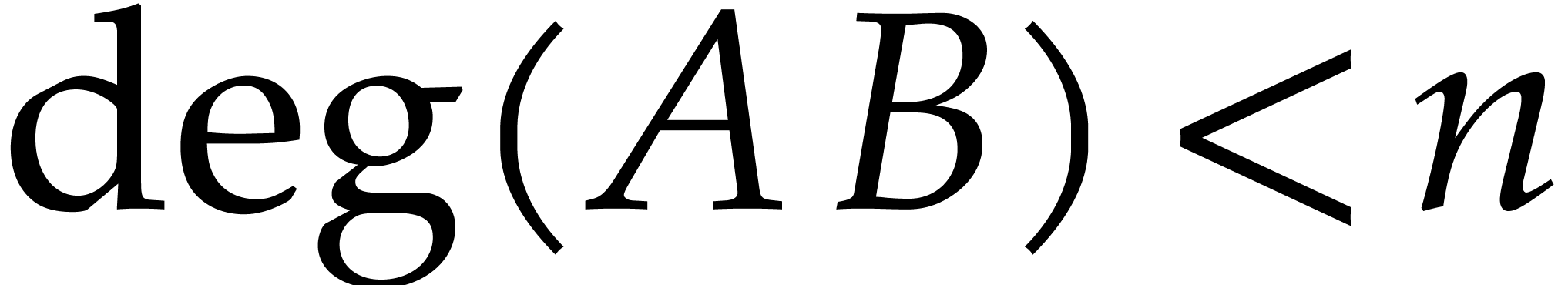

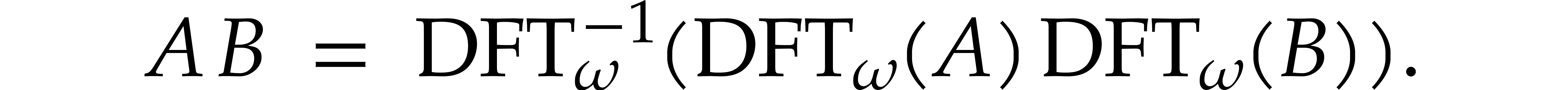

Given two polynomials

.

Given two polynomials  with

with  , we may then compute the product

, we may then compute the product  using

using

In particular, if  denotes the cost of

multiplying two polynomials of degree

denotes the cost of

multiplying two polynomials of degree  ,

then we obtain

,

then we obtain  .

.

Remark  .

Since

.

Since  is a power of two, this length is of the

form

is a power of two, this length is of the

form  for some

for some  .

In view of (6), we may therefore reduce step 6

to

.

In view of (6), we may therefore reduce step 6

to  DFTs of length

DFTs of length  plus

plus

DFTs of length

DFTs of length  .

If

.

If  is very small, then we may use a naive

implementation for DFTs of length

is very small, then we may use a naive

implementation for DFTs of length  .

In general, one may use Bluestein's algorithm [3] to reduce

the computation of a DFT of length

.

In general, one may use Bluestein's algorithm [3] to reduce

the computation of a DFT of length  into the

computation of a product in

into the

computation of a product in  ,

which can in turn be computed using FFT-multiplication and three DFTs of

length a larger power of two.

,

which can in turn be computed using FFT-multiplication and three DFTs of

length a larger power of two.

Let  be a field with a primitive

be a field with a primitive  -th root of unity

-th root of unity  .

Let

.

Let  be a polynomial of degree

be a polynomial of degree  . Then the relation (2) yields

. Then the relation (2) yields

|

(7) |

For any  , we further note

that

, we further note

that

|

(8) |

so  can be obtained from

can be obtained from  using

using  transpositions of elements in

transpositions of elements in  . Concerning the inverse transform, we also

note that

. Concerning the inverse transform, we also

note that

for  . Plugging this into (7), we conclude that

. Plugging this into (7), we conclude that

This leads to the following algorithm for the computation of  :

:

Algorithm

|

||||

|

be a primitive

be a primitive  -th root of unity in

-th root of unity in  and assume that

and assume that  is invertible in

is invertible in  . Given a monic polynomial

. Given a monic polynomial  with

with  , we can compute

, we can compute  in time

in time

Proof. We have already explained the correctness

of Algorithm 2. Step 1 requires one forward DFT of length

and cost

and cost  .

Steps 2 and 3 can be done in linear time

.

Steps 2 and 3 can be done in linear time  .

Step 4 requires one inverse DFT of length

.

Step 4 requires one inverse DFT of length  and

cost

and

cost  . The total cost of

Algorithm 2 is therefore

. The total cost of

Algorithm 2 is therefore  .

.

Remark  This

gives a

This

gives a  improvement over the previously best

known bound

improvement over the previously best

known bound  that was used in [12].

Note that the best known algorithm for computing squares of polynomials

of degree

that was used in [12].

Note that the best known algorithm for computing squares of polynomials

of degree  is

is  .

It would be interesting to know whether squares can also be computed in

time

.

It would be interesting to know whether squares can also be computed in

time  .

.

In view of (4), Graeffe transforms of power of two orders

can be computed using

can be computed using

|

(9) |

Now assume that we computed the first Graeffe transform  using Algorithm 2 and that we wish to apply a second

Graeffe transform to the result. Then we note that

using Algorithm 2 and that we wish to apply a second

Graeffe transform to the result. Then we note that

|

(10) |

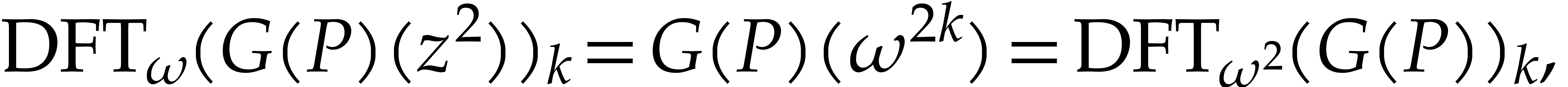

is already known for  . We can

use this to accelerate step 1 of the second application of Algorithm 2. Indeed, in view of (6) for

. We can

use this to accelerate step 1 of the second application of Algorithm 2. Indeed, in view of (6) for  and

and  , we have

, we have

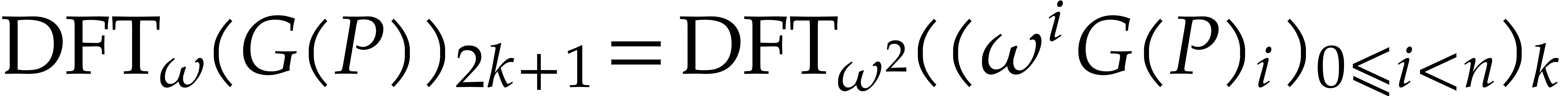

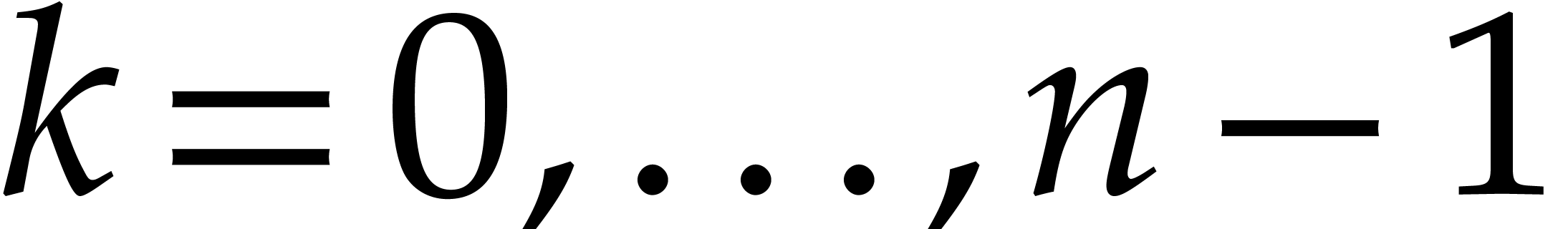

|

(11) |

for  . In order to exploit

this idea in a recursive fashion, it is useful to modify Algorithm 2 so as to include

. In order to exploit

this idea in a recursive fashion, it is useful to modify Algorithm 2 so as to include  in the input and

in the input and

in the output. This leads to the following

algorithm:

in the output. This leads to the following

algorithm:

Algorithm

|

||||||

|

be a primitive

be a primitive  -th root of unity in

-th root of unity in  and assume that

and assume that  is invertible in

is invertible in

. Given a monic polynomial

. Given a monic polynomial

with

with  and

and  , we can compute

, we can compute  in

time

in

time

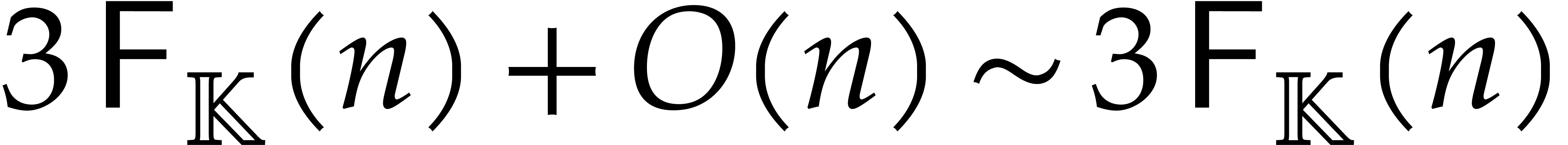

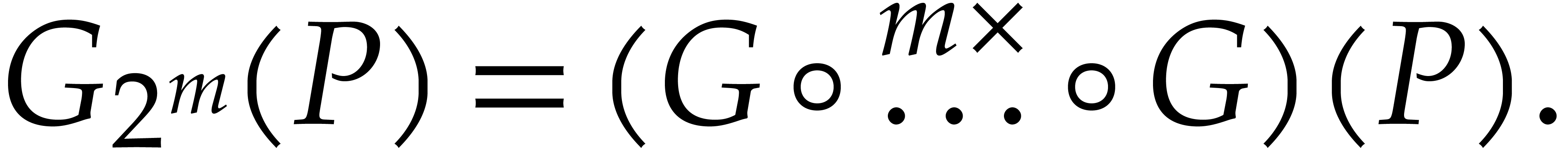

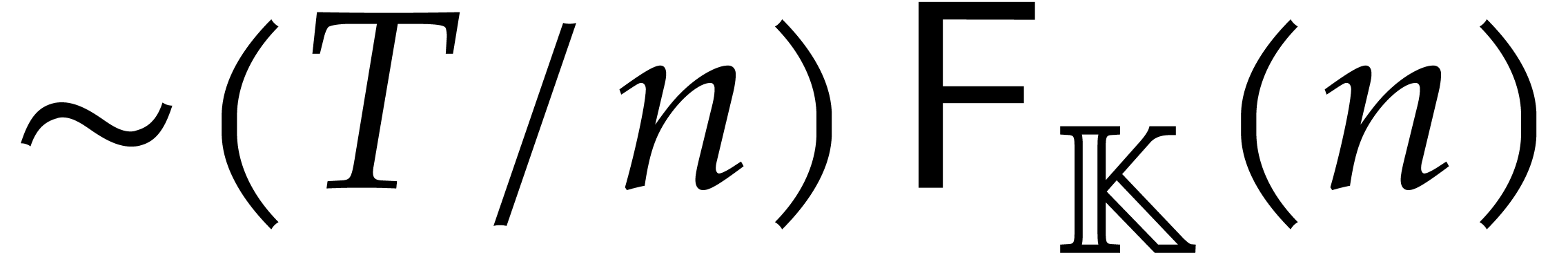

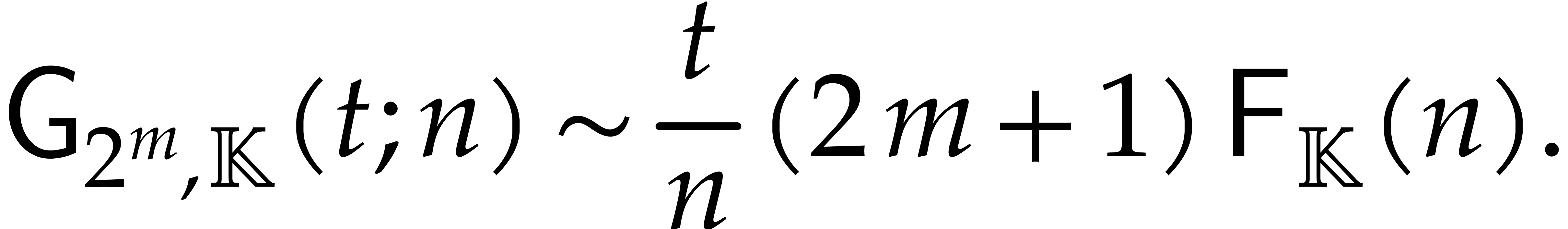

Proof. It suffices to compute  and then to apply Algorithm 3 recursively,

and then to apply Algorithm 3 recursively,  times. Every application of Algorithm 3 now takes

times. Every application of Algorithm 3 now takes  operations in

operations in  ,

whence the claimed complexity bound.

,

whence the claimed complexity bound.

Remark  were directly

computed using the formula (9), using

were directly

computed using the formula (9), using  operations in

operations in  . The new

algorithm is twice as fast for large

. The new

algorithm is twice as fast for large  .

.

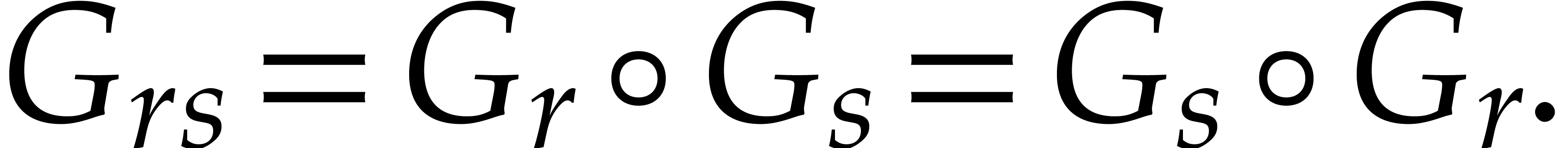

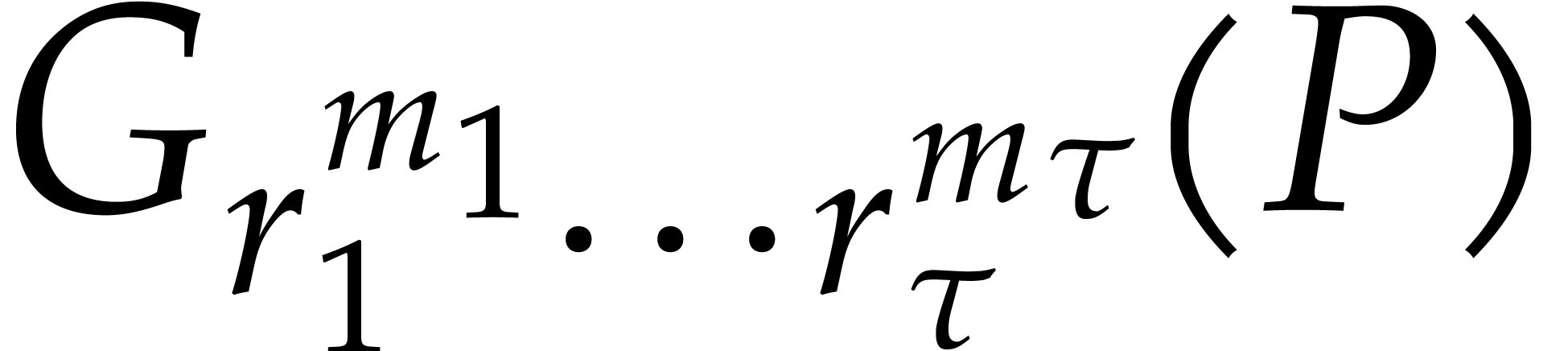

The algorithms from subsections 3.2 and 3.3

readily generalize to Graeffe transforms of order  for arbitrary

for arbitrary  , provided that

we have an

, provided that

we have an  -th root of unity

-th root of unity

. For convenience of the

reader, we specified the generalization of Algorithm 3

below, together with the resulting complexity bounds.

. For convenience of the

reader, we specified the generalization of Algorithm 3

below, together with the resulting complexity bounds.

Algorithm

|

||||||

|

be a primitive

be a primitive  -th root of unity in

-th root of unity in  , where

, where  is invertible in

is invertible in

. Given a monic polynomial

. Given a monic polynomial

with

with  and

and  , we can compute

, we can compute  in

time

in

time

Proof. Straightforward generalization of

Proposition 7.

be a primitive

be a primitive  -th root of unity in

-th root of unity in  , where

, where  are invertible in

are invertible in

. Given a monic polynomial

. Given a monic polynomial

with

with  and

and  , we can compute

, we can compute  in

time

in

time

Proof. Direct consequence of (4).

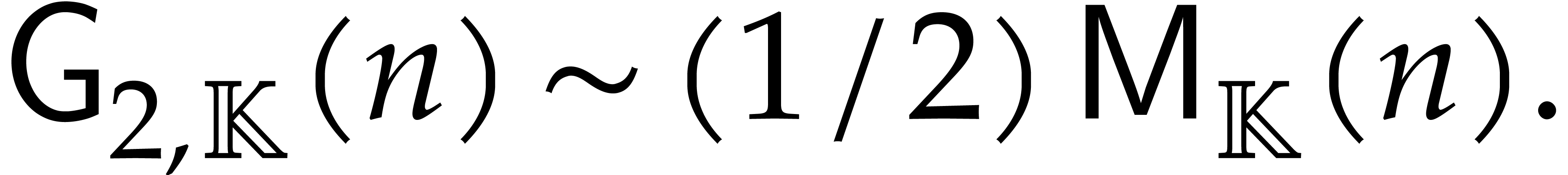

Remark  . In terms of the size

. In terms of the size  of

of

, it is instructive to

observe that the “average cost”

, it is instructive to

observe that the “average cost”

is minimal for  . Whenever

radix-3 DFTs can be implemented as efficiently as radix-2 DFTs, then it

follows that it might be interesting to use Graeffe transforms of order

three.

. Whenever

radix-3 DFTs can be implemented as efficiently as radix-2 DFTs, then it

follows that it might be interesting to use Graeffe transforms of order

three.

If  is a fixed finite field, then DFTs are most

efficient for sizes

is a fixed finite field, then DFTs are most

efficient for sizes  that divide

that divide  . For our root finding application, it is often

convenient to take

. For our root finding application, it is often

convenient to take  , in which

case

, in which

case  should be a power of two or three times a

power of two. The truncated Fourier transform was developed for the

multiplication of polynomials such that the degree of the product does

not have a nice size

should be a power of two or three times a

power of two. The truncated Fourier transform was developed for the

multiplication of polynomials such that the degree of the product does

not have a nice size  of this type. It turns out

that we may also use it for the efficient computation of Graeffe

transforms of polynomials of arbitrary degrees. Moreover, the

optimizations from the previous subsections still apply.

of this type. It turns out

that we may also use it for the efficient computation of Graeffe

transforms of polynomials of arbitrary degrees. Moreover, the

optimizations from the previous subsections still apply.

Let us briefly describe how the truncated Fourier transform can be used

for the computation of Graeffe transforms of power of two orders. With

the notations from subsections 3.2 and 3.3, we

assume that  is a power of two as well and that

we wish to compute the Graeffe transform of a polynomial

is a power of two as well and that

we wish to compute the Graeffe transform of a polynomial  of degree

of degree  with

with  . Let

. Let  denote the

reversal of a binary number

denote the

reversal of a binary number  of

of  bits. For instance,

bits. For instance,  and

and  . Then the truncated Fourier of

. Then the truncated Fourier of  at order

at order  is defined by

is defined by

It has been shown in [14] that  and

and

can both be computed in time

can both be computed in time  . For generalizations to arbitrary radices, we

refer to [25].

. For generalizations to arbitrary radices, we

refer to [25].

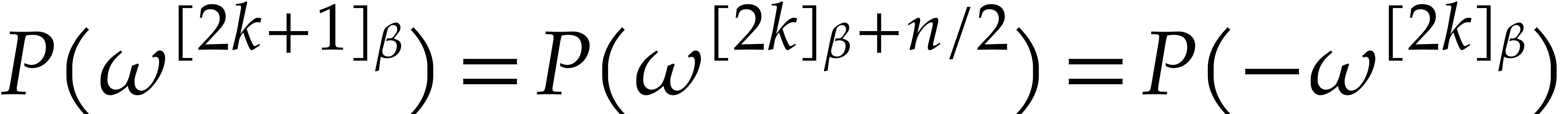

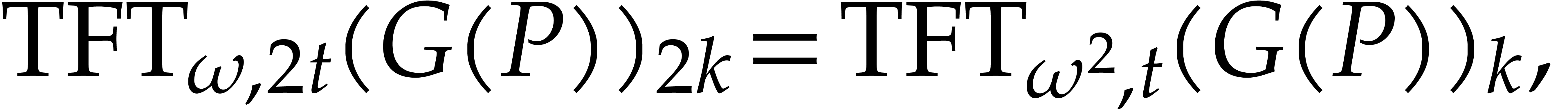

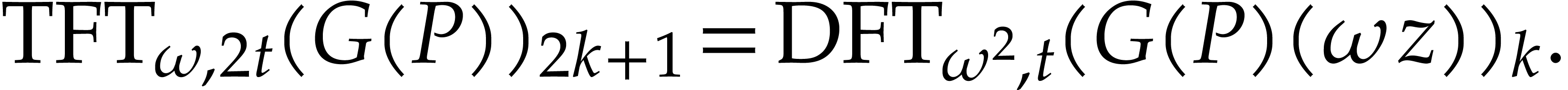

Taking  , we notice that

, we notice that

for  . This provides us with

the required counterpart of (8) for retrieving

. This provides us with

the required counterpart of (8) for retrieving  efficiently from

efficiently from  .

The relation (10) also has a natural counterpart:

.

The relation (10) also has a natural counterpart:

for  , and so does (11):

, and so does (11):

This leads to the following refinement of Algorithm 3:

Algorithm

|

||||||

|

be a primitive

be a primitive  -th root of unity in

-th root of unity in  , where

, where  ,

and assume that

,

and assume that  is invertible in

is invertible in  . Given a monic polynomial

. Given a monic polynomial  with

with  and

and  ,

we can compute

,

we can compute  in time

in time

Proof. Straightforward adaptation of the proof

of Proposition 7, while using [14].

In step 3 of Algorithm 1, we still need an

algorithm to compute the Taylor shift  .

If the characteristic of

.

If the characteristic of  exceeds

exceeds  , then it is (not so) well known [1,

Lemma 3] that this can be reduced to a single polynomial multiplication

of degree

, then it is (not so) well known [1,

Lemma 3] that this can be reduced to a single polynomial multiplication

of degree  using the following algorithm:

using the following algorithm:

It is interesting to observe that Taylor shifts can still be computed in

time  in small characteristic, as follows:

in small characteristic, as follows:

We have implemented the tangent Graeffe root finding algorithm

(Algorithm 1) in C with the optimizations presented in

section 3. Our C implementation supports primes of size up

to 63 bits. In what follows all complexities count arithmetic operations

in  .

.

In Tables 1 and 2, the input polynomial  of degree

of degree  is constructed by

choosing

is constructed by

choosing  distinct values

distinct values  for

for  at random and creating

at random and creating  . We will use

. We will use  ,

a smooth 63 bit prime. For this prime

,

a smooth 63 bit prime. For this prime  is

is  .

.

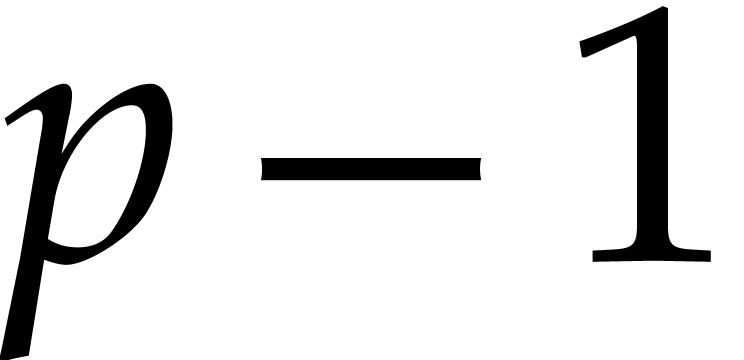

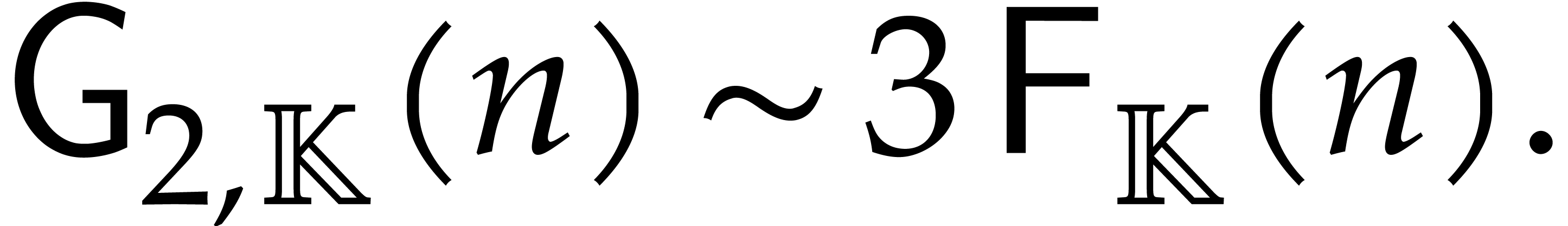

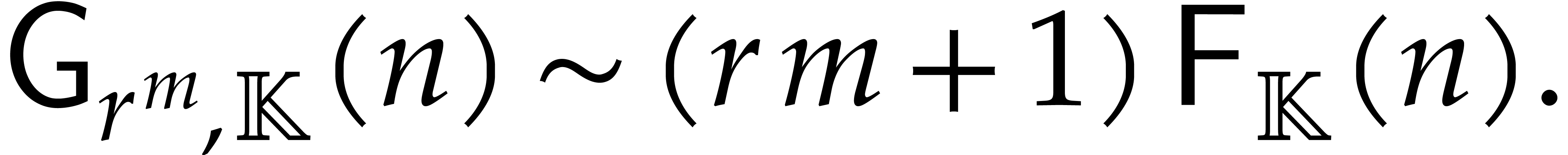

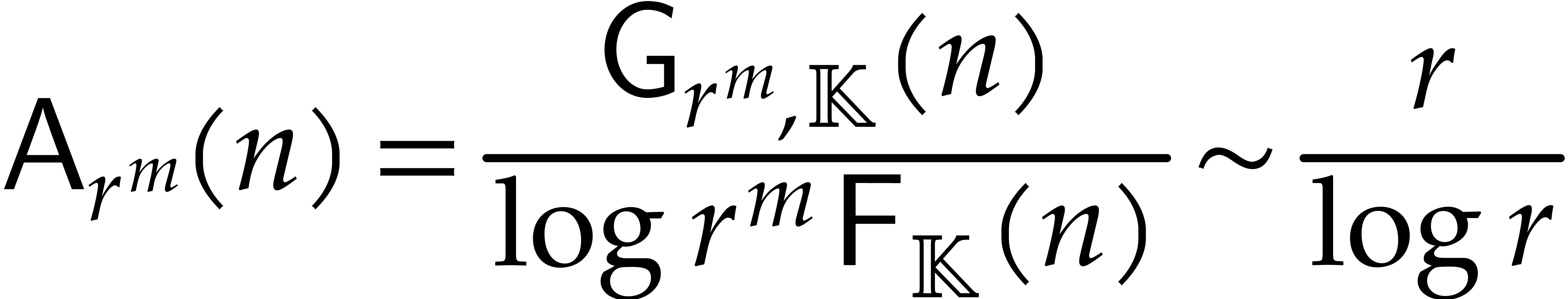

One goal we have is to determine how much faster the Tangent Graeffe (TG) root finding algorithm is in practice when compared with the Cantor-Zassenhaus (CZ) algorithm which is implemented in many computer algebra systems. In Table 1 we present timings comparing our sequential implementation of the TG algorithm with Magma's implementation of the CZ algorithm.

|

||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

,

Magma uses Shoup's factorization algorithm from [

31

]. For our input

,

Magma uses Shoup's factorization algorithm from [

31

]. For our input

,

with

,

with

distinct linear factors, Shoup uses the Cantor–Zassenhaus equal

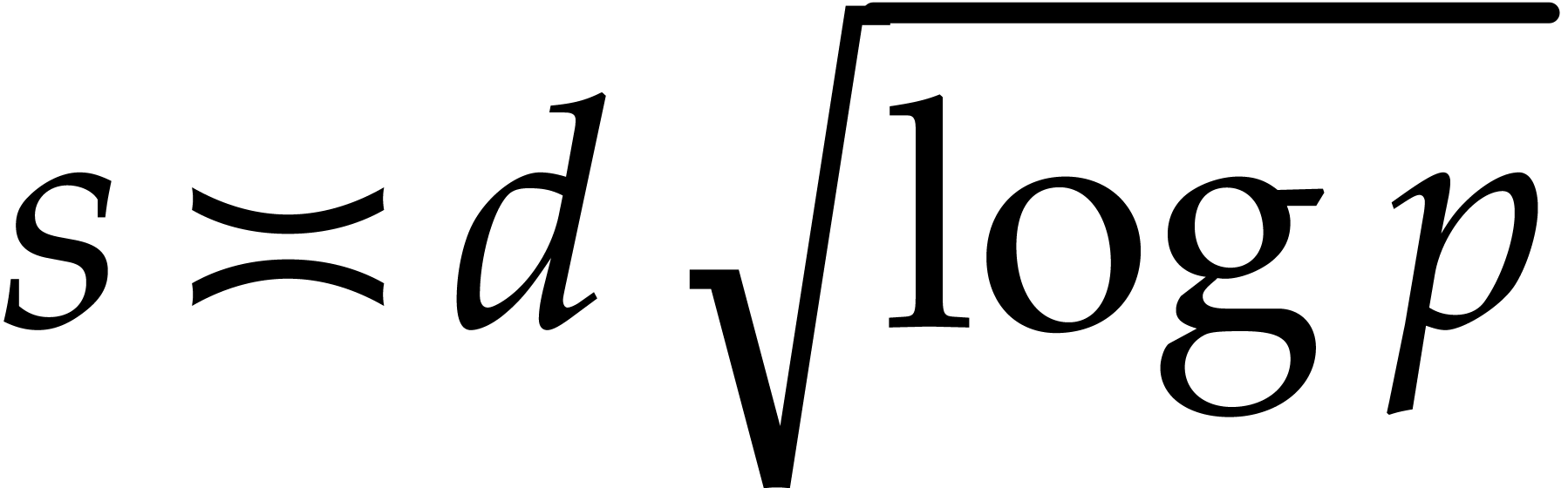

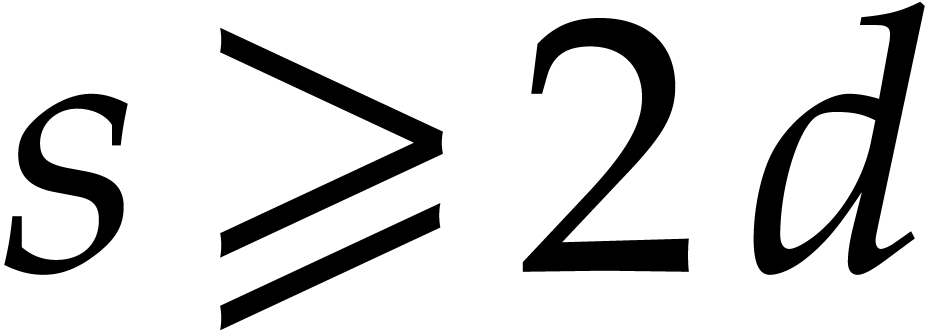

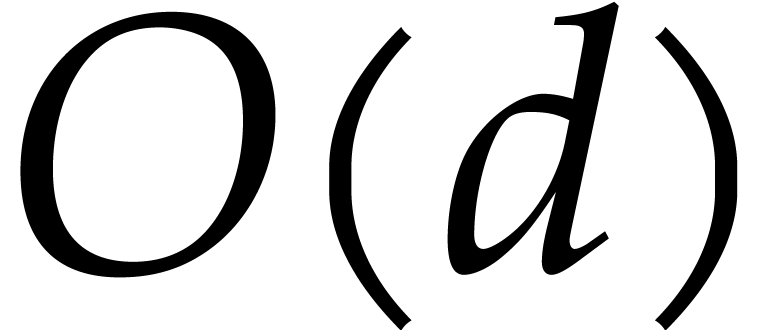

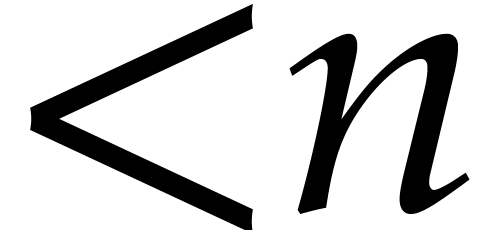

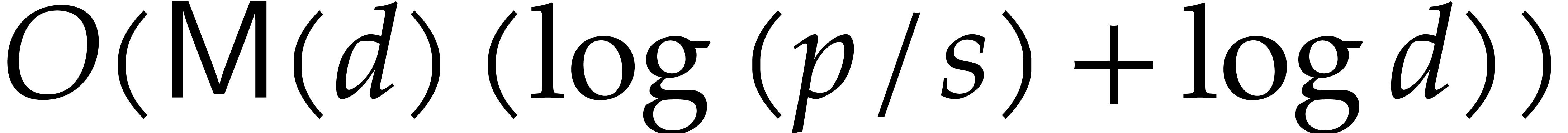

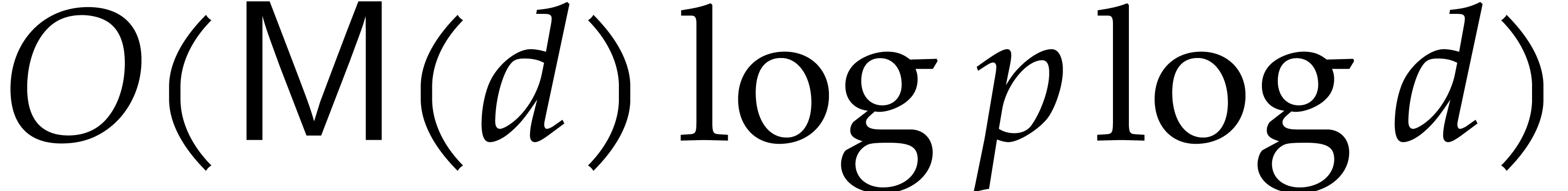

degree factorization method. Recall that the average complexity of TG is

distinct linear factors, Shoup uses the Cantor–Zassenhaus equal

degree factorization method. Recall that the average complexity of TG is

and of CZ is

and of CZ is

.

We also wanted to attempt a parallel implementation. To do this we used

the MIT Cilk C compiler from [

10

]. The Cilk C compiler provides a simple fork-join model of parallelism.

Unlike the CZ algo- rithm, TG has no gcd computations that are hard to

parallelize. We present some initial parallel timing data in Table

2

.

.

We also wanted to attempt a parallel implementation. To do this we used

the MIT Cilk C compiler from [

10

]. The Cilk C compiler provides a simple fork-join model of parallelism.

Unlike the CZ algo- rithm, TG has no gcd computations that are hard to

parallelize. We present some initial parallel timing data in Table

2

.

The timings in Table 1 are sequential timings obtained on a a Linux server with an Intel Xeon E5-2660 CPU with 8 cores. In Table 1 the time in column “first” is for the first application of the TG algorithm (steps 1 – 9 of Algorithm 1 ) showing that it obtains about 69% of the roots. The time in column “total” is the total time for the algorithm. Columns step 5 , step 6 , and step 9 report the time spent in steps 5 , 6 , and 9 in Algorithm 1 and do not count time in the recursive call in step 10 .

|

||||||||||||||||||||||||||||||||||||||||||||||||||||||

The Magma column timing is for Magma's Factorization

command. The timings for Magma version V2.25-3 suggest that Magma's CZ

implementation involves a subalgorithm with quadratic asymptotic

complexity. Indeed it turns out that the author of the code implemented

all of the sub-quadratic polynomial arithmetic correctly, as

demonstrated by the second set of timings for Magma in column V2.25-5,

but inserted the  linear factors found into a

list using linear insertion! Allan Steel of the Magma group identified

and fixed the offending subroutine for Magma version V2.25-5. The

timings show that TG is faster than CZ by a factor of 76.6 (=8.43/0.11)

to 146.3 (=2809/19.2).

linear factors found into a

list using linear insertion! Allan Steel of the Magma group identified

and fixed the offending subroutine for Magma version V2.25-5. The

timings show that TG is faster than CZ by a factor of 76.6 (=8.43/0.11)

to 146.3 (=2809/19.2).

To implement the Taylor shift  in step 3,

we used the

in step 3,

we used the  method from [1, Lemma

3]. The better known method presented in [11] is

method from [1, Lemma

3]. The better known method presented in [11] is  . For step 5 we use

Algorithm 3 as presented. It has complexity

. For step 5 we use

Algorithm 3 as presented. It has complexity  . To evaluate

. To evaluate  and

and  in step 6 in

in step 6 in  we

used the Bluestein transformation [3]. In step 9

to compute the product

we

used the Bluestein transformation [3]. In step 9

to compute the product  , for

, for

roots, we used the

roots, we used the  tree

multiplication algorithm [11]. The division in step 10 is done in

tree

multiplication algorithm [11]. The division in step 10 is done in  with the fast division.

with the fast division.

The sequential timings in Tables 1 and 2 show

that steps 5, 6 and 9 account for

about 91% of the total time. We parallelized these three steps as

follows. For step 5, the two forward and two inverse FFTs

are done in parallel. We also parallelized our radix 2 FFT by

parallelizing recursive calls for size  and the

main loop in blocks of size

and the

main loop in blocks of size  as done in [26].

For step 6 there are three applications of Bluestein to

compute

as done in [26].

For step 6 there are three applications of Bluestein to

compute  ,

,  and

and  . We parallelized these

(thereby doubling the overall space used by our implementation). The

main computation in the Bluestein transformation is a polynomial

multiplication of two polynomials of degree

. We parallelized these

(thereby doubling the overall space used by our implementation). The

main computation in the Bluestein transformation is a polynomial

multiplication of two polynomials of degree  . The two forward FFTs are done in parallel and the

FFTs themselves are parallelized as for step 5. For the

product in step 9 we parallelize the two recursive calls in

the tree multiplication for large sizes and again, the FFTs are

parallelized as for step 5.

. The two forward FFTs are done in parallel and the

FFTs themselves are parallelized as for step 5. For the

product in step 9 we parallelize the two recursive calls in

the tree multiplication for large sizes and again, the FFTs are

parallelized as for step 5.

To improve parallel speedup we also parallelized the polynomial

multiplication in step 3 and the computation of the roots

in step 8. Although step 8 is  , it is relatively expensive because of two

inverse computations in

, it is relatively expensive because of two

inverse computations in  .

Because we have not parallelized about 5% of the computation the maximum

parallel speedup we can obtain is a factor of

.

Because we have not parallelized about 5% of the computation the maximum

parallel speedup we can obtain is a factor of  . The best overall parallel speedup we obtained is a

factor of 4.6=1465/307.7 for

. The best overall parallel speedup we obtained is a

factor of 4.6=1465/307.7 for  .

.

In Cilk, for each recursive C subroutine we wish to parallelize, one first needs to make a parallel version of that routine. For large recursive calls, the parallel version does them in parallel. For smaller recursive calls, the parallel version needs to call the sequential version of the code to avoid the Cilk process management overhead. The cutoff for the size of the input for which we need to use the sequential code has to be determined by experiment.

A. V. Aho, K. Steiglitz, and J. D. Ullman. Evaluating polynomials on a fixed set of points. SIAM Journ. of Comp., 4:533–539, 1975.

M. Ben-Or and P. Tiwari. A deterministic algorithm for sparse multivariate polynomial interpolation. In STOC '88: Proceedings of the twentieth annual ACM symposium on Theory of computing, pages 301–309. ACM Press, 1988.

Leo I. Bluestein. A linear filtering approach to the computation of discrete Fourier transform. IEEE Transactions on Audio and Electroacoustics, 18(4):451–455, 1970.

A. Bostan, G. Lecerf, and É. Schost. Tellegen's principle into practice. In ISSAC '03: Proceedings of the 2003 International Symposium on Symbolic and Algebraic Computation, pages 37–44. ACM Press, 2003.

R. P. Brent, F. G. Gustavson, and D. Y. Y. Yun. Fast solution of Toeplitz systems of equations and computation of Padé approximants. J. Algorithms, 1(3):259–295, 1980.

J. Canny, E. Kaltofen, and Y. Lakshman. Solving systems of non-linear polynomial equations faster. In Proceedings of the ACM-SIGSAM 1989 International Symposium on Symbolic and Algebraic Computation, pages 121–128. ACM Press, 1989.

D. G. Cantor and H. Zassenhaus. A new algorithm for factoring polynomials over finite fields. Math. Comp., 36(154):587–592, 1981.

A. Díaz and E. Kaltofen. FOXFOX: a system for manipulating symbolic objects in black box representation. In ISSAC '98: Proceedings of the 1998 International Symposium on Symbolic and Algebraic Computation, pages 30–37. ACM Press, 1998.

T. S. Freeman, G. M. Imirzian, E. Kaltofen, and Y. Lakshman. DAGWOOD: a system for manipulating polynomials given by straight-line programs. ACM Trans. Math. Software, 14:218–240, 1988.

M. Frigo, C.E. Leisorson, and R.K. Randall. The implementation of the Cilk-5 multithreaded language. In Proceedings of PLDI 1998, pages 212–223. ACM, 1998.

J. von zur Gathen and J. Gerhard. Modern computer algebra. Cambridge University Press, New York, 3rd edition, 2013.

B. Grenet, J. van der Hoeven, and G. Lecerf. Randomized root finding over finite fields using tangent Graeffe transforms. In Proc. ISSAC '15, pages 197–204. New York, NY, USA, 2015. ACM.

B. Grenet, J. van der Hoeven, and G. Lecerf. Deterministic root finding over finite fields using Graeffe transforms. AAECC, 27(3):237–257, 2016.

J. van der Hoeven. The truncated Fourier transform and applications. In J. Gutierrez, editor, Proc. ISSAC 2004, pages 290–296. Univ. of Cantabria, Santander, Spain, July 4–7 2004.

J. van der Hoeven and G. Lecerf. On the bit-complexity of sparse polynomial and series multiplication. J. Symbolic Comput., 50:227–254, 2013.

J. van der Hoeven and G. Lecerf. Sparse polynomial interpolation in practice. ACM Commun. Comput. Algebra, 48(3/4):187–191, 2015.

J. van der Hoeven et al. GNU TeXmacs. http://www.texmacs.org, 1998.

J. Hu and M. B. Monagan. A fast parallel sparse polynomial GCD algorithm. In S. A. Abramov, E. V. Zima, and X.-S. Gao, editors, Proc. ISSAC '16, pages 271–278. ACM, 2016.

M. A. Huang and A. J. Rao. Interpolation of sparse multivariate polynomials over large finite fields with applications. In SODA '96: Proceedings of the seventh annual ACM-SIAM symposium on Discrete algorithms, pages 508–517. Philadelphia, PA, USA, 1996. Society for Industrial and Applied Mathematics.

M. Javadi and M. Monagan. Parallel sparse polynomial interpolation over finite fields. In M. Moreno Maza and J.-L. Roch, editors, PASCO '10: Proceedings of the 4th International Workshop on Parallel and Symbolic Computation, pages 160–168. ACM Press, 2010.

E. Kaltofen. Computing with polynomials given by straight-line programs I: greatest common divisors. In STOC '85: Proceedings of the Seventeenth Annual ACM Symposium on Theory of Computing, pages 131–142. ACM Press, 1985.

E. Kaltofen, Y. N. Lakshman, and J.-M. Wiley. Modular rational sparse multivariate polynomial interpolation. In ISSAC '90: Proceedings of the International Symposium on Symbolic and Algebraic Computation, pages 135–139. New York, NY, USA, 1990. ACM Press.

E. Kaltofen and B. M. Trager. Computing with polynomials given by black boxes for their evaluations: greatest common divisors, factorization, separation of numerators and denominators. J. Symbolic Comput., 9(3):301–320, 1990.

E. Kaltofen and L. Yagati. Improved sparse multivariate polynomial interpolation algorithms. In ISSAC '88: Proceedings of the International Symposium on Symbolic and Algebraic Computation, pages 467–474. Springer Verlag, 1988.

R. Larrieu. The truncated Fourier transform for mixed radices. In Proc. ISSAC '17, pages 261–268. New York, NY, USA, 2017. ACM.

M. Law and M. Monagan. A parallel implementation for polynomial multiplication modulo a prime. In Proc. of PASCO 2015, pages 78–86. ACM, 2015.

R. Moenck. Fast computation of GCDs. In Proc. of the 5th ACM Annual Symposium on Theory of Computing, pages 142–171. New York, 1973. ACM Press.

H. Murao and T. Fujise. Modular algorithm for sparse multivariate polynomial interpolation and its parallel implementation. JSC, 21:377–396, 1996.

R. Prony. Essai expérimental et analytique sur les lois de la dilatabilité des fluides élastiques et sur celles de la force expansive de la vapeur de l'eau et de la vapeur de l'alkool, à différentes températures. J. de l'École Polytechnique Floréal et Plairial, an III, 1(cahier 22):24–76, 1795.

D. S. Roche. What can (and can't) we do with sparse polynomials? In C. Arreche, editor, ISSAC '18: Proceedings of the 2018 ACM International Symposium on Symbolic and Algebraic Computation, pages 25–30. ACM Press, 2018.

V. Shoup. A new polynomial factorization and its implementation. J. Symbolic Computation, 20(4):363–397, 1995.